9th International PhD Workshop on Systems and Control: Young Generation Viewpoint

1. - 3. October 2008, Izola, Slovenia

Distance measuring based on stereoscopic pictures

Jernej Mrovlje¹ and Damir Vrančić²

Abstract: Stereoscopy is a technique used for recording and representing stereoscopic (3D) images. It can create an illusion of depth using two pictures taken at slightly different positions. There are two possible ways of taking stereoscopic pictures: by using special two-lens stereo cameras or systems with two single-lens cameras joined together. Stereoscopic pictures allow us to calculate the distance from the camera(s) to a chosen object within the picture. The distance is calculated from differences between the pictures and additional technical data such as the focal length and the distance between the cameras. The object is selected in the left picture, while the same object in the right picture is detected automatically by an optimisation algorithm that searches for the minimum difference between both pictures. The object's position can then be calculated by geometrical derivation. The accuracy of the position depends on picture resolution, optical distortions and the distance between the cameras. The results showed that the calculated distance to the subject is relatively accurate.

I. INTRODUCTION

Methods for measuring the distance to objects can be divided into active and passive ones. Active methods measure the distance by sending a signal to the object [2, 4] (e.g. a laser beam, radio signals or ultrasound), while passive methods only receive information about the object's position (usually by light). Among the passive methods, the most popular are those relying on stereoscopic measurement. The main characteristic of the method is the use of two cameras. The object's distance can be calculated from the relative difference of the object's position in the two camera pictures [6]. The paper shows an implementation of such an algorithm in the Matlab program package and gives results of experiments based on stereoscopic pictures taken at various locations.

II. STEREOSCOPIC MEASUREMENT METHOD

Stereoscopy is a technique used for recording and representing stereoscopic (3D) images. It can create an illusion of depth using two pictures taken at slightly different positions. In 1838, British scientist Charles Wheatstone invented stereoscopic pictures and viewing devices [1, 5, 7].

A stereoscopic picture can be taken with a pair of cameras, similarly to our own eyes. The most important restrictions in taking a pair of stereoscopic pictures are the following: the cameras should be horizontally aligned (see Figure 1), and the pictures should be taken at the same instant [3]. Note that the latter requirement is less important for static scenes (where no object is moving).

Fig. 1. Proper alignment of the cameras (a) and alignments with vertical errors (b) and (c).

¹ Jernej Mrovlje is with the Faculty of Electrical Engineering, University of Ljubljana, Slovenia (e-mail: jernej.mrovlje@gmail.com).

² Damir Vrančić is with the Department of Systems and Control, J. Stefan Institute, Jamova 39, 1000 Ljubljana, Slovenia (e-mail: damir.vrancic@ijs.si).

Stereoscopic pictures allow us to calculate the distance between the camera(s) and a chosen object within the picture. Let the right picture be taken at location $S_R$ and the left picture at location $S_L$. $B$ represents the distance between the cameras and $\varphi_0$ is the cameras' horizontal angle of view (see Figure 2). The object's position (distance $D$) can be calculated by geometrical derivation. If the optical axes of the cameras are parallel, we can express the distance $B$ as a sum of distances $B_1$ and $B_2$:

$$B = B_1 + B_2 = D\tan\varphi_1 + D\tan\varphi_2, \tag{1}$$

where $\varphi_1$ and $\varphi_2$ are the angles between the optical axis of each camera lens and the chosen object. Distance $D$ is as follows:

$$D = \frac{B}{\tan\varphi_1 + \tan\varphi_2}. \tag{2}$$
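As a quick numerical check of Equation (2), the following minimal Python sketch evaluates the distance from the two angles. The function name and the sample geometry are illustrative assumptions, not part of the paper.

```python
import math


def distance_from_angles(base_m, phi1_rad, phi2_rad):
    """Equation (2): D = B / (tan(phi1) + tan(phi2)), parallel optical axes."""
    denom = math.tan(phi1_rad) + math.tan(phi2_rad)
    if denom <= 0.0:
        raise ValueError("tan(phi1) + tan(phi2) must be positive")
    return base_m / denom


# Hypothetical geometry: the object is 10 m away, 0.2 m off the left camera's
# optical axis and 0.3 m off the right camera's axis, so B = 0.2 + 0.3 = 0.5 m.
phi1 = math.atan2(0.2, 10.0)
phi2 = math.atan2(0.3, 10.0)
print(distance_from_angles(0.5, phi1, phi2))
```

The angles are built from a known geometry here, so the function should return the 10 m used to construct them (up to rounding).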


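Fig. 2. The picture of the object (tree) taken with two cameras.

Using Figures 3 and 4 and basic trigonometry, we find:

$$\frac{x_1}{x_0/2} = \frac{\tan\varphi_1}{\tan(\varphi_0/2)}, \tag{3}$$

$$\frac{-x_2}{x_0/2} = \frac{\tan\varphi_2}{\tan(\varphi_0/2)}, \tag{4}$$

where $\varphi_0$ is the cameras' horizontal angle of view, $x_0$ is the horizontal picture resolution (in pixels), and $x_1$ and $x_2$ are the horizontal positions of the object in the left and right picture, measured from the picture centre.

Fig. 3. The picture of the object (tree) taken with the left camera.

Substituting (3) and (4) into (2), and writing the object's horizontal positions in the left and right picture as $x_L = x_1$ and $x_D = x_2$, distance $D$ can be calculated as follows:

$$D = \frac{B\,x_0}{2\tan(\varphi_0/2)\,(x_L - x_D)}. \tag{5}$$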
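Expression (5) is straightforward to evaluate once the camera parameters are known. The sketch below is a minimal Python illustration; the function name and the camera values (2000 pixels wide, 50 degree angle of view, 0.5 m base) are assumptions chosen for the example and are not taken from the paper.

```python
import math


def distance_from_disparity(base_m, width_px, fov_deg, disparity_px):
    """Equation (5): D = B * x0 / (2 * tan(phi0 / 2) * (xL - xD))."""
    if disparity_px <= 0:
        raise ValueError("disparity xL - xD must be positive")
    half_fov_rad = math.radians(fov_deg) / 2.0
    return base_m * width_px / (2.0 * math.tan(half_fov_rad) * disparity_px)


# Hypothetical camera: x0 = 2000 px, phi0 = 50 degrees, B = 0.5 m.
for disparity in (100, 50, 25):
    d = distance_from_disparity(0.5, 2000, 50.0, disparity)
    print(f"xL - xD = {disparity:3d} px -> D = {d:.2f} m")
```

Because $D$ is inversely proportional to $x_L - x_D$, halving the pixel difference doubles the computed distance, which is why distant objects are more sensitive to a one-pixel matching error.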

Therefore, if the distance between the cameras ($B$), the number of horizontal pixels ($x_0$), the viewing angle of the camera ($\varphi_0$) and the horizontal difference between the same object in both pictures ($x_L - x_D$) are known, then the distance to the object ($D$) can be calculated as given in expression (5).

Fig. 4. The picture of the object (tree) taken with the right camera.

The accuracy of the calculated position (distance $D$) depends on several variables. The location of the object in the right picture can be found to within an accuracy of one pixel (see Fig. 5). Each pixel corresponds to the following angle of view:

$$\Delta\varphi = \frac{\varphi_0}{x_0}. \tag{6}$$

Figure 5 shows the one-pixel angle of view $\Delta\varphi$, which results in the distance error $\Delta D$. In Figure 5 we find:


$$\frac{\tan\varphi}{\tan(\varphi - \Delta\varphi)} = \frac{\Delta D + D}{D}. \tag{7}$$

Using basic trigonometric identities, the distance error can be expressed as follows:

$$\Delta D = \frac{D^2}{B}\tan(\Delta\varphi). \tag{8}$$

Note that the actual error might be higher due to optical errors (barrel or pincushion distortion, aberration, etc.) [8].

Fig. 5. Distance error caused by 1 pixel positioning error.

III. OBJECT RECOGNITION

When an object is selected in the left picture, the same object has to be located automatically in the right picture. Let the square matrix $I_L$ represent the selected object in the left picture (see Figure 6). Knowing the properties of stereoscopic pictures (the objects are horizontally shifted), we can define a search area within the right picture, matrix $I_R$. The vertical dimensions of $I_R$ and $I_L$ should be the same, while the horizontal dimension of $I_R$ should be larger.

Fig. 6. Selected object in the left picture (red colour) and search area in the right picture (green colour).

Our next step is to find the location within the search area $I_R$ where the picture best fits matrix $I_L$. We do that by subtracting matrix $I_L$ from all possible submatrices (of the size of matrix $I_L$) within matrix $I_R$. When the size of matrix $I_L$ is $N \times N$ and the size of matrix $I_R$ is $M \times N$, where $M > N$, $M - N + 1$ submatrices have to be checked. Figure 7 shows the search process. The result of each subtraction is another matrix $I_i$, which tells us how similar the subtracted images are. The more similar the two subtracted images are, the lower the mean absolute value of matrix $I_i$. Figure 8 shows two examples of subtraction. The images in the first example do not match, while in the second example the images match almost perfectly.

Fig. 7. Finding the appropriate submatrix in the right picture (steps 1 to $M - N + 1$, each shifted by 1 px).

The matrix $I_k$ with the lowest mean absolute value of its elements represents the location where matrix $I_L$ best fits matrix $I_R$. This is also the location of the chosen object within the right picture. Knowing the location of the object in the left and right pictures allows us to calculate the horizontal distance between its positions in the two pictures:

$$x_L - x_D = \frac{N}{2} - \frac{M}{2} + k - 1. \tag{9}$$
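The search described in this section can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' Matlab implementation: pictures are taken to be grayscale and stored as lists of rows, the function names are hypothetical, and Equation (9) is used in the form $N/2 - M/2 + k - 1$.

```python
def best_match(left_patch, search_area):
    """Find the submatrix of search_area that best fits left_patch.

    left_patch:  N x N list of rows (matrix I_L).
    search_area: N rows by M columns (matrix I_R), with M >= N.
    Returns (k, errors): k is the 1-based index of the best submatrix and
    errors[i] is the mean absolute difference for horizontal shift i.
    """
    n = len(left_patch)
    m = len(search_area[0])
    if len(search_area) != n or m < n:
        raise ValueError("search area needs N rows and at least N columns")
    errors = []
    for shift in range(m - n + 1):  # M - N + 1 candidate submatrices
        total = 0.0
        for row_l, row_r in zip(left_patch, search_area):
            for col in range(n):
                total += abs(row_r[shift + col] - row_l[col])
        errors.append(total / (n * n))
    k = min(range(len(errors)), key=errors.__getitem__) + 1
    return k, errors


def disparity_from_match(n, m, k):
    """Equation (9): xL - xD = N/2 - M/2 + k - 1."""
    return n / 2 - m / 2 + k - 1


# Toy data (assumed): a 3 x 3 cross hidden in a 3 x 7 search area.
I_L = [[0, 9, 0],
       [9, 9, 9],
       [0, 9, 0]]
I_R = [[1, 1, 1, 0, 9, 0, 1],
       [1, 1, 1, 9, 9, 9, 1],
       [1, 1, 1, 0, 9, 0, 1]]
k, errors = best_match(I_L, I_R)
print(k, disparity_from_match(3, 7, k))
```

The exhaustive scan costs on the order of $(M - N + 1) N^2$ operations, which is acceptable for a single user-selected object. The resulting difference can then be passed to expression (5).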


Example 1:

I Rj

E j = mean( I R j I L )

I j = I Rj I L

IL

Example 2 :

I Rk

I k = I Rk I L IL

Ek = mean( I Rk I L )

Fig. 10. Picture with targets (small white panel boards) located at 10m, 20m, 30m, 40m, 50m and 60m.

Fig. 8. The difference between selected object in the left picture and submatrix on the right picture.

N / 2 + k 1

CL x L xD

CD

Fig. 11. Detailed view of Figure 10 (markers).

M / 2

N / 2

N /2

M /2

Fig. 9. Calculation of the horizontal distance of the object between left and right picture.

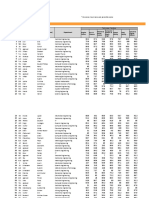

After adjusting the cameras to be parallel, the distances were calculated in program package Matlab. The calculated distances for different locations and different markers (10m, 20m, 30m, 40m, 50m and 60m) are given in Tables 1 to 6.

The calculated difference (9) can be used in calculation of objects distance (5).

Table 1. Calculated distance to marker at 10m in various locations

base

Marker at 10m location 1 10,81 10,63 10,45 10,09 10,18 10,18 location 2 10,58 10,40 10,04 10,04 10,03 9,84 location 3 10,01 9,77 10,01 9,81 9,90 9,96 location 4 10,34 10,47 10,28 10,13 10,18 10,13

IV. EXAMPLES Four experiments have been conducted in order to test the accuracy of our distance-measuring method. We have used six markers placed at distances 10m, 20m, 30m, 40m, 50m and 60m. Those markers have been placed at various locations in space (nature) and then photographed by two cameras. One of the pictures (with detail view) is shown in Figs. 10 and 11.

[m] 0,2 0,3 0,4 0,5 0,6 0,7
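To make the trend in Table 1 easier to read, the following short Python sketch computes the mean absolute deviation from the nominal 10 m for each base. The numbers are the Table 1 values; the summary statistic and the variable names are our own choice for illustration and do not appear in the paper.

```python
# Table 1: calculated distance [m] to the marker at 10 m.
# Keys: base B [m]; values: results at locations 1 to 4.
TABLE_1 = {
    0.2: (10.81, 10.58, 10.01, 10.34),
    0.3: (10.63, 10.40, 9.77, 10.47),
    0.4: (10.45, 10.04, 10.01, 10.28),
    0.5: (10.09, 10.04, 9.81, 10.13),
    0.6: (10.18, 10.03, 9.90, 10.18),
    0.7: (10.18, 9.84, 9.96, 10.13),
}
TRUE_DISTANCE_M = 10.0


def mean_abs_error(values, true_value):
    """Mean absolute deviation of the measurements from the true distance."""
    return sum(abs(v - true_value) for v in values) / len(values)


for base, values in TABLE_1.items():
    err = mean_abs_error(values, TRUE_DISTANCE_M)
    print(f"B = {base:.1f} m: mean |error| = {err:.3f} m")
```

For this marker the mean absolute error falls from roughly 0.44 m at a base of 0.2 m to about 0.11 to 0.13 m for bases of 0.5 m and above.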


Table 2. Calculated distance to marker at 20 m in various locations

| base [m] | location 1 [m] | location 2 [m] | location 3 [m] | location 4 [m] |
|----------|----------------|----------------|----------------|----------------|
| 0.2 | 21.23 | 21.57 | 21.57 | 20.56 |
| 0.3 | 20.86 | 21.87 | 21.87 | 20.68 |
| 0.4 | 20.81 | 20.49 | 20.49 | 20.33 |
| 0.5 | 20.04 | 20.62 | 20.62 | 20.62 |
| 0.6 | 20.19 | 20.59 | 20.59 | 20.14 |
| 0.7 | 20.44 | 20.41 | 20.41 | 19.86 |

Table 3. Calculated distance to marker at 30 m in various locations

| base [m] | location 1 [m] | location 2 [m] | location 3 [m] | location 4 [m] |
|----------|----------------|----------------|----------------|----------------|
| 0.2 | 30.80 | 32.47 | 33.02 | 29.57 |
| 0.3 | 31.81 | 31.56 | 27.97 | 31.13 |
| 0.4 | 32.05 | 30.93 | 32.12 | 30.65 |
| 0.5 | 30.25 | 31.55 | 29.22 | 29.77 |
| 0.6 | 30.88 | 31.58 | 30.06 | 30.80 |
| 0.7 | 30.74 | 30.33 | 31.25 | 30.71 |

Table 4. Calculated distance to marker at 40 m in various locations

| base [m] | location 1 [m] | location 2 [m] | location 3 [m] | location 4 [m] |
|----------|----------------|----------------|----------------|----------------|
| 0.2 | 42.49 | 45.28 | 40.29 | 37.98 |
| 0.3 | 41.67 | 42.72 | 40.76 | 39.78 |
| 0.4 | 42.04 | 40.86 | 38.61 | 40.93 |
| 0.5 | 40.17 | 41.40 | 40.25 | 38.42 |
| 0.6 | 40.69 | 41.34 | 41.08 | 40.42 |
| 0.7 | 41.12 | 40.84 | 39.73 | 40.05 |

Table 5. Calculated distance to marker at 50 m in various locations

| base [m] | location 1 [m] | location 2 [m] | location 3 [m] | location 4 [m] |
|----------|----------------|----------------|----------------|----------------|
| 0.2 | 50.70 | 57.53 | 47.38 | 46.80 |
| 0.3 | 54.04 | 52.74 | 45.92 | 50.00 |
| 0.4 | 53.10 | 51.16 | 57.50 | 50.15 |
| 0.5 | 51.22 | 52.50 | 49.01 | 48.21 |
| 0.6 | 51.41 | 52.34 | 51.48 | 50.32 |
| 0.7 | 52.30 | 52.05 | 53.85 | 50.07 |

Table 6. Calculated distance to marker at 60 m in various locations

| base [m] | location 1 [m] | location 2 [m] | location 3 [m] | location 4 [m] |
|----------|----------------|----------------|----------------|----------------|
| 0.2 | 63.36 | 67.69 | 70.67 | 53.26 |
| 0.3 | 63.56 | 64.63 | 59.29 | 64.99 |
| 0.4 | 64.97 | 60.62 | 60.22 | 60.95 |
| 0.5 | 61.95 | 63.97 | 62.01 | 59.42 |
| 0.6 | 62.07 | 63.75 | 62.13 | 61.21 |
| 0.7 | 61.57 | 61.40 | 61.75 | 60.55 |

Fig. 12. Calculated distances to marker set at 10 m at 4 different locations. (Horizontal axis: distance between cameras (B) [m]; vertical axis: calculated distance (D) [m]; one curve per location.)

Fig. 13. Calculated distances to marker set at 20 m at 4 different locations. (Same axes as Fig. 12.)

Figures 12 to 17 show the results graphically. It can be seen that the distance to the subjects is calculated quite well, taking into account the cameras' resolution, barrel distortion [8] and the chosen base. As expected, better accuracy is obtained with a wider base between the cameras.
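The dependence of accuracy on the base follows directly from Equations (6) and (8). The sketch below tabulates the predicted one-pixel distance error for the bases used in the experiments. The camera parameters (50 degree angle of view, 2000 pixels wide) are hypothetical, since the values for the cameras used in the experiments are not given here, so only the trend is meaningful and not the absolute numbers.

```python
import math


def pixel_angle(fov_deg, width_px):
    """Equation (6): angle of view covered by one pixel, in radians."""
    return math.radians(fov_deg) / width_px


def distance_error(distance_m, base_m, fov_deg, width_px):
    """Equation (8): dD = D^2 / B * tan(dphi) for a one-pixel error."""
    return distance_m ** 2 / base_m * math.tan(pixel_angle(fov_deg, width_px))


# Hypothetical camera parameters (assumed, not taken from the paper).
FOV_DEG = 50.0
WIDTH_PX = 2000

for base in (0.2, 0.3, 0.4, 0.5, 0.6, 0.7):
    near = distance_error(10.0, base, FOV_DEG, WIDTH_PX)
    far = distance_error(60.0, base, FOV_DEG, WIDTH_PX)
    print(f"B = {base:.1f} m: dD(10 m) = {near:.3f} m, dD(60 m) = {far:.3f} m")
```

Under Equation (8) the error is inversely proportional to the base and grows with the square of the distance, so the marker at 60 m is expected to show 36 times the one-pixel error of the marker at 10 m for the same base.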


Fig. 14. Calculated distances to marker set at 30 m at 4 different locations.

Fig. 15. Calculated distances to marker set at 40 m at 4 different locations.

Fig. 16. Calculated distances to marker set at 50 m at 4 different locations.

Fig. 17. Calculated distances to marker set at 60 m at 4 different locations.

(In Figs. 14 to 17 the horizontal axis is the distance between cameras (B) [m] and the vertical axis is the calculated distance (D) [m], with one curve per location.)

V. CONCLUSION

The proposed method for non-invasive distance measurement is based on pictures taken from two horizontally displaced cameras. The user selects the object in the left camera picture and the algorithm finds the matching object in the right camera picture. From the displacement of the same object in both pictures, the distance to the object can be calculated. Although the method is based on a relatively simple algorithm, the calculated distance is still quite accurate. Better results are obtained with a wider base (distance between the cameras), in accordance with the theoretical derivations. In future research, the proposed method will be tested on other target objects. The influence of lens barrel distortion on measurement accuracy will be studied as well.

REFERENCES

[1] M. Vidmar, ABC sodobne stereofotografije z maloslikovnimi kamerami, Cetera, 1998.
[2] H. Walcher, Position sensing: Angle and distance measurement for engineers, Second edition, Butterworth-Heinemann Ltd., 1994.
[3] D. Vrančić and S. L. Smith, Permanent synchronization of camcorders via LANC protocol, Stereoscopic Displays and Virtual Reality Systems XIII, 16-19 January 2006, San Jose, California, USA (SPIE, vol. 6055).
[4] Navigation 2, Radio Navigation, Revised edition, Oxford Aviation Training, 2004.
[5] P. Gedei, Ena in ena je tri, Monitor, July 2006, http://www.monitor.si/clanek/ena-in-ena-je-tri/, (21.12.2007).
[6] J. Carnicelli, Stereo vision: measuring object distance using pixel offset, http://www.alexandria.nu/ai/blog/entry.asp?E=32, (29.11.2007).
[7] A. Klein, Questions and answers about stereoscopy, http://www.stereoscopy.com/faq/index.html, (28.1.2007).
[8] A. Woods, Image distortions and stereoscopic video systems, http://www.3d.curtin.edu.au/spie93pa.html, (27.1.2007).
