4.1 Computer Memory System Overview

Uploaded by mtenay1

William Stallings Computer Organization and Architecture 6th Edition Chapter 4 Cache Memory

Overview
In this chapter we will study:
4.1 COMPUTER MEMORY SYSTEM OVERVIEW
4.2 CACHE MEMORY PRINCIPLES
4.3 ELEMENTS OF CACHE DESIGN

2004-09-30

4.1 COMPUTER MEMORY SYSTEM OVERVIEW

Characteristics of memory system
  Location
  Capacity
  Unit of transfer
  Access method
  Memory hierarchy
  Performance
  Physical type

Location
  CPU
  Internal
  External

Capacity
  Word size: the natural unit of organization
  Number of words, or bytes

Unit of Transfer
  Internal: usually governed by data bus width
  External: usually a block, which is much larger than a word
  Addressable unit: smallest location which can be uniquely addressed (a word internally)

Access Methods (1)
  Sequential
    Start at the beginning and read through in order
    Access time depends on location of data and previous location
    e.g. tape
  Direct
    Individual blocks have unique address
    Access is by jumping to vicinity plus sequential search
    Access time depends on location and previous location
    e.g. disk

Access Methods (2)
  Random
    Individual addresses identify locations exactly
    Access time is independent of location or previous access
    e.g. RAM
  Associative
    Data is located by a comparison with contents of a portion of the store
    Access time is independent of location or previous access
    e.g. cache

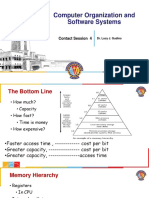

Memory Hierarchy - Diagram

Memory Hierarchy
  Registers: in CPU
  Internal or main memory: may include one or more levels of cache; "RAM"
  External memory: backing store

Hierarchy List
  Registers
  L1 Cache
  L2 Cache
  Main memory
  Disk cache
  Disk
  Optical
  Tape

Performance
  Access time (latency, in the case of non-random access): time between presenting the address and getting the valid data
  Memory cycle time (applies only to random access): time may be required for the memory to recover before the next access; cycle time is access + recovery
  Transfer rate: rate at which data can be moved

Physical Types
  Semiconductor: RAM
  Magnetic: Disk & Tape
  Optical: CD & DVD

The Bottom Line
  How much? Capacity
  How fast? Time is money
  How expensive?

So you want fast?
  It is possible to build a computer which uses only static RAM (see later)
  This would be very fast
  This would need no cache (how can you cache cache?)
  This would cost a very large amount

4.2 CACHE MEMORY PRINCIPLES
  Locality of reference
  What is cache?
  Cache operation
  Cache organization

Locality of Reference
  During the course of the execution of a program, memory references tend to cluster
  e.g. loops

What is Cache?
  Small amount of fast memory
  Sits between normal main memory and CPU
  May be located on CPU chip or module

Cache Operation
  CPU requests contents of memory location
  Check cache for this data
  If present, get from cache (fast)
  If not present, read required block from main memory to cache
  Then deliver from cache to CPU
  Cache includes tags to identify which block of main memory is in each cache slot
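The read sequence under Cache Operation can be sketched in a few lines of Python. This is a minimal illustration, not a model of real hardware: the class name `SimpleCache`, the 4-byte block size, and the unbounded number of slots (so no replacement is ever needed) are all assumptions made for the example. The block number serves as the tag.

```python
BLOCK_SIZE = 4  # bytes per block (assumed for this sketch)


class SimpleCache:
    """Toy cache: maps a block number (used as the tag) to a copy of that block."""

    def __init__(self, main_memory):
        self.main_memory = main_memory
        self.slots = {}    # tag (block number) -> block contents
        self.hits = 0
        self.misses = 0

    def read(self, address):
        block_number, offset = divmod(address, BLOCK_SIZE)
        if block_number in self.slots:
            # Tag match: the data is already present in the cache.
            self.hits += 1
        else:
            # Miss: read the whole block from main memory into the cache.
            self.misses += 1
            start = block_number * BLOCK_SIZE
            self.slots[block_number] = self.main_memory[start:start + BLOCK_SIZE]
        # In both cases the byte is delivered from the cache.
        return self.slots[block_number][offset]


memory = bytes(range(64))
cache = SimpleCache(memory)
values = [cache.read(a) for a in (8, 9, 10, 8, 40)]
print(values, cache.hits, cache.misses)  # [8, 9, 10, 8, 40] 3 2
```

Addresses 8, 9 and 10 fall in the same block, so only the first of them misses; this is the clustering that locality of reference describes.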

Cache Organization

4.3 ELEMENTS OF CACHE DESIGN
  Cache size
  Mapping function
  Replacement algorithms
  Write policy
  Line size (block size)
  Number of caches

Cache size
  Cost: more cache is expensive
  Speed: more cache is faster (up to a point); checking cache for data takes time

Mapping Function
  Cache of 64 KBytes
  Cache block of 4 bytes, i.e. cache is 16K (2^14) lines of 4 bytes
  16 MBytes main memory
  24-bit address (2^24 = 16M)
  Mapping techniques: direct mapping, associative mapping, set associative mapping

1. Direct Mapping
  Each block of main memory maps to only one cache line, i.e. if a block is in cache, it must be in one specific place
  Address is in two parts
  Least significant w bits identify unique word
  Most significant s bits specify one memory block
  The MSBs are split into a cache line field r and a tag of s-r (most significant)

Direct Mapping Cache Organization

Direct Mapping Example

Direct Mapping Address Structure
  Tag (s-r): 8 bits | Line or slot (r): 14 bits | Word (w): 2 bits
  24-bit address
  2-bit word identifier (4-byte block)
  22-bit block identifier: 8-bit tag (= 22 - 14) and 14-bit slot or line
  No two blocks in the same line have the same Tag field
  Check contents of cache by finding line and checking Tag

Direct Mapping Cache Line Table
  Cache line | Main memory blocks held
  0          | 0, m, 2m, 3m, ..., 2^s - m
  1          | 1, m+1, 2m+1, ..., 2^s - m + 1
  m-1        | m-1, 2m-1, 3m-1, ..., 2^s - 1
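As a rough sketch of the 8/14/2 direct-mapping address split, the following Python function extracts the three fields from a 24-bit address with shifts and masks. The function name `split_direct` is made up for this example; the address FFFFFC is the one used later in the associative mapping example.

```python
WORD_BITS = 2    # w: byte within a 4-byte block
LINE_BITS = 14   # r: selects one of 2**14 = 16K cache lines
TAG_BITS = 8     # s - r: remaining most significant bits of the 24-bit address


def split_direct(address):
    """Return (tag, line, word) for a 24-bit address under direct mapping."""
    word = address & ((1 << WORD_BITS) - 1)
    line = (address >> WORD_BITS) & ((1 << LINE_BITS) - 1)
    tag = address >> (WORD_BITS + LINE_BITS)
    return tag, line, word


# 64 KByte cache divided into 4-byte lines gives 16K lines.
assert (64 * 1024) // 4 == 1 << LINE_BITS
# The three fields together cover the whole 24-bit address.
assert TAG_BITS + LINE_BITS + WORD_BITS == 24

tag, line, word = split_direct(0xFFFFFC)
print(f"tag={tag:02X} line={line:04X} word={word}")  # tag=FF line=3FFF word=0
```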

Direct Mapping Summary

  Address length = (s + w) bits
  Number of addressable units = 2^(s+w) words or bytes
  Block size = line size = 2^w words or bytes
  Number of blocks in main memory = 2^(s+w) / 2^w = 2^s
  Number of lines in cache = m = 2^r
  Size of tag = (s - r) bits

Direct Mapping pros & cons
  Simple
  Inexpensive
  Fixed location for given block: if a program repeatedly accesses 2 blocks that map to the same line, the cache miss rate is very high
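The drawback of the fixed location can be made concrete with a small miss counter. This is only a sketch under the 16K-line, 4-byte-block configuration used in this section; the function `count_misses` and the two access patterns are invented for illustration.

```python
NUM_LINES = 1 << 14   # 16K lines, as in the example configuration
BLOCK_SIZE = 4        # bytes per block


def count_misses(addresses):
    """Count misses in a direct-mapped cache that holds one tag per line."""
    tags = {}             # line number -> tag currently held in that line
    misses = 0
    for address in addresses:
        block = address // BLOCK_SIZE
        line, tag = block % NUM_LINES, block // NUM_LINES
        if tags.get(line) != tag:
            misses += 1
            tags[line] = tag   # the new block evicts whatever the line held
    return misses


# 0x000000 and 0x010000 are 64 KBytes apart, so both map to line 0.
conflicting = [0x000000, 0x010000] * 5
# 0x000000 and 0x000004 map to lines 0 and 1 and can coexist.
friendly = [0x000000, 0x000004] * 5

print(count_misses(conflicting), count_misses(friendly))  # 10 2
```

In the conflicting pattern every access evicts the block needed by the next one, so all ten accesses miss even though the rest of the cache is empty.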

2. Associative Mapping
  A main memory block can load into any line of cache
  Memory address is interpreted as tag and word
  Tag uniquely identifies block of memory
  Every line's tag is examined for a match
  Cache searching gets expensive

Fully Associative Cache Organization

Associative Mapping Example

Associative Mapping Address Structure
  Tag: 22 bits | Word: 2 bits
  22-bit tag stored with each 32-bit block of data
  Compare tag field with tag entry in cache to check for hit
  Least significant 2 bits of address identify which byte is required from the 32-bit (4-byte) data block
  e.g.
  Address | Tag    | Data     | Cache line
  FFFFFC  | FFFFFC | 24682468 | 3FFF
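A fully associative lookup can be sketched as a search over every line's tag. The sketch below is an assumption-laden illustration: the function `lookup` and the list-of-pairs representation are invented here, and the loop stands in for comparisons that hardware performs in parallel. Note that the code keeps the 22-bit tag right-aligned, which gives 3FFFFF for address FFFFFC; the example row above lists the tag as FFFFFC, which appears to be the same 22 bits left-aligned in a 24-bit field.

```python
WORD_BITS = 2  # the low 2 bits select a byte within the 4-byte block


def lookup(lines, address):
    """Search every line for a matching tag.

    `lines` is a list of (tag, block_bytes) pairs standing in for cache lines.
    Returns the selected byte on a hit, or None on a miss.
    """
    tag = address >> WORD_BITS                 # 22-bit tag, right-aligned
    word = address & ((1 << WORD_BITS) - 1)
    for line_tag, block in lines:              # hardware compares all tags at once
        if line_tag == tag:
            return block[word]
    return None


# One line holding the block that contains address FFFFFC.
lines = [(0x3FFFFF, bytes.fromhex("24682468"))]

print(hex(0xFFFFFC >> WORD_BITS))                         # 0x3fffff
print(lookup(lines, 0xFFFFFD), lookup(lines, 0x000000))   # 104 None
```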

Associative Mapping Summary

  Address length = (s + w) bits
  Number of addressable units = 2^(s+w) words or bytes
  Block size = line size = 2^w words or bytes
  Number of blocks in main memory = 2^(s+w) / 2^w = 2^s
  Number of lines in cache = undetermined
  Size of tag = s bits

3. Set Associative Mapping
  Cache is divided into a number of sets
  Each set contains a number of lines
  A given block maps to any line in a given set, e.g. block B can be in any line of set i
  e.g. 2 lines per set: 2-way associative mapping, where a given block can be in one of 2 lines in only one set

Two Way Set Associative Cache Organization

Two Way Set Associative Mapping Example

Set Associative Mapping Example
  13-bit set number
  Set number is the block number in main memory modulo 2^13
  Addresses that differ by a multiple of 2^15 (hex 8000), e.g. 000000, 008000, 010000, 018000, map to the same set

Set Associative Mapping Address Structure
  Tag: 9 bits | Set: 13 bits | Word: 2 bits
  Use set field to determine cache set to look in
  Compare tag field to see if we have a hit
  e.g.
  Address (tag, low 15 bits) | Tag | Data     | Set number
  1FF 7FFC                   | 1FF | 12345678 | 1FFF
  001 7FFC                   | 001 | 11223344 | 1FFF
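The 9/13/2 set associative split can be checked against the two example addresses with a short Python sketch. The helper names `split_set_associative` and `join` are invented for this illustration; `join` simply rebuilds a 24-bit address from the tag and the low 15 bits as they are written in the example.

```python
WORD_BITS = 2    # w
SET_BITS = 13    # d: 2**13 sets


def split_set_associative(address):
    """Return (tag, set_number, word) for a 24-bit address."""
    word = address & ((1 << WORD_BITS) - 1)
    set_number = (address >> WORD_BITS) & ((1 << SET_BITS) - 1)
    tag = address >> (WORD_BITS + SET_BITS)
    return tag, set_number, word


def join(tag, low15):
    """Rebuild a 24-bit address from a 9-bit tag and the low 15 bits (set + word)."""
    return (tag << (WORD_BITS + SET_BITS)) | low15


for tag, low15 in ((0x1FF, 0x7FFC), (0x001, 0x7FFC)):
    t, s, w = split_set_associative(join(tag, low15))
    print(f"tag={t:03X} set={s:04X} word={w}")
# tag=1FF set=1FFF word=0
# tag=001 set=1FFF word=0
```

Both addresses select set 1FFF and differ only in the tag, so in a 2-way organization they can occupy the two lines of that set at the same time.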


Set Associative Mapping Summary

  Address length = (s + w) bits
  Number of addressable units = 2^(s+w) words or bytes
  Block size = line size = 2^w words or bytes
  Number of blocks in main memory = 2^s
  Number of lines in set = k
  Number of sets = v = 2^d
  Number of lines in cache = kv = k * 2^d
  Size of tag = (s - d) bits

Replacement Algorithms (1): Direct mapping
  No choice
  Each block only maps to one line
  Replace that line

Replacement Algorithms (2): Associative & Set Associative
  Hardware implemented algorithm (speed)
  Least recently used (LRU), e.g. in 2-way set associative: which of the 2 blocks is LRU?
  First in first out (FIFO): replace block that has been in cache longest
  Least frequently used: replace block which has had fewest hits
  Random

Write Policy
  Must not overwrite a cache block unless main memory is up to date
  Multiple CPUs may have individual caches
  I/O may address main memory directly
  Write through
  Write back


Write through
  All writes go to main memory as well as cache
  Multiple CPUs can monitor main memory traffic to keep local (to CPU) cache up to date
  Lots of traffic
  Slows down writes
  Remember bogus write through caches!

Write back
  Updates initially made in cache only
  Update bit for cache slot is set when update occurs
  Contents of memory and cache are different
  If block is to be replaced, write to main memory only if update bit is set
  I/O must access main memory through cache
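To tie LRU replacement and the write back policy together, here is a minimal sketch of a single set of a 2-way set associative cache. Everything in it is an assumption made for illustration: the class `TwoWaySet`, the dictionary standing in for main memory, one value per block, and the choice to load a block into the cache on a write miss. Real hardware tracks LRU in a 2-way set with a single bit rather than an ordered container.

```python
from collections import OrderedDict


class TwoWaySet:
    """One set of a 2-way set associative cache with LRU replacement and write back."""

    WAYS = 2

    def __init__(self, backing_store):
        self.backing_store = backing_store   # dict: tag -> value, stands in for main memory
        self.lines = OrderedDict()           # tag -> [value, update_bit]; LRU entry first
        self.writebacks = 0

    def _load(self, tag):
        if tag in self.lines:
            self.lines.move_to_end(tag)      # mark as most recently used
            return
        if len(self.lines) == self.WAYS:     # set is full: evict the LRU line
            old_tag, (value, updated) = self.lines.popitem(last=False)
            if updated:                      # write back only if the update bit is set
                self.backing_store[old_tag] = value
                self.writebacks += 1
        self.lines[tag] = [self.backing_store.get(tag, 0), False]

    def read(self, tag):
        self._load(tag)
        return self.lines[tag][0]

    def write(self, tag, value):
        self._load(tag)
        self.lines[tag] = [value, True]      # update the cache only and set the update bit


memory = {1: 10, 2: 20, 3: 30}
cache_set = TwoWaySet(memory)
cache_set.write(1, 11)    # main memory still holds 10 for tag 1
cache_set.read(2)
cache_set.read(1)         # tag 2 is now the least recently used
stale = memory[1]
cache_set.read(3)         # evicts tag 2 (not updated): no write to memory
cache_set.read(2)         # evicts tag 1 (updated): written back
print(stale, memory[1], cache_set.writebacks)  # 10 11 1
```

The value `stale` shows the window in which memory and cache disagree, which is why I/O has to go through the cache under write back.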
