Beruflich Dokumente

Kultur Dokumente

Cache Nptel

Hochgeladen von

janeprice0 Bewertungen0% fanden dieses Dokument nützlich (0 Abstimmungen)

94 Ansichten3 SeitenThis course covers program optimization techniques for multi-core architectures. It discusses issues with writing code for multi-core processors and techniques for developing software for these architectures. Topics include introduction to parallel computers and multi-core architectures, shared memory programming, chip multiprocessors, program optimization, operating system support, and parallel programming tools like OpenMP. The course aims to help students optimize programs to take advantage of multi-core hardware.

Originalbeschreibung:

Cache Nptel

Copyright

© © All Rights Reserved

Verfügbare Formate

PDF, TXT oder online auf Scribd lesen

Dieses Dokument teilen

Dokument teilen oder einbetten

Stufen Sie dieses Dokument als nützlich ein?

Sind diese Inhalte unangemessen?

Dieses Dokument meldenThis course covers program optimization techniques for multi-core architectures. It discusses issues with writing code for multi-core processors and techniques for developing software for these architectures. Topics include introduction to parallel computers and multi-core architectures, shared memory programming, chip multiprocessors, program optimization, operating system support, and parallel programming tools like OpenMP. The course aims to help students optimize programs to take advantage of multi-core hardware.

Copyright:

© All Rights Reserved

Verfügbare Formate

Als PDF, TXT herunterladen oder online auf Scribd lesen

0 Bewertungen0% fanden dieses Dokument nützlich (0 Abstimmungen)

94 Ansichten3 SeitenCache Nptel

Hochgeladen von

janepriceThis course covers program optimization techniques for multi-core architectures. It discusses issues with writing code for multi-core processors and techniques for developing software for these architectures. Topics include introduction to parallel computers and multi-core architectures, shared memory programming, chip multiprocessors, program optimization, operating system support, and parallel programming tools like OpenMP. The course aims to help students optimize programs to take advantage of multi-core hardware.

Copyright:

© All Rights Reserved

Verfügbare Formate

Als PDF, TXT herunterladen oder online auf Scribd lesen

Sie sind auf Seite 1von 3

NPTEL Syllabus

Program Optimization for Multi-core

Architectures - Web course

COURSE OUTLINE

This is an advanced undergraduate/postgraduate course on compiler

optimization for multi-core architectures. The course will cover the following

broad topics.

What are multi-core architectures

Issues involved in writing code for the multi-core architectures

How to develop software for these architectures

What are the program optimization techniques

How to build some of these techniques in compilers

OpenMP and parallel programming libraries

Contents:

Introduction to parallel computers: Instruction Level Parallelism (ILP) vs. Thread

Level Parallelism (TLP); performance issues: brief introduction to cache

hierarchy and communication latency.

Shared memory multiprocessors: general architecture and the problem of cache

coherence; synchronization primitives: atomic primitives; locks: TTS, tickets,

array; barriers: central and tree; performance implications in shared memory

programs.

Chip multiprocessors: why CMP (Moore's law, wire delay) ; shared L2 vs. tiled

CMP; core complexity; power/performance; snoopy coherence: invalidate vs.

update, MSI, MESI, MOESI, MOSI;memory consistency models: SC; chip

multiprocessor case studies: Intel Montecito and dual core Pentium 4, IBM

power4, Sun Niagara.

Introduction to program optimization: overview of parallelism, shared memory

programming; introduction to OpenMP; data flow analysis, pointer analysis, alias

analysis, data dependence analysis, solving data dependence equations

(integer linear programming problem); loop optimizations; memory hierarchy

issues in code optimization.

Operating system issues for multiprocessing: need for pre-emptive OS,

scheduling techniques: usual OS scheduling techniques, threads, distributed

scheduler, multiprocessor scheduling , gang scheduling; communication

between processes, message boxes, shared memory; sharing issues and

synchronization, sharing memory and other structures.

Sharing I/O devices, distributed semaphores, monitors spin locks,

implementation techniques for multi-cores; case studies from applications: digital

signal processing, image processing, speech processing.

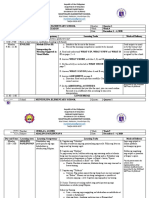

COURSE DETAIL

S.No Topics

1 Introduction to multi-core architectures

NPTEL

http://nptel.iitm.ac.in

Computer Science

and Engineering

Pre-requisites:

Undergraduate computer organization

and architecture, undergraduate

compilers, undergraduate operating

systems.

Coordinators:

Dr. Mainak Chaudhuri

Department of Computer Science and

EngineeringIIT Kanpur

Prof. Rajat Moona

Department of Computer Science and

EngineeringIIT Kanpur

Prof. Sanjeev K Aggarwal

Department of Computer Science and

EngineeringIIT Kanpur

2 Virtual memory and caches

3 Introduction to parallel programming

4 Cache coherence and memory consistency models

5 Hardware support for synchronization

6 Case studies of chip-multiprocessor

7 Introduction to program optimization

8 Control-flow analysis

9 Data-flow analysis

10 Compilers for high-performance architectures

11 Data dependence analysis

12 Loop optimizations

13 CPU scheduling

14 OS support for synchronization

15 Multi processor scheduling

16 Security issues

17 A tutorial on OpenMP

18 A tutorial on Intel threading tools

References:

1. J. L. Hennessy and D. A. Patterson. Computer Architecture: A Quantitative

Approach. Morgan Kaufmann publishers.

2. D. E. Culler, J. P. Singh, with A. Gupta. Parallel Computer Architecture: A

Hardware/Software Approach. Morgan Kaufmann publishers.

3. Steven S. Muchnick. Advanced Compiler Design and Implementation.

Morgan Kaufmann publishers.

4. Wolfe. Optimizing Supercompilers for Supercomputers. Addison-Wesley

publishers.

5. Allen and Kennedy. Optimizing Compilers for Modern Architectures.

Morgan Kaufmann publishers.

6. A. S. Tanenbaum. Distributed Operating Systems. Prentice Hall.

7. Coulouris, Dollimore, and Kindberg. Distributed Systems Concept and

Design. Addison- Wesley publishers.

8. Silberschatz, Galvin, and Gagne. Operating Systems Principles. Addison-

Wesley publishers.

A joint venture by IISc and IITs, funded by MHRD, Govt of India http://nptel.iitm.ac.in

Das könnte Ihnen auch gefallen

- Cse314 Advanced-computer-Architecture TH 1.10 Ac26Dokument2 SeitenCse314 Advanced-computer-Architecture TH 1.10 Ac26netgalaxy2010Noch keine Bewertungen

- 1.6 Final Thoughts: 1 Parallel Programming Models 49Dokument5 Seiten1.6 Final Thoughts: 1 Parallel Programming Models 49latinwolfNoch keine Bewertungen

- 2-INTRODUCTION TO PDC - MOTIVATION - KEY CONCEPTS-03-Dec-2019Material - I - 03-Dec-2019 - Module - 1 PDFDokument63 Seiten2-INTRODUCTION TO PDC - MOTIVATION - KEY CONCEPTS-03-Dec-2019Material - I - 03-Dec-2019 - Module - 1 PDFANTHONY NIKHIL REDDYNoch keine Bewertungen

- Comp422 534 2020 Lecture1 IntroductionDokument49 SeitenComp422 534 2020 Lecture1 IntroductionSadia MughalNoch keine Bewertungen

- High Performance Parallelism Pearls Volume One: Multicore and Many-core Programming ApproachesVon EverandHigh Performance Parallelism Pearls Volume One: Multicore and Many-core Programming ApproachesNoch keine Bewertungen

- Syllabus CS-0000 V Sem All SubjectDokument11 SeitenSyllabus CS-0000 V Sem All Subject12ashivangtiwariNoch keine Bewertungen

- CSE5006 Multicore-Architectures ETH 1 AC41Dokument9 SeitenCSE5006 Multicore-Architectures ETH 1 AC41;(Noch keine Bewertungen

- Cse2001 Computer-Architecture-And-Organization TH 1.0 37 Cse2001Dokument2 SeitenCse2001 Computer-Architecture-And-Organization TH 1.0 37 Cse2001devipriyaNoch keine Bewertungen

- ACA DefinitealgortihmDokument3 SeitenACA DefinitealgortihmRoxane holNoch keine Bewertungen

- 301OperatingsystemConcepts PDFDokument2 Seiten301OperatingsystemConcepts PDFsubhash221103Noch keine Bewertungen

- Comp422 2011 Lecture1 IntroductionDokument50 SeitenComp422 2011 Lecture1 IntroductionaskbilladdmicrosoftNoch keine Bewertungen

- Multicore Software Development Techniques: Applications, Tips, and TricksVon EverandMulticore Software Development Techniques: Applications, Tips, and TricksBewertung: 2.5 von 5 Sternen2.5/5 (2)

- Introduction To Parallel ComputingDokument30 SeitenIntroduction To Parallel ComputingAldo Ndun AveiroNoch keine Bewertungen

- Parallel and Distributed ComputingDokument10 SeitenParallel and Distributed ComputingRaunak Sharma50% (2)

- The Landscape of Parallel Computing ResearchDokument56 SeitenThe Landscape of Parallel Computing ResearchFeharaNoch keine Bewertungen

- Advanced Computer ArchitectureDokument2 SeitenAdvanced Computer Architecturesatraj5Noch keine Bewertungen

- Mcse 04 PDFDokument24 SeitenMcse 04 PDFJetesh DevgunNoch keine Bewertungen

- M.Tech (MVD)Dokument2 SeitenM.Tech (MVD)rishi tejuNoch keine Bewertungen

- Comp Arch Syllabus 6-29-2017Dokument4 SeitenComp Arch Syllabus 6-29-2017Karthikeya MamillapalliNoch keine Bewertungen

- Programming Massively Parallel Processors: A Hands-on ApproachVon EverandProgramming Massively Parallel Processors: A Hands-on ApproachNoch keine Bewertungen

- Scalable Parallel ComputingDokument11 SeitenScalable Parallel ComputingKala VishnuNoch keine Bewertungen

- Rahul Bansal 360 Parallel Computing FileDokument67 SeitenRahul Bansal 360 Parallel Computing FileRanjeet SinghNoch keine Bewertungen

- Introduction To Parallel ComputingDokument34 SeitenIntroduction To Parallel ComputingJOna Lyne0% (1)

- Flinkcl: An Opencl-Based In-Memory Computing Architecture On Heterogeneous Cpu-Gpu Clusters For Big Data AbstractDokument3 SeitenFlinkcl: An Opencl-Based In-Memory Computing Architecture On Heterogeneous Cpu-Gpu Clusters For Big Data AbstractBaranishankarNoch keine Bewertungen

- Berkeley ViewDokument54 SeitenBerkeley Viewshams43Noch keine Bewertungen

- High Performance ComputingDokument164 SeitenHigh Performance Computingtpabbas100% (2)

- Stream Processing in General-Purpose ProcessorsDokument1 SeiteStream Processing in General-Purpose Processorszalmighty78Noch keine Bewertungen

- LDokument3 SeitenLNarendra KumarNoch keine Bewertungen

- A Survey of Parallel Programming Models and Tools in The Multi and Many-Core EraDokument18 SeitenA Survey of Parallel Programming Models and Tools in The Multi and Many-Core Eraramon91Noch keine Bewertungen

- LLNL Introduction To Parallel ComputingDokument39 SeitenLLNL Introduction To Parallel ComputingLogeswari GovindarajuNoch keine Bewertungen

- Week 5 - The Impact of Multi-Core Computing On Computational OptimizationDokument11 SeitenWeek 5 - The Impact of Multi-Core Computing On Computational OptimizationGame AccountNoch keine Bewertungen

- Parallel Algorithm and ProgrammingDokument4 SeitenParallel Algorithm and ProgrammingMahmud MankoNoch keine Bewertungen

- Understanding The Impact of Multi-Core Architecture in Cluster Computing: A Case Study With Intel Dual-Core SystemDokument8 SeitenUnderstanding The Impact of Multi-Core Architecture in Cluster Computing: A Case Study With Intel Dual-Core SystemhfarrukhnNoch keine Bewertungen

- DSMP WhitepaperDokument10 SeitenDSMP WhitepaperaschecastilloNoch keine Bewertungen

- Operating System SyllabusDokument9 SeitenOperating System SyllabusAnuraj SrivastavaNoch keine Bewertungen

- Parallel Computing - Major Elective - IIIDokument3 SeitenParallel Computing - Major Elective - IIImuninder ITNoch keine Bewertungen

- Complete Lessonplan Aca 12 UnkiDokument19 SeitenComplete Lessonplan Aca 12 UnkiSantosh DewarNoch keine Bewertungen

- Lecture1 Introduction PDFDokument43 SeitenLecture1 Introduction PDFDeepesh MeenaNoch keine Bewertungen

- Parallel and Distributed Computing - Zhang Zhiguo.2009w-1Dokument9 SeitenParallel and Distributed Computing - Zhang Zhiguo.2009w-1feliugarciaNoch keine Bewertungen

- Semester - 8 GTU SyllabusDokument9 SeitenSemester - 8 GTU SyllabusPanchal Dhaval MNoch keine Bewertungen

- 3rd CSE IT New Syllabus 2019 20Dokument19 Seiten3rd CSE IT New Syllabus 2019 20Harsh Vardhan HBTUNoch keine Bewertungen

- Print OutDokument11 SeitenPrint OutKamlesh SilagNoch keine Bewertungen

- Basics of Parallel Programming: Unit-1Dokument79 SeitenBasics of Parallel Programming: Unit-1jai shree krishnaNoch keine Bewertungen

- ParallelDokument4 SeitenParallelShanntha JoshittaNoch keine Bewertungen

- 2009, Multi-Core Programming For Medical Imaging PDFDokument163 Seiten2009, Multi-Core Programming For Medical Imaging PDFAnatoli KrasilnikovNoch keine Bewertungen

- Research Paper Computer Architecture PDFDokument8 SeitenResearch Paper Computer Architecture PDFcammtpw6100% (1)

- Introduction To Parallel Co...Dokument44 SeitenIntroduction To Parallel Co...ZeiTwigNoch keine Bewertungen

- Embedded System DesignDokument2 SeitenEmbedded System DesignspveceNoch keine Bewertungen

- Node Node Node Node: 1 Parallel Programming Models 5Dokument5 SeitenNode Node Node Node: 1 Parallel Programming Models 5latinwolfNoch keine Bewertungen

- Computer Organization and Architecture: Kau Cpcs 214 2008Dokument1 SeiteComputer Organization and Architecture: Kau Cpcs 214 2008Arghyadeep SarkarNoch keine Bewertungen

- SylabiiDokument6 SeitenSylabiiashit kumarNoch keine Bewertungen

- Achieving High Performance ComputingDokument58 SeitenAchieving High Performance ComputingIshamNoch keine Bewertungen

- Subject Code Subject Name Credits: Overview of Computer Architecture & OrganizationDokument4 SeitenSubject Code Subject Name Credits: Overview of Computer Architecture & Organizationanon_71491248Noch keine Bewertungen

- Te ItDokument20 SeitenTe ItameydoshiNoch keine Bewertungen

- Rubio Campillo2018Dokument3 SeitenRubio Campillo2018AimanNoch keine Bewertungen

- Boosting Single-Thread Performance in Multi-Core Systems Through Fine-Grain Multi-ThreadingDokument10 SeitenBoosting Single-Thread Performance in Multi-Core Systems Through Fine-Grain Multi-ThreadingshahpinkalNoch keine Bewertungen

- GSM Door Control ArduinoDokument20 SeitenGSM Door Control ArduinojanepriceNoch keine Bewertungen

- BSNL Je PDFDokument39 SeitenBSNL Je PDFjanepriceNoch keine Bewertungen

- Electronics and Communication Engineering 5th Feb 2017 Session1 180 100Dokument23 SeitenElectronics and Communication Engineering 5th Feb 2017 Session1 180 100Devendra ChauhanNoch keine Bewertungen

- ESP8266 Wi-Fi DatasheetDokument31 SeitenESP8266 Wi-Fi DatasheetMert Solkıran100% (2)

- MQ 6Dokument2 SeitenMQ 6api-3850017Noch keine Bewertungen

- A Survey of Iot Cloud Platforms: SciencedirectDokument12 SeitenA Survey of Iot Cloud Platforms: SciencedirectjanepriceNoch keine Bewertungen

- Big Data AnalyticsDokument31 SeitenBig Data Analyticsjaneprice100% (1)

- Recognition Handwritten Malayalam CharactersDokument7 SeitenRecognition Handwritten Malayalam CharactersjanepriceNoch keine Bewertungen

- MQ 3Dokument2 SeitenMQ 3Farshad yazdiNoch keine Bewertungen

- Zigb Contrld DumpsterDokument5 SeitenZigb Contrld DumpsterjanepriceNoch keine Bewertungen

- SPI ProtocolDokument8 SeitenSPI Protocolpraveenbc100% (2)

- Scalable Elliptic Curve Cryptosystem FPGA Processor For NIST Prime CurvesDokument4 SeitenScalable Elliptic Curve Cryptosystem FPGA Processor For NIST Prime CurvesjanepriceNoch keine Bewertungen

- HC-SR04 Ultrasonic PDFDokument3 SeitenHC-SR04 Ultrasonic PDFjanepriceNoch keine Bewertungen

- Optimizing The Implementation of SEC-DAEC Codes in FPGAsDokument5 SeitenOptimizing The Implementation of SEC-DAEC Codes in FPGAsjanepriceNoch keine Bewertungen

- Core E4Dokument5 SeitenCore E4janepriceNoch keine Bewertungen

- ESP8266 Wi-Fi DatasheetDokument31 SeitenESP8266 Wi-Fi DatasheetMert Solkıran100% (2)

- A Combined SDC-SDF Architecture For Normal I/O Pipelined Radix-2 FFTDokument5 SeitenA Combined SDC-SDF Architecture For Normal I/O Pipelined Radix-2 FFTjanepriceNoch keine Bewertungen

- I2c RTCDokument4 SeitenI2c RTCjanepriceNoch keine Bewertungen

- Vector Quantization TechniquesDokument48 SeitenVector Quantization Techniqueschelikanikeerthana_2Noch keine Bewertungen

- 2015 LDPCDokument11 Seiten2015 LDPCjanepriceNoch keine Bewertungen

- FIFO NoCDokument7 SeitenFIFO NoCjanepriceNoch keine Bewertungen

- MQ 3Dokument2 SeitenMQ 3Farshad yazdiNoch keine Bewertungen

- Design of Priority Encoding Based Reversible ComparatorsDokument4 SeitenDesign of Priority Encoding Based Reversible ComparatorsjanepriceNoch keine Bewertungen

- HT9170B/HT9170D DTMF Receiver: FeaturesDokument14 SeitenHT9170B/HT9170D DTMF Receiver: FeaturesjanepriceNoch keine Bewertungen

- Ultrasonic Ranging Module HC - SR04: Product FeaturesDokument3 SeitenUltrasonic Ranging Module HC - SR04: Product FeaturesjanepriceNoch keine Bewertungen

- A Usb 2.0 C Processo Ontroller For An ARM7 or Implemented in FPG 7TDM-S GADokument4 SeitenA Usb 2.0 C Processo Ontroller For An ARM7 or Implemented in FPG 7TDM-S GAjanepriceNoch keine Bewertungen

- Ieee-Concurrent Error Detection of EncoderDokument9 SeitenIeee-Concurrent Error Detection of EncoderjanepriceNoch keine Bewertungen

- Lab 5Dokument17 SeitenLab 5amreshjha22Noch keine Bewertungen

- Lab 5Dokument17 SeitenLab 5amreshjha22Noch keine Bewertungen

- Cedric VillaniDokument18 SeitenCedric VillaniJe Suis TuNoch keine Bewertungen

- USMLE StepDokument1 SeiteUSMLE StepAscend KannanNoch keine Bewertungen

- Government of The Punjab Primary & Secondary Healthcare DepartmentDokument2 SeitenGovernment of The Punjab Primary & Secondary Healthcare DepartmentzulnorainaliNoch keine Bewertungen

- Nationalism Research PaperDokument28 SeitenNationalism Research PaperCamille CatorNoch keine Bewertungen

- Butuan Doctors' College: Syllabus in Art AppreciationDokument12 SeitenButuan Doctors' College: Syllabus in Art AppreciationMark Jonh Polia-Abonates GotemNoch keine Bewertungen

- A Project Report ON A Study On "Effectiveness of Social Media Marketing Towards Unschool in Growing Sales"Dokument55 SeitenA Project Report ON A Study On "Effectiveness of Social Media Marketing Towards Unschool in Growing Sales"Je Lamare100% (3)

- Guidelines For Radio ProgramDokument4 SeitenGuidelines For Radio ProgramTirtharaj DhunganaNoch keine Bewertungen

- Special Report: Survey of Ph.D. Programs in ChemistryDokument6 SeitenSpecial Report: Survey of Ph.D. Programs in ChemistryPablo de TarsoNoch keine Bewertungen

- Theoretical BackgroundDokument7 SeitenTheoretical BackgroundRetarded EditsNoch keine Bewertungen

- Learning Action Plan: Advancing Basic Education in The PhilippinesDokument3 SeitenLearning Action Plan: Advancing Basic Education in The PhilippinesSalvador Morales Obusan100% (5)

- Math 9 Q1 M12Dokument14 SeitenMath 9 Q1 M12Ainee Grace DolleteNoch keine Bewertungen

- UntitledDokument593 SeitenUntitledSuzan ShanNoch keine Bewertungen

- CV HelinDokument4 SeitenCV HelinAchille Tatioti FoyemNoch keine Bewertungen

- Lesson Plan - My IndiaDokument7 SeitenLesson Plan - My Indiaapi-354866005100% (2)

- First Day Lesson Plan - 4709Dokument3 SeitenFirst Day Lesson Plan - 4709api-236604386Noch keine Bewertungen

- LIS 510 Reflection PaperDokument5 SeitenLIS 510 Reflection PaperrileyyelirNoch keine Bewertungen

- Tieng Anh 6 Global Success Unit 6 Skills 2Dokument4 SeitenTieng Anh 6 Global Success Unit 6 Skills 2Phương Thảo ĐặngNoch keine Bewertungen

- Administrative Law Syllabus.2nd Draft - Morant 2020 (10) DfsDokument8 SeitenAdministrative Law Syllabus.2nd Draft - Morant 2020 (10) Dfsjonas tNoch keine Bewertungen

- HUM01-Philosophy With LogicDokument4 SeitenHUM01-Philosophy With LogicJulioRamilloAureadaMercurioNoch keine Bewertungen

- Letter of Intent HEtEHE gRAHAMDokument3 SeitenLetter of Intent HEtEHE gRAHAMDan FredNoch keine Bewertungen

- So v. Republic (2007)Dokument17 SeitenSo v. Republic (2007)enggNoch keine Bewertungen

- Non-Government Teachers' Registration & Certification Authority (NTRCA)Dokument2 SeitenNon-Government Teachers' Registration & Certification Authority (NTRCA)Usa 2021Noch keine Bewertungen

- Colorado Environmental Education Master PlanDokument31 SeitenColorado Environmental Education Master Planapi-201129963Noch keine Bewertungen

- Higher Studies in USA (MS-PHD)Dokument32 SeitenHigher Studies in USA (MS-PHD)Graphic DesignerNoch keine Bewertungen

- 6 DunitreadingassessmentDokument14 Seiten6 Dunitreadingassessmentapi-377784643Noch keine Bewertungen

- Anantrao Pawar College of Achitecture, Pune.: Analysis of Courtyard Space in Architectural InstituteDokument8 SeitenAnantrao Pawar College of Achitecture, Pune.: Analysis of Courtyard Space in Architectural InstituteJayant Dhumal PatilNoch keine Bewertungen

- WEEEKLY TEST IN MUSIC For Blended and Modular Class 2020 2021Dokument3 SeitenWEEEKLY TEST IN MUSIC For Blended and Modular Class 2020 2021QwertyNoch keine Bewertungen

- Rizal Street, Poblacion, Muntinlupa City Telefax:8828-8037Dokument14 SeitenRizal Street, Poblacion, Muntinlupa City Telefax:8828-8037Marilou KimayongNoch keine Bewertungen

- MPH@GW CurriculumDokument8 SeitenMPH@GW CurriculumHenry SeamanNoch keine Bewertungen

- Josaa Iit MandiDokument1 SeiteJosaa Iit MandiRevant SinghNoch keine Bewertungen