
USING MULTIPLE CHOICE QUESTION TESTS

IN THE SCHOOL OF GEOGRAPHY

A Report by the MCQ Working Party

[Jim Hogg (Chairman), Alan Grainger, and Joe Holden]

TABLE OF CONTENTS

1. INTRODUCTION

2. TOWARDS A NEW FRAMEWORK FOR LEARNING ASSESSMENT

2.1 Catalysts for Change

2.2 Principles of a New Framework for Learning Assessment

2.3 Implementing the New Framework

2.4 How to Test for Different Learning Outcomes Using MCQs

2.5 Interactions Between Module Design and Assessment Design

2.6 Recommendations to the School Learning and Teaching Committee

3. A CODE OF PRACTICE FOR USING MULTIPLE CHOICE QUESTION TESTS

3.1 General Aims

3.2 Pedagogical Aims

3.3 A Framework for the Use of MCQ Tests in Geography

3.4 Obligations to Students Prior to the Test

3.5 Design of Test Question Papers

3.6 Design of Questions

3.7 Procedures for Tests

3.8 Processing of MCQ Cards

3.9 Feedback to Students

3.10 Evaluation and Moderation

3.11 Software for Assessing MCQs

3.12 Provision of Sample MCQs for Each Module

3.13 Tapping Into Existing Banks of MCQs for Ideas

3.14 Sharing Best Practice in the Use of MCQs in Geography

3.15 Recommendations to the School Learning and Teaching Committee

1. INTRODUCTION

Over the last six months the School has been rethinking its whole approach to teaching, in order to rationalize programmes within a framework that meets universal benchmarks, and in anticipation of a continuing rise in student numbers. The changes agreed so far will be accompanied by modifications to the forms and timing of the formative and summative assessment with which we monitor the development of student capabilities. Significant changes in student learning are also inevitable, though we have not yet discussed these in detail, and this too will affect our assessment methods. If we are concerned enough about rising student numbers to overhaul our approach to teaching, then we must surely give careful consideration to the use of Multiple Choice Question (MCQ) tools, which can cut the time needed to mark tests for large classes and provide assessment that is both targeted and broad in coverage.

However, there is far more to the debate about when and how to use MCQ tools than saving marking time. Until now we have not paid much attention to identifying which forms of assessment are appropriate for measuring the learning outcomes of particular modules and levels. One reason for this is that we have not specified those learning outcomes very precisely either. If we do not do this, how can we possibly know which forms of assessment are most appropriate? It does not make sense to continue along a path determined only by tradition and organizational inertia. It is just as wrong to choose traditional assessment tools, such as essay exams, by default as it is to choose MCQ assessment merely to save time. We seem to have become accustomed to the fact that most students at Level 1 will inevitably achieve low grades, instead of considering whether the fault lies not with the students but with our assessment systems. What we should be aiming at is a more rational approach to the design of learning and assessment, albeit one that allows for behavioural factors too. This should lead to the introduction of an appropriate assessment regime for each level and module, using an integrated set of assessment tools best suited to monitoring all anticipated learning outcomes. MCQ tools have an important role to play in such a regime. But it is not only their use that needs to be justified: the same applies to all forms of assessment for every module.

The report has two main parts. The first (Section 2) proposes a comprehensive Framework for Learning Assessment in general and identifies the place of MCQ tests within it. The second (Section 3) is a Code of Practice for designing and implementing MCQ tests within this framework.

2. TOWARDS A NEW FRAMEWORK FOR LEARNING ASSESSMENT

As professional educators, we all try, when delivering our modules, to inculcate in our students a knowledge and understanding of specific aspects of our chosen subject and to promote their acquisition of generic academic and transferable skills. Assessment is essential to monitor the development of these two main types of capability, and Multiple Choice Question (MCQ) tests have an important role to play in this. Unfortunately, there is still widespread misunderstanding about what they can and cannot offer. This is often linked to a tendency to favour traditional assessment methods, such as essay exams, and a lack of appreciation of what is to be assessed and the best way to do this. This part of the report argues that, instead of judging the use of MCQs against essay exams as a subjectively chosen gold standard, we should adopt a comprehensive assessment framework that can be applied to all modules, and for each module choose the set of assessment tools that is most appropriate within this framework.

2.1 Catalysts for Change

2.1.1 Moves Toward More Precise and Objective Assessment

It is difficult to overstate the tremendous changes that have taken place in the last ten years in the required standards for module descriptions. We are now required to specify in advance the objectives of the modules that we offer, and the particular academic and transferable skills that students will acquire. This has not yet been matched, however, by a requirement to make our assessment methods more precise, so that we can monitor the extent to which these capabilities are actually acquired. This situation cannot last forever, so we would be wise to anticipate future demands for more precise assessment.

2.1.2 A New Focus on Progression

Another feature of the learning process that will become increasingly important is the ability to identify specific trends in the progression of student learning. This has three main parts.

1. Ensuring that a sequence of modules in a given subject strand shows a clear progression in sophistication from Level 1 to Level 3. The School has already taken steps in this direction by clearly differentiating between our expectations of what students should achieve in each year: 'exploration and inspiration' in Level 1, 'analysis and criticism' in Level 2, and 'creativity and scholarship' in Level 3. We now need to choose assessment tools to match these expectations.

2. Monitoring the progression of individual students as they acquire an increasingly sophisticated set of academic and transferable skills in moving from Level 1 to Level 3. This is an entirely understandable requirement in its own right, but it assumes added political significance in the light of government pressure to widen access to higher education and to increase the value added to student performance. Suitable assessment tools will be needed for this purpose as well.

3. Monitoring progression within a module, as a student gains more confidence in acquiring and applying the skills, concepts and tools that are taught in it. Using formative assessment tools while a module is under way can provide valuable feedback to both students and staff. The results of objective formative tests can also be a valuable guide for evaluating the reliability of summative assessments, such as essay examinations, which are marked more subjectively.

2.1.3 Monitoring Long Term Trends in Teaching and Learning Quality

The School may also come under pressure from the University or external bodies to adopt a more sophisticated approach to monitoring trends in the quality of its overall performance in teaching and learning. The current focus is on ensuring that we have adequate systems and processes for quality control and appropriate information records to support this. Yet these requirements, however onerous, cover only a small fraction of the factors that determine the quality of our performance. In principle, trends in quality should be apparent in trends in mean or median marks, or in the grade distributions of classes of graduating students. Yet marking regimes have changed over time in response to our own changing expectations (e.g. citing a range of references to the literature was previously seen as essential for the award of a 2.i grade) and to outside advice on how our standards compare with those of other institutions. We surely need more objective measures that are unaffected by subjective policy changes and provide more reliable indicators of long-term trends in the quality of our teaching. Objective MCQ test results have a key role to play in charting these long-term trends.

2.1.4 Past Responses to the Challenges Posed by Large Classes

Over the years, the dramatic growth in class sizes has forced several of us to change our preconceived notions about MCQs and to recognize that they are a highly efficient and pragmatic way to assess learning in large classes of 200 to 300 students or more, for which traditional essay exams are impractical. A number of approaches have been adopted:

1. Some of us have adopted MCQs not as the result of a rational choice of the most appropriate means of assessment, but simply because they are the method that can examine a large class and produce marks as rapidly as possible within the short time allowed before submission deadlines.

2. Others have looked at the matter in a broader context and decided that written exams are simply too elaborate a mechanism to apply at Level 1, when it is only necessary to divide a class of students into Pass and Fail categories. Both this group of colleagues and the first may have opted to assess 100% of the module mark by an MCQ Exam at Level 1.

3. Another group has taken the view that MCQ Exams or Tests are best used as part of an integrated assessment approach, and so has complemented them with written tests, presentations etc., which assess other capabilities and account for a share of the total module mark.

2.1.5 Adapting Assessment to the New Learning Environment

Our discussions over the last few months have been long and complicated. Inevitably, we have had to give priority to reaching agreement on how to change our teaching, and relatively little attention has been paid to how these innovations in teaching will affect the student learning process. Yet halving the number of lectures used to deliver a similar amount of material will inevitably require not only more independent learning but also changes in the character of the learning process, to ensure that the quality of learning is maintained, if not increased. Two innovations in learning, in particular, will have consequences for assessment:

1. We can expect to see greater use of informal active learning groups, to provide mutual support and stimulation for individual learning and partly to substitute for reductions in group activity in formal workshops. Module convenors could assess informal group work by including individual reports or term papers based on the outputs of group discussions.

2. More emphasis will be placed on providing online support for individual learning, and this may need to be accompanied by online formative assessment. MCQs have proven utility in this regard, and the School must decide whether to use such formative assessment simply to provide informal feedback to students or to let it contribute to the final module mark. Technologies and practices are well established in this area, but the second option raises a number of issues, including the mechanism for delivering marks to School databases and how to prevent plagiarism, which the MCQ Working Party will need to investigate further.

2.2 Principles of a New Framework for Learning Assessment

2.2.1 Integrated and Appropriate Assessment

At the core of a new framework for learning assessment is the need to select an integrated set of appropriate assessment tools. Appropriate assessment involves using the assessment tool best suited to the particular student capability that we wish to measure. For any given module we are likely to need to measure a number of different capabilities, and so we shall need a number of appropriate assessment tools, each of which measures one or more of these capabilities. An integrated assessment regime combines the appropriate assessment tools needed to measure the complete set of capabilities required, so that the strengths of each tool complement those of the others.

2.2.2 A Rational Choice of Assessment Tools

In principle, every module convenor should identify the student capabilities they are trying to enhance, and should then be able to justify rationally the set of tools needed to assess these capabilities. Over-assessment has featured regularly in our discussions over the last few months, and it is a consequence of not choosing appropriate assessment tools rationally. It can occur in two ways:

1. We may assess too many individual pieces of work.

2. We may use an inappropriate assessment tool.

a. The most obvious instance of this is setting an essay exam to test the acquisition, understanding and retention of knowledge, when essays are best suited to assessing more advanced capabilities. This can have a detrimental effect on the overall grade distribution, because the marks we award for essays are inevitably influenced just as much by how students structure their basic analysis and argument as by their display of knowledge. Since the ability to structure analysis and argument is under-developed in Level 1 students, it is no wonder that Level 1 modules which rely heavily on essay exams achieve lower marks than those which use other assessment tools. For example, in 2000-01 the proportions of the classes gaining 2.i grades in two Level 1 modules assessed entirely by MCQ exams were 25% and 15%. In contrast, only 1% of the class taking a module assessed entirely by essay exam gained a 2.i.

b. Another instance is when the composition of a single assessment tool is inappropriate. For example, a Short Answer Question at Level 1 may be graded according to analytical ability as well as the recall of knowledge. Alternatively, an MCQ test may contain too many questions that rely on the recall of specific facts, compared with questions that assess other learning outcomes. A good example is a Level 1 MCQ exam in which only 25% of the questions in the first half tested factual recall, while in the second half 50% of the questions fell into this category. The mean mark for the first half was 58.4, while that for the second half was 41.8.
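The Level 1 example above suggests it is worth checking the composition of an MCQ paper before it is set. A minimal sketch of such a check follows, assuming (hypothetically) that the setter has tagged each question with one of the six Bloom stages described in Section 2.2.5. The function name and the question tags are illustrative, not an existing School tool, and the two halves below are invented so as to reproduce only the recall percentages quoted above.

```python
from collections import Counter

# Bloom stages as numbered in Section 2.2.5 (1 = highest, 6 = lowest).
BLOOM_STAGES = (
    "Evaluation",
    "Synthesis",
    "Analysis",
    "Application",
    "Comprehension",
    "Knowledge Recall",
)


def stage_shares(tags: list[str]) -> dict[str, float]:
    """Return the share of questions tagged with each Bloom stage.

    `tags` holds one (hypothetical) setter-assigned stage per question.
    """
    if not tags:
        raise ValueError("no questions supplied")
    unknown = set(tags) - set(BLOOM_STAGES)
    if unknown:
        raise ValueError(f"unrecognized stage tags: {sorted(unknown)}")
    counts = Counter(tags)
    return {stage: counts[stage] / len(tags) for stage in BLOOM_STAGES}


# Hypothetical 20-question halves of a paper (invented tags).
first_half = ["Knowledge Recall"] * 5 + ["Comprehension"] * 8 + ["Application"] * 7
second_half = ["Knowledge Recall"] * 10 + ["Comprehension"] * 6 + ["Application"] * 4

for name, half in (("first half", first_half), ("second half", second_half)):
    share = stage_shares(half)["Knowledge Recall"]
    print(f"{name}: {share:.0%} recall questions")
```

Run before a paper is finalized, a check like this would flag an uneven balance of recall questions between sections; the tagging itself remains a matter of the setter's judgement.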

2.2.3 Allowing for Behavioural Factors

While a rational choice of assessment tools should be our first priority, it is important to recognize the value of behavioural elements in the learning and teaching process.

1. Students will rarely undertake, or put much effort into, an assignment unless they receive marks for it that contribute towards their final module mark. This in itself is one of the causes of over-assessment, as it can lead to too many pieces of assessed work per module.

2. There is more to learning than sitting in lectures, absorbing the information, and undergoing tests to see how well this has been achieved. Many other things are needed to make the learning process enjoyable and fulfilling, and this can make some redundancy in assessment unavoidable.

3. Students try various strategies to minimize the effort they must exert to obtain a desirable level of marks, e.g. by learning answers to anticipated exam questions in relatively few areas of module content and then adapting them to fit the questions that are actually set. Essay exams are seldom designed to combat this by testing breadth of knowledge across the whole of the module content. The problem can be partially overcome if knowledge of two different areas of a module is needed to answer any one essay exam question, though this is not a perfect solution.

4. Students often do not plan their studies effectively, so they do not gain a proper understanding of the basic concepts and theories presented in a module while teaching is taking place. They delay much of their learning until the revision period and so are ill-prepared for the final exam. They then rely more on regurgitating lecture material than on analysing concepts, evaluating theories etc. Formative MCQ tests during a module can motivate students to learn basic concepts and theories earlier. As a result, they are better prepared for the final summative examination, which tests the full range of their capabilities and not simply their knowledge. Short answer question tests can also be used for this purpose, but marking them is more expensive and time-consuming.

5. Feedback from formative tests within a module can also provide a powerful stimulus for students to enhance their learning and improve their grades. Good results may encourage students either to do even better or to slacken off, but poor grades will motivate students to work harder. Ignorance of one's true level of performance, on the other hand, can only perpetuate learning habits that prevent students from realizing their potential.

2.2.4 Controlling Diversity in Assessment When Choosing Integrated Sets of Tools

If we employ an ideal set of multiple assessment tools, we should not expect the same mark distributions as we get from using just one tool and one piece of work. Instead, mark distributions may tend to be more bunched. There are three reasons for this:

1. Since every student is different and no one is perfect, we should expect most students to do better in one form of assessment than in others. So their best marks will be offset by poorer ones.

2. Low marks in formative assessment conducted early in a module will give some students an incentive to work harder to improve their performance in later assessments.

3. High marks in formative assessment conducted early in a module may give other students an excuse to take it easy in later assessments, so that they do only just enough to pass.

There is no magical solution to this problem. An ideal set of assessment tools will measure individual performance in identified learning outcomes and no more. But a greater diversity of tools and a greater number of assessed pieces of work may lead to more bunching of marks than is desirable.
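The first of these reasons can be made more precise with a standard variance identity. As a simplified sketch, assume (our assumption, not a finding of the Working Party) that a module mark $M$ is the equally weighted mean of $k$ component marks $X_1, \dots, X_k$, each with the same variance $\sigma^2$ across the class and the same correlation $\rho$ between any two components. Then:

$$
\operatorname{Var}(M) = \operatorname{Var}\!\left(\frac{1}{k}\sum_{i=1}^{k} X_i\right)
= \frac{1}{k^2}\left(k\sigma^2 + k(k-1)\rho\,\sigma^2\right)
= \sigma^2\,\frac{1 + (k-1)\rho}{k}.
$$

For $k \ge 2$ and $\rho < 1$ (that is, whenever students do not perform identically on every tool), the factor $(1 + (k-1)\rho)/k$ is below 1, so module marks are spread less widely than the marks from any single component. With $k = 3$ and $\rho = 0.5$, for instance, the variance falls to $2/3$ of $\sigma^2$ and the standard deviation to about 82% of $\sigma$. Reasons 2 and 3, which concern changes in effort during a module, are behavioural and are not captured by this identity.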

Every module convenor should therefore be responsible for choosing a judicious balance of assessment for their module, within limits set by the School Learning and Teaching Committee (SLTC). This should be based on a rational appraisal of the appropriate assessment tools needed to monitor learning outcomes, tempered by a proper allowance for behavioural factors and an awareness of the need to control diversity in assessment. The key point to emphasize is that we are proposing that identifying an integrated set of appropriate assessment tools should be seen as essential for all modules, whether or not MCQ assessment is used.

2.2.5 Bloom's Taxonomy of Learning Outcomes

Bloom's Taxonomy of Learning Outcomes provides a simple conceptual framework for identifying the assessment tools appropriate to particular modules and to particular levels of a degree course. It portrays the development of intellectual skills as a progression from the lowest stage, Knowledge Recall (Stage 6 below), to the highest stage, Evaluation (Stage 1). The definitions below are based on those by Bloom.

1. Evaluation: Judges, criticizes, compares, justifies, concludes, discriminates, supports. Requires the ability to judge the value of given material for a specific purpose and with respect to criteria stated or devised by the student.

2. Synthesis: Combines, creates, formulates, designs, composes, constructs, rearranges, revises. Requires the ability to assemble various elements to create a new whole, in the form of a structured analysis of a question, a plan (such as a research proposal), or a taxonomy.

3. Analysis: Differentiates between parts, diagrams, estimates, separates, infers, orders, sub-divides. Requires the ability to break down material into parts and understand how they are organized.

4. Application: Demonstrates, computes, solves, modifies, arranges, operates, relates. Requires the ability to use learned rules, methods, concepts, laws, theories etc. in new and concrete situations.

5. Comprehension: Classifies, explains, summarizes, converts, predicts, distinguishes between. Requires the ability to understand and/or interpret the meaning of textual or graphical information.

6. Knowledge Recall: Identifies, names, defines, describes, lists, matches, selects, outlines. Requires the recall of previously learned material.

2.2.6 A Progression of Desired Learning Outcomes During Each Degree Programme


The priority learning outcomes for every module should ideally be framed within the general progression of learning outcomes throughout our degree programmes. Our discussions over the last few months have provided a basis for identifying these. For example:

At Level 1, 'The Year of Exploration and Inspiration': Knowledge Recall, Comprehension and Application.

At Level 2, 'The Year of Analysis and Criticism': Knowledge Recall, Comprehension, Application, Analysis (and Evaluation).

At Level 3, 'The Year of Creativity and Scholarship': Knowledge Recall, Comprehension, Application, Analysis, Synthesis and Evaluation.

2.2.7 Matching Assessment Tools to the Progression of Learning Outcomes

It therefore makes sense to devise a general assessment policy for each level that matches the above progression in learning outcomes throughout each degree programme. This will involve:

1. Identifying the assessment tools which are suitable for monitoring desired learning outcomes.

2. Choosing typical proportions of final module marks which can be provided by different assessment tools at particular levels.

3. Specifying appropriate biases in the design of different assessment tools used at particular levels, to match the dominant learning outcomes at those levels.

At the moment such decisions are made more by intuition and tradition than by rational design. Much of what we are doing now may well prove to be justified if subjected to detailed analysis. On the other hand, if some of our assessment is redundant and/or inappropriate, as also seems likely, then we may be spending more time on assessment than we should, and receiving less information on student performance than we could obtain from a more appropriate system.

2.2.8 Promoting Progression of Skills When Designing the Progression of Assessment

If we do change the balance between the assessment tools employed early in a degree programme, then we must ensure that students have adequate skills to cope with more demanding assessment tools at higher levels. This is particularly true in the case of essays. Fortunately, we do give intensive personal tuition on essay-writing skills in tutorials at Levels 1 and 2. So if we were to stop holding essay exams at Level 1, this should not have a detrimental effect on student performance at Levels 2 and 3. The area perhaps most in need of attention in this regard is over-reliance on Short Answer Questions in some Level 2 modules, followed by the expectation that students will make a smooth transition to achieving high marks in essay examinations in Level 3 modules.

2.3 Implementing the New Framework


2.3.1 General Guidelines for Using Particular Assessment Tools at Each Level

1. At Level 1, for which the desired learning outcomes lie at the lower stages of the Bloom Taxonomy, appropriate assessment tools will be those that predominantly measure performance in knowledge recall, comprehension and application, supplemented by tools that assess other transferable skills, such as presentations and posters. Consequently, marks derived from MCQ, Short Answer and Practical Class assessment could account for over half of the total module marks, and such tools can be used for both formative and summative assessment.

2. At Levels 2 and 3, when higher-stage learning outcomes are more important, MCQ tools should be restricted to formative assessment and provide less than 50% of the total module mark. Logically, Short Answer Questions used in summative assessment should also be capped in this way.

3. MCQ tests at all three levels should contain a mixture of different question styles that test learning outcomes at various stages of the Bloom Taxonomy. However, in proceeding from Level 1 to Level 3, there should be an increase in the proportion of questions that test learning outcomes at the middle or high stages of the taxonomy.

4. Short Answer Questions, which are also best suited to assessing knowledge and basic explanatory capabilities, can also be used for summative assessment at Level 1. At higher levels they should be restricted to a set maximum percentage of the total module mark, whether they are used for formative or summative assessment.

5. Essay Questions, which are best suited to assessing higher learning outcomes, should account for the majority of the total module marks at Levels 2 and 3. There is a strong argument for not using them for summative assessment at Level 1, because students' poorly developed skills in structuring arguments constrain their ability to reveal their knowledge, comprehension and application capabilities, and this will limit mean module marks. A more rational approach, albeit a radical one, would be to limit the use of Essay Questions at Level 1 to formative assessment and to allow them to account for less than half of the total module mark. This should help to increase marks in Level 1 modules. However, proper safeguards should be introduced, as suggested above, to ensure a proper progression in essay-writing skills from Level 1 to Level 3.
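Weighting guidelines like these lend themselves to a simple automated check when module assessment schemes are drawn up. The sketch below is hypothetical: the component labels, weights and marks are invented for illustration, and it encodes only the stated rule that at Levels 2 and 3 MCQ tools provide less than 50% of the total module mark. The "set maximum percentage" for Short Answer Questions is not specified in the report, so it is deliberately left out.

```python
from dataclasses import dataclass

# Rule taken from the guidelines: at Levels 2 and 3, MCQ tools should
# provide less than 50% of the total module mark. No MCQ cap is stated for
# Level 1, and the Short Answer cap is unspecified, so neither is encoded.
MCQ_SHARE_LIMIT = 0.5


@dataclass
class Component:
    tool: str      # hypothetical labels, e.g. "MCQ", "Short Answer", "Essay"
    weight: float  # share of the module mark, between 0 and 1
    mark: float    # percentage mark for this component


def module_mark(components: list[Component]) -> float:
    """Weighted module mark; the weights must sum to 1."""
    total = sum(c.weight for c in components)
    if abs(total - 1.0) > 1e-9:
        raise ValueError(f"weights sum to {total}, not 1")
    return sum(c.weight * c.mark for c in components)


def mcq_share_ok(components: list[Component], level: int) -> bool:
    """True if the MCQ share of the module mark respects the guideline."""
    if level == 1:
        return True
    mcq_share = sum(c.weight for c in components if c.tool == "MCQ")
    return mcq_share < MCQ_SHARE_LIMIT


# Hypothetical Level 2 module: formative MCQ tests worth 30%, essays 70%.
level2 = [Component("MCQ", 0.3, 65.0), Component("Essay", 0.7, 58.0)]
print(f"{module_mark(level2):.1f}", mcq_share_ok(level2, level=2))  # 60.1 True
```

The sketch treats the limit as strict, following the wording "less than 50%"; whether a scheme at exactly 50% is acceptable would be for the SLTC to decide.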

2.3.2 Guidelines for Matching Assessment Tools to Learning Outcomes and Other Goals

1. Identify Priority Learning Outcomes

Every module convenor should identify the learning outcomes which are the priority for assessment in a given module. Under the new teaching system all desired learning outcomes should already be listed in the module outline. However, they are still rather general and could benefit from further refinement. Then they need to be ranked in order of priority.

2. Match Tools to Assess Priority Learning Outcomes


An integrated set of appropriate assessment tools should then be selected to monitor priority learning outcomes. Each tool has a different capacity to assess learning outcomes at different stages of the taxonomy (Table 1). Generally, essays are best suited to assessing higher-stage outcomes, while MCQs and Short Answer Questions are more suited to low- to middle-stage outcomes, though MCQs can also assess skills in evaluation and, to a lesser extent, synthesis. Bias against MCQs often results from ignorance of their potential, and from a failure to see that all tools have advantages and disadvantages.

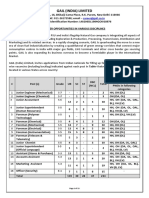

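Table 1. The Suitability of Three Tools for Assessing Different Learning Outcomes (v = yes; x = no; x/v = partly)

| Measures | MCQs | Short Answers | Essays |
|---|---|---|---|
| 1. Evaluation | v | x | v |
| 2. Synthesis | x/v | x/v | v |
| 3. Analysis | v | v | v |
| 4. Application | v | v | v |
| 5. Comprehension | v | v | v |
| 6. Knowledge | v | v | x |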

3. Justify the Choice of Assessment Tools With Reference to Key Goals

In an integrated set of tools, therefore, the merits of one tool are complemented by those of others. MCQs offer fully objective scoring, in contrast to the subjectivity involved in marking essay questions, while short answer questions are of intermediate objectivity (Table 2). Whereas MCQs are prone to blind guessing, essay questions are vulnerable to bluffing, which in practice makes it difficult to award a fail mark to answers of a reasonable length. MCQs and essay questions also complement each other in meeting other goals. MCQs can sample student knowledge and capabilities across a wide range of module material, while essays are best suited to assessing depth of knowledge. MCQs can identify learning errors, while essays are excellent for assessing original thinking (Table 3).

Table 2. The Objectivity of Three Assessment Tools

| | MCQs | Short Answers | Essays |
|---|---|---|---|
| 1. Scoring is objective | v | x/v | x |
| 2. Eliminates bluffing | v | x | x |
| 3. Eliminates blind guessing | x | v | v |

Table 3. The Merits of Three Assessment Tools in Meeting Other Goals

| | MCQs | Short Answers | Essays |
|---|---|---|---|
| 1. Samples broadly | v | x/v | x |
| 2. Tests for depth of knowledge | x | x/v | v |
| 3. Pinpoints learning errors | v | v | x |
| 4. Assesses originality | x | x | v |
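The vulnerability of MCQs to blind guessing noted above can be quantified. A student who guesses blindly on n questions with k options each can expect n/k correct answers. One widely described remedy is "formula scoring", which awards R − W/(k − 1) for R right and W wrong answers and leaves omitted questions unscored, so that a pure guesser's expected score is zero. The sketch below illustrates that arithmetic only: the report does not say whether the School applies any correction for guessing, and the function names and the 40-question paper are hypothetical.

```python
from fractions import Fraction


def expected_chance_score(n_questions: int, n_options: int) -> Fraction:
    """Expected number of correct answers from blind guessing on every question."""
    return Fraction(n_questions, n_options)


def formula_score(right: int, wrong: int, n_options: int) -> Fraction:
    """Classical correction for guessing: R - W / (k - 1).

    Omitted questions are neither rewarded nor penalized. Assumes every
    question has the same number of options (k >= 2).
    """
    if n_options < 2:
        raise ValueError("need at least two options per question")
    return right - Fraction(wrong, n_options - 1)


# Hypothetical 40-question paper with four options per question.
print(expected_chance_score(40, 4))    # a blind guesser expects 10 right
print(formula_score(10, 30, 4))        # ...which formula scoring maps to 0
print(float(formula_score(28, 8, 4)))  # 28 right, 8 wrong, 4 omitted
```

A commonly noted drawback of formula scoring is that risk-averse students may omit questions they could have answered correctly, so any decision to adopt it would need separate consideration.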


4. Refine the Choice of Tools to Assess Other Student Attributes

Tests completed by individual students under examination conditions cannot assess students' abilities in other areas, such as teamwork, presentation skills and graphical skills. The School already employs other forms of assessment to monitor these, such as individual presentations, group presentations and posters. However, these have disadvantages as sole assessment tools. For example, group presentations are inadequate for assessing individual attainment, and the grading of outcomes higher than Knowledge Recall is confounded by varying skill in presentation techniques. Posters are good at assessing knowledge and graphical skills, but not higher-stage outcomes. Because examination essay questions do not allow full individual creativity to blossom, term papers completed in students' own time are also used. But even these may not in practice reveal the true extent of students' creative and synthetic capabilities, for behavioural reasons, e.g. students allocate insufficient time to their preparation. It is also difficult to eliminate the effects of collaboration.

5. Further Refine the Choice of Tools to Fit Within Time Constraints

The ideal set of tools may not be appropriate in view of time constraints, such as those imposed by the need to assess very large classes or to complete marking within a very short period. MCQs do take longer to devise than short answer and essay questions, but they can be reused in later years and take much less time to mark (Table 4). It is possible to save time by using multiple demonstrators to mark formative Short Answer Question tests, yet even if the most detailed crib is supplied, considerable checking by the module convenor is required to prevent inconsistency between markers.

Table 4. Ease of Use of Three Assessment Tools Within Time Constraints

| | MCQs | Short Answers | Essays |
|---|---|---|---|
| 1. Easy to devise | x | v | v |
| 2. Easy to reuse | v | x | x |
| 3. Easy to mark | v | x | x |

6. Further Refine the Choice and Timing of Tools to Allow for Behavioural Factors

It is often necessary to assess more than can be rationally justified in order to allow for behavioural factors. Some of these were listed above. In addition, group work may need to be given a high priority because it increases enjoyment of a module and catalyses the individual learning process.

2.3.3 Selecting an Integrated Set of Appropriate Assessment Tools

MCQ assessment is therefore best viewed as just one of a group of assessment options, each with its

own set of advantages and disadvantages and suitability for assessing different stages of learning


outcomes. An ideal integrated set of appropriate assessment tools will offset the demerits of one tool with the merits of others, fit within time constraints and allow for behavioural factors.

MCQ assessment has the following advantages:

a. It can be set at a range of degrees of difficulty and cognitive levels on the Bloom

Taxonomy.

b. It can test for breadth of knowledge across module content.

c. It provides no opportunities for students to gain marks for material which does not address the question set, or is only loosely related to it, as often happens in essay questions.

d. It serves an important behavioural function by encouraging students to remember,

interpret and use the ideas of others. After taking MCQ tests students should be better prepared for

essay exams which test their ability to organize and integrate concepts and express their own ideas.

The objectivity and breadth of MCQ assessment complements the subjectivity and depth of essay

exams. While essay questions are often treated as the 'gold standard', we tend to forget that the

reason why we need extensive moderation of essay exams is that they are highly prone to

subjectivity in marking, however much time examiners devote to trying to be as objective as

possible.

As long as we recognize the inherent criticisms and limitations of MCQ assessment we can use it

selectively and efficiently in place of, and to complement, essays. We have seen in the last few

months that the School is giving a high priority to reducing the time allocated to teaching and

administration, in order to free more time for research. Similar opportunities are also available to

reduce time spent in assessment. Some colleagues have already taken advantage of this option by

using MCQ methods. There is scope for the wider use of MCQs in the School, but this should be

part of a holistic approach to assessment that does not focus purely on saving time.

2.4 How to Test for Different Learning Outcomes Using MCQs

There are still widely held misconceptions about MCQ assessment: for example, that MCQ tests are only pub quizzes that test knowledge of specific facts, and that they only assess what students don't know, not what they do know. Such statements are rather ironic given that some colleagues still award substantial marks for Level 3 essay exam questions that merely involve the recall of factual information. To prevent further misunderstanding, this section includes examples of how MCQ tools can assess capabilities at all but one of the six stages of learning outcomes in the Bloom Taxonomy.

6. Knowledge Recall

MCQ tests can be used to assess whether a student can recall specific pieces of

information learnt during the course. This is not necessarily a bad thing, because students

cannot demonstrate their ability to satisfy higher stage learning outcomes if they do not

have a good knowledge and understanding of the basic concepts and theories which are

needed for this. It should also be remembered that students actually like to learn pieces of

information, and in Level 1 the expansion of student knowledge is an important desired


learning outcome. So a judicious proportion of knowledge-based questions is justified in a Level 1 MCQ assessment.

a. Identify - choose the correct term, concept, theory, person, organization etc.

b. Define - choose the correct definition or correctly complete a definition.

c. Match - choose the correct sequence of items in List B to match the list of items

in List A.

5. Comprehension

However, even Level 1 MCQ tests should include questions that do not depend on the memory of

specific facts, but instead assess the general understanding of what has been learned during the

module. Comprehension-style questions are highly suitable in this regard.

a. Distinguish between - read a passage and identify which of a list of concepts are/are not

represented there as examples.

b. Distinguish between/explain - choose the correct explanation of a given problem or

phenomenon from a number of alternatives.

c. Summarize - interpret a given chart or map and choose the correct summary

evaluation of its meaning.

d. Convert - using a given map and other information supplied, choose which one

of a list of alternative maps will result from adding this information to the existing spatial

information on the map.

4. Application

Another type of question that does not require knowledge of specific facts is the application question, in which students need only know a specific formula and procedure so they can apply it to a practical example. In most modules formulae and procedures are explained in detail, often with practical examples in the lecture or class, so they should be firmly embedded in the minds of students.

a. Computes - given appropriate statistical or numerical information, apply a

particular method to calculate the correct value of a given term.

b. Relates - relate previously acquired knowledge to a practical example and select the

correct technical term for the phenomenon described.

3. Analysis

Higher stage learning outcomes also build on the basic knowledge and competences of students to

perform sophisticated analysis of theoretical or practical problems.

a. Separate - break down a passage into its component parts and select which of a list of

terms correctly describes a given combination.


b. Differentiate - read a passage which erroneously describes the basic principles of a given theory and identify which one of a list of words must be changed to ensure that the passage offers a correct description.

c. Order - use 4-6 different key statistics for three different countries to identify which

countries are referred to, by ranking them according to previously learned non-specific knowledge.

2. Synthesis

Although MCQ questions are not perfectly suited to this stage they can in principle be used

to test ability in choosing the best sequence of a set of items.

a. Combine/design - choose which one of a list of activities should represent the first stage of a research project. Repeat this for five further questions, each dealing with a subsequent stage.

b. Rearrange - read a list of 7 bullet points which constitute the main ideas conveyed in an essay, and decide which of the following reorderings of these ideas would make the essay more coherent.

1. Evaluation

It is also possible to use MCQ tools to assess the highest of all learning outcomes, evaluation.

a. Judges - read a specimen answer to a given question and evaluate its merits according

to stated criteria, e.g. regarding the level of accuracy of content and the ordering of stated terms.

2.5 Interactions Between Module Design and Assessment Design

Just as the choice of appropriate assessment tools must take account of desired module learning

outcomes, so the selection of module content may also need to take account of the types of

assessment to be employed. This is self-evident when, for example, a lecture is needed to brief

students on the topic chosen for a subsequent workshop, so it should not be unexpected that similar

interactions may be needed at other times. Human geographers, for example, may be less keen than

physical geographers to use MCQ assessment, believing the tool to be less suited to discursive

lectures than to lectures that convey many formulae. But it is not difficult to assess any social

science module effectively with MCQ tools, as long as the lectures are rich in concepts and theories.

The obstacle to good MCQ assessment of social science lectures is not their qualitative approach,

but simply the low density of concepts and prevalence of factual information. So if it is simply not

practical to continue examining a large class by essay exams, it may be necessary to increase the

density of concepts and theories communicated in lectures in order to make them easier to examine

by MCQ tools.

2.6 Recommendations

2.6.1. The Working Party was requested to provide a Code of Practice for using MCQ

assessment tools, but we concluded that it was impossible to consider the use of MCQ assessment in

isolation from other tools. There is scope for the wider use of MCQs in the School, but it should

be part of a more holistic approach to assessment that does not focus purely on saving time.


2.6.2. The School should look more carefully than hitherto at all the forms of assessment

which are used for every module. All assessment should be justified rationally, while allowing for

behavioural factors. It is just as wrong to choose traditional assessment tools, such as essay exams,

by default as it is to choose MCQ assessment merely to save time.

2.6.3. The School should identify general learning outcomes for each level of a degree programme. Within this framework, priority learning outcomes should be identified for each module and linked to specific assessment tools.

2.6.4. Module convenors should be asked to identify an integrated set of assessment tools

which are appropriate to measuring the stated learning outcomes of their modules. MCQ assessment

is just one of a group of assessment options, each with its own set of advantages and disadvantages

and suitability for assessing different stages of learning outcomes. In an ideal integrated set of

appropriate assessment tools the merits and demerits of one tool are complemented and enhanced by

those of others, while assessment is designed to fit within time constraints and allow for behavioural

factors.

2.6.5. Our impending switch to a new teaching system is a suitable moment to re-evaluate our

assessment regime. The School should consider how to adapt its present regime to the needs of the

new learning environment associated with the new teaching system, in particular, the likely switch to

more informal group activity and online support for independent learning.

2.6.6. We would also be wise to anticipate future external demands for the use of more precise assessment tools. Objective MCQ test results have a key role to play in charting, for an external audience, long-term trends in the quality of the School's performance in teaching and learning.

2.6.7. Marks derived from MCQ assessment tools can account for over half of the total mark

of Level 1 modules, for which desired learning outcomes are on the lower stages of the Bloom

Taxonomy, and such tools can be used for both formative and summative assessment. At Levels 2

and 3 MCQ tools should be restricted to formative assessment and provide less than 50% of the total

module mark.

2.6.8. MCQ tests at all three levels should contain a mixture of different question styles that

test learning outcomes at various stages of the Bloom Taxonomy. In proceeding from Level 1 to

Level 3 there should be an increase in the proportion of the questions that test learning outcomes at

middle or high stages of the taxonomy.

2.6.9. We recommend seeking comments on these proposals from members of SLTC, external

examiners, learning and teaching experts within the university, and experts in other universities,

before this report is formally adopted. The aim should be to ensure that assessment frameworks in the School, and the use of MCQ tools within these frameworks, conform with norms within this University and in other universities, in Geography and in other disciplines.

3 A CODE OF PRACTICE FOR USING MULTIPLE CHOICE QUESTION TESTS


Three main concerns originally led the School Learning and Teaching Committee to establish this Working Party to devise a Code of Practice for using MCQ tools in module assessment. The first was that the results obtained from MCQ tests were somehow inferior to those obtained from written tests and exams. We hope that the first part of this report will have allayed this concern by showing the continuity of various assessment tools within the framework provided by the Bloom Taxonomy. The second was that questions in MCQ tests were not set in a rigorous way. The third was that the methods used for automated marking of MCQ cards were flawed. In the second part of our report we focus on the last two of these concerns and show that such problems can be considerably reduced, if not entirely eliminated, if tests are designed and implemented properly.

3.1 General Aims

3.1.1 The School should continue to improve and refine its existing methods for assessing

student performance and to search for innovative methods that will add to these.

3.1.2 Our assessment procedures should be seen by students as being rational, fair, unbiased and, if necessary, open to scrutiny.

3.1.3 We should make effective use of MCQ and other automated methods for student assessment when this is deemed appropriate, effective and efficient, but we must maintain diversity in our methods of assessing students by different criteria.

3.2 Pedagogical Aims

3.2.1 A comprehensive, appropriate and integrated system of assessing students is important

for satisfying the broad pedagogical objectives of the School.

3.2.2 The MCQ test should be seen as part of a comprehensive, appropriate and integrated

system of assessing students.

3.2.3 To provide a framework for defining the role of MCQ tests within such a

comprehensive system of assessment, the School Learning and Teaching Committee (SLTC) should

define pedagogical aims for each level of study. Our proposals for such a Framework are outlined in

Part 1.

3.2.4 A basis for this framework could be Bloom's Taxonomy, which attempts to divide

cognitive objectives into subdivisions ranging from the simplest behaviour to the most complex (see

Part 1).

3.2.5 Since we would expect a gradual progression in knowledge and skills of students from

Levels 1 to 3, the assessment tools used for each year, and the type and complexity of questions,

should reflect this progression.

3.2.6 Progression in cognitive objectives should be reflected in the types of assessment that

we use:

Level 1 Fundamental principles and knowledge. Elementary quantitative techniques.


Level 2 Development and evaluation of skills and engagement with the literature.

Intermediate analysis and evaluation of knowledge. Intermediate quantitative techniques.

Level 3 Critical synthesis, and advanced analysis and evaluation of knowledge to achieve given

objectives. Advanced quantitative techniques.

3.2.7 As our contribution to this exercise we would suggest that:

a. MCQs have the primary functions of assessing: (i) acquired knowledge from lectures,

set texts and other stated resources; (ii) evaluation of theoretical principles, and the ability to use

them in practical applications and analysis; (iii) understanding of and ability to apply quantitative

techniques.

b. Essays have the primary function of assessing knowledge, critical synthesis, analysis

and evaluation in a structured and well-argued written form.

c. Short answer questions are an effective way to assess acquired knowledge and writing

skills.

3.2.8 Within this framework, we consider it to be entirely justified to use MCQ tests for

summative assessment at Level 1, and for formative assessment at Levels 2 and 3. It would seem

entirely logical for SLTC to devise similar guidelines for the use of other assessment tools.

3.3 A Framework for the Use of MCQ Tests in Geography

3.3.1 Any given module in Geography should be able to use a number of different methods

for assessing students, as are deemed appropriate to the learning outcomes of the module (see Part

1). This may include the use of MCQ tests, provided this is consistent with Item 3.2.8.

3.3.2 The goals, objectives, methods, times and percentage mark for assessing each module

should be stated explicitly in the module outline.

3.3.3 MCQ tests can be used in various ways in a module, e.g. as a number of regular short

(15 minute) tests or as 1-2 longer (45 minute) tests. If the latter option is chosen then the likelihood

of a poorer overall performance in the first test can be reduced by the prior use of short practice

tests.

3.4 Obligations to Students Prior to the Test

3.4.1 The course handout for each module should include the proportions of the total mark

provided by each form of assessment, e.g. essay exam, MCQ exam, course essays, MCQ tests,

workshops, presentations etc.

3.4.2 At the start of each module, students should be given a hand-out and a verbal

explanation of procedures for completing an MCQ test.

3.4.3 This handout should include examples of the types of MCQ used in each test, to show

students what they can expect.


3.4.4 Published MCQs should include answers. If appropriate, they may include explanations of why each option is correct or wrong.

3.4.5 It is also good practice to prepare students for MCQ tests by holding very short (e.g. 5

minute) snap tests in the middle of lectures. Students can be given the answers and allowed to mark

their own question sheets. The tests are informal and marks are not collected.

3.5 Design of Test Question Papers

3.5.1 Each MCQ should conform to a high standard for clarity of expression, design, layout

and marking, but academics should be free to explore innovative questions as in any other

examination.

3.5.2 Questions should be checked carefully for errors, omissions, ambiguities and other problems in both the question stem and the five options (one correct answer and four detractors) for answering it. Five options per question is a widely used standard, and we recommend it be adopted unless exceptional circumstances prevent this.

3.5.3 Module convenors are encouraged to request feedback from colleagues on the design and composition of tests. Checking for potential pitfalls is best done by several people rather than just one.

3.5.4 The start of each MCQ Question Paper should include written instructions on how to

complete the computer card satisfactorily (see Section 3.7).

3.5.5 Each MCQ Question Paper should include instructions as to the type of marking used,

e.g. negative marking, no negative marking etc., and preferably also information on the proportion

that the test contributes to the module assessment as a whole. The School should formulate a policy

on negative marking, after receiving expert guidance on the link between this and mark distributions.

3.5.6 Each MCQ Question Paper should be printed on brightly coloured paper. This will

ensure that any other unauthorised paper on desktops is easily recognized. It will also help to prevent

question papers being removed from the room by students.

3.6 Design of Questions

3.6.1 Question stems should be phrased as clearly as possible, leaving no room for ambiguity.

3.6.2 The detractors to each question should all appear equally plausible to someone with a poor knowledge of module content, but only one answer should be unambiguously correct to those who have a good knowledge of module content. To reduce the possibility of students finding the correct answer by guesswork, ensure that all the options are internally consistent and of similar length, and that the position of the correct answer (i.e. a, b, c, etc.) varies.
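Varying the position of the correct answer is easy to do systematically when a paper is prepared on a computer. The following is a minimal Python sketch, not a tool used by the School: it assumes each question is held as the text of its correct answer plus four detractors (all five texts distinct), shuffles them with a seeded random generator so the same paper can be rebuilt, and records which letter becomes the key for the Master Card. The function names and the sample options are hypothetical.

```python
import random

LETTERS = "abcde"  # five options per question


def shuffle_options(correct, detractors, rng):
    """Place the correct answer at a random position among the options.

    Returns the shuffled option texts and the letter of the correct answer.
    Assumes the five option texts are all different.
    """
    options = [correct, *detractors]
    if len(options) != len(LETTERS):
        raise ValueError("expected one correct answer and four detractors")
    rng.shuffle(options)
    key = LETTERS[options.index(correct)]
    return options, key


def key_counts(keys):
    """Count how often each letter is the correct answer across a paper."""
    return {letter: keys.count(letter) for letter in LETTERS}


rng = random.Random(2004)  # fixed seed so the same paper can be regenerated
options, key = shuffle_options(
    "Weathering", ["Erosion", "Deposition", "Transport", "Abrasion"], rng
)
print(key, options)
```

Running key_counts over the keys for a whole paper gives a quick check that no letter is heavily over-used as the correct answer.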


3.6.3 There must be only one correct answer to a question with five options. A student who guesses at random therefore has a 20% chance of selecting the correct answer.
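The 20% figure also shows what a student can expect to gain by pure guesswork, which matters when the School formulates its policy on negative marking (Item 3.5.5). The sketch below assumes one mark for each correct answer and an optional fixed deduction for each wrong answer. A deduction of 1/(k - 1) marks for k options is the standard correction for guessing and makes the expected gain from a random guess zero. It illustrates the arithmetic only and is not a recommendation on policy; the function name is hypothetical.

```python
from fractions import Fraction


def expected_guess_score(n_options, penalty=Fraction(0), mark=1):
    """Expected marks per question for a student guessing uniformly at random.

    A correct answer earns `mark`; a wrong answer loses `penalty`
    (penalty 0 means no negative marking).
    """
    p_correct = Fraction(1, n_options)
    return p_correct * mark - (1 - p_correct) * penalty


# Five options, no negative marking: 1/5 of a mark per question.
print(expected_guess_score(5))                  # 1/5
# A 40-question test answered entirely by guessing.
print(40 * expected_guess_score(5))             # 8
# Deduct 1/(5 - 1) = 1/4 mark per wrong answer: guessing gains nothing on average.
print(expected_guess_score(5, Fraction(1, 4)))  # 0
```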

3.6.4 We should aim to use a variety of different types of MCQ in Geography tests. These should include questions involving the recall of knowledge, interpretation of graphics and images, mathematical calculations, judgement, comparisons, comprehension, and evaluation. Experience suggests that students will perform better in tests that involve a mixture of different types of questions than in tests that are solely reliant on the recall of knowledge. Typical types of questions include, in order of increasing difficulty and ascending order in the Bloom Taxonomy:

6. Knowledge Recall

a. Fact

b. Define a term

5. Comprehension

a. Explain terms in a passage

b. Explain a technique

4. Application

a. Apply a term

b. Apply a theory

c. Apply a technique

d. Calculate

1. Evaluation

a. Evaluate a technique

b. Evaluate a theory

c. Compare terms

3.6.5 Other examples of the major generic types of questions for MCQ tests are given in Part

1.

3.7 Procedures for Tests

3.7.1 Before each test the Module Convenor should ensure that he or she has collected from the School (a) the correct number of MCQ Cards and (b) the School's stock of HB lead pencils (see item 3.7.5). If the test is to be taken during a University Exam Period, the Convenor should check with the Exams Office and/or the School Coordinator that these requirements have been met.

3.7.2 To minimize possibilities for cheating, where a test is to take place during a module, the

Module Convenor should (a) ensure on entering the room that students are well spaced apart, with at

least one empty seat between them; (b) remind students they are under examination conditions and

that talking is not allowed until all the cards and question papers have been handed in at the end of

the test. Other possible strategies, such as having different question papers with different sequences

of the same questions, would be difficult to mark and could be quite confusing for students.


3.7.3 At the start of each test the Module Convenor or Examination Invigilator should read to

the class a standardized set of instructions for completing the test satisfactorily. These are listed

below as Items 3.7.5 to 3.7.9. These instructions, which repeat those printed at the top of the

question paper, should avoid many of the problems associated with card marking.

3.7.4 If the test is to be taken during a University Exam Period then either the Convenor

should attend to read out these instructions, or he or she should ensure that the Invigilator has been

properly briefed on their importance and the need to read them out.

3.7.5 The computer cards must be marked correctly using an HB lead pencil and no other

implement or type of pencil. Otherwise the card reader, which is very sensitive to the density of

graphite on the card, will reject the card. Students without an HB pencil should be invited to borrow

one.

3.7.6 Students should first fill in their University Student Identification Number by filling in

boxes on the card. They should also write their name and number (a) on the top of the card in case

the computer cannot read it; and also (b) on the top of the question paper (to serve as a backup and

to emphasize to students that the question paper must not be taken away afterwards).

3.7.7 Students should fill each box that they select by shading the box with an HB lead pencil.

If they simply put a tick, cross or dot in the box, the card reader will reject the card. The person

responsible for processing the cards then has to shade over the boxes before the card reader will

accept it.

3.7.8 If a student makes a mistake when filling in an answer and attempts to rub out a mark, they should rub out the pencil mark completely and not smudge it over several boxes. The card reader will reject any card that is badly smudged. Students without erasers should put a light diagonal slash through the incorrect selection, so it can be rubbed out later by the person who processes the cards.

3.7.9 To reduce the chance of smudging at the correction stage, students should be advised to

make their first selection by using a light shading, and only make it as dark as possible when they

are sure that this is the selection they really want to retain.

3.7.10 The question paper should be collected immediately after an MCQ test, to avoid it going

into general circulation among students. This is particularly important if an MCQ test is used in a

University Examination.

3.8 Processing of MCQ Cards

3.8.1 The Module Convenor should take full responsibility for understanding and supervising the procedures for processing MCQ cards. The most frequent practical problems involve the computer-marked card reader and the computer software for processing cards. These problems are usually blamed on the card reader, the software or some other feature, but they usually stem from unfamiliarity with them.

3.8.2 The processing of MCQ cards is actually a relatively simple matter to those familiar

with the system. But there are a number of pitfalls which can cause problems and which often lead

colleagues to regard the system as useless. However, with proper training and support the system

can work well in the School, as it does in many other departments of this and other universities.


3.8.3 Before taking the cards for marking by the card reader, the Module Convenor should

check every card to see that the student has followed the correct procedure in the test. There are two

main types of incorrect completion:

a. A student has used a pencil with the wrong density lead. To remedy this the Convenor must darken all completed boxes on the card with an HB pencil, so the card reader will read the card.

b. Ink, biro, felt tip pen or liquid pencil has been used. These cards must be copied onto a new card by the Convenor using an HB lead pencil.

3.8.4 The Module Convenor should produce, using an HB pencil, a Master Card containing

the correct answers to all the MCQs.

3.8.5 Two types of card-marking software are now in general use in the University: Wincards (which is installed on the School's system) and Javacards 2002 (installed on the FLDU system).

3.8.6 To mark cards using the Wincards software:

a. The Convenor should obtain from the Student Information System (Banner) a list of

students registered for a module. The list is usually provided as a Microsoft Excel file. This contains

the names and student identification number of each student. This file must then be transformed

manually and saved in Lotus 1-2-3 (.WKS) file format. The software for processing MCQ cards will

then read this Class File (it will not accept any other file format) and use it to link the cards to

students. The Module Convenor should also enquire as to any other special requirements of the

present version of the card marking software (e.g. that all zeros at the beginning of the Student

Number should be removed).

b. The Master Card is fed into the card reader. A visual display of the Master Card is

shown on screen and this is checked to make sure that the Master Card is correct. As all other cards

are compared to the Master Card, it is essential that the Master Card is correct.

c. The card for each student is then fed into the card reader twice. The first reading is checked against the second reading. If they are the same, then the student's number, name and marks appear in a list on the display. If there is a difference between the first and second reading of a card, an error is signalled showing where it occurs on the card. This error is typically a smudge, a partly filled box or an omitted detail. The person processing the cards then has to make an appropriate correction to the card.
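Steps b and c amount to a simple comparison. The hypothetical Python sketch below models the logic only and is not the Wincards software: it assumes each reading of a card has already been turned into a list holding one answer letter per question (None for a blank box), compares the two readings, and scores the card against the Master Card only when they agree, with one mark per correct answer and no negative marking.

```python
def compare_readings(first, second):
    """Return the (1-based) question numbers where two readings of a card disagree."""
    if len(first) != len(second):
        raise ValueError("readings have different lengths")
    return [q for q, (a, b) in enumerate(zip(first, second), start=1) if a != b]


def score_card(answers, master):
    """Count answers matching the Master Card; blanks (None) score nothing."""
    if len(answers) != len(master):
        raise ValueError("card and Master Card have different lengths")
    return sum(1 for a, m in zip(answers, master) if a is not None and a == m)


def process_card(first, second, master):
    """Score a card only if both readings agree; otherwise report where they differ."""
    differences = compare_readings(first, second)
    if differences:
        return None, differences
    return score_card(first, master), []


master = ["a", "c", "b", "e", "d"]
clean = ["a", "c", "d", None, "d"]
smudged = ["a", "c", "d", "e", "d"]  # question 4 read differently the second time
print(process_card(clean, clean, master))    # (3, [])
print(process_card(clean, smudged, master))  # (None, [4])
```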

3.8.7 To mark cards using the Javacards 2002 software (which will work with any version of

Windows from Windows 95 upwards, including Windows XP):

a. Obtain class lists by

i. Log in to Boddington Common.

ii. Go to the User Directory in the Information Floor of the Boddington Common Gatehouse.

iii. Select Find Users (Advanced).


iv. In the "Search Within Group" list select leeds_uni.registry.subjects.geog.

v. In the "Search by Name or Unique Identifier" list select user name, i.e. GEOGxxxx.

vi. Type * into the Search For box.

vii. Select the XML file radio button.

viii. Click on the Search push button.

ix. Wait for the complete page of results to appear.

x. Use the Save or Save As command in your Web browser to save the file with a name ending in ".xml", not ".html". Save it in the m:carddata directory. Before saving, be sure to click on the window containing the class list, otherwise the correct file will not be saved.

b. Enter details in the set-up dialogue box

i. Select a class list file by using the second Browse push button.

ii. Enter the module code and test identifier (e.g. 2 = Test 2)

iii. Press the OK button.

c. Press the New Master button and pass your Master Card through the card reader. Check

it carefully on the screen.

d. Pass the cards through the reader. Cards only have to be passed through once. But you

must compare the card visually with the image displayed on the screen. If there is only one correct

answer to each question the software automatically assumes that you are not using negative marking.

e. One of the innovations in the new software is that if the card has been misread, or the

card has problems owing to faulty completion, you can use the mouse to edit the card on the screen.

So if the card reader shows as a valid selection a smudged box which the student tried to rub out,

then you can remove this on the screen and you do not need to either use an eraser on the original

card or prepare an entirely new copy of the card in order to process it. This saves a lot of time. As a

check, the full details of the card are stored in a file, so you can see which boxes were manually

removed. You can also use the "Edit comments for student" command to add notes to each card file

in order to make a record of the reason for your edits.

f. The new software does not have an automatic Save function, so you need to save the

results file regularly, e.g. every ten cards, and when you have read the last card.

g. When you have finished reading the cards, the software will automatically generate the following files in the directory:

i. geog.xxxx.xml (class list)

ii. geog.xxxx_2.xml (raw data file)

iii. geog.xxxx.stats2.bxt (contains the Facility Index for each question, which is the

number of correct answers for each question relative to the total number of students in the

group.)

iv. geog.xxxx.stats.csv (contains the Frequency Measure, which shows how many students

answered each question)

v. geog.xxxx.sumy.tsv (results file in tab-separated text form - can be opened in Excel)


h. Copy all these files onto your floppy disk so they can then be copied onto your own computer.

3.8.8 Marks should be processed as soon as possible after the test, and the convenor should then assess the results. This assessment must identify any questions that most students have answered correctly or have been unable to answer correctly. The MCQ test software can assist convenors in this task, as it generates a Facility Index, a Frequency Measure and a Discrimination Index (see Section 3.10.1) for each question. These statistics are available on request from Wincards, but are produced automatically by Javacards 2002 as separate files.
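The Facility Index makes this check straightforward. A minimal Python sketch, assuming the answers for the whole class are available as one list of letters per student (None for an omitted answer) and that the key is taken from the Master Card. The thresholds for "too hard" and "too easy" are illustrative assumptions, not School policy, and the function names and sample data are hypothetical.

```python
def facility_indices(responses, master):
    """Facility Index per question: correct answers / number of students."""
    n_students = len(responses)
    return [
        sum(1 for student in responses if student[q] == key) / n_students
        for q, key in enumerate(master)
    ]


def flag_questions(facility, low=0.2, high=0.9):
    """Return 1-based numbers of questions that look too hard or too easy."""
    too_hard = [q for q, f in enumerate(facility, start=1) if f < low]
    too_easy = [q for q, f in enumerate(facility, start=1) if f > high]
    return too_hard, too_easy


master = ["a", "b", "c"]
responses = [
    ["a", "b", "d"],
    ["a", "c", "d"],
    ["a", "b", None],
    ["a", "b", "e"],
]
facility = facility_indices(responses, master)
print(facility)                  # [1.0, 0.75, 0.0]
print(flag_questions(facility))  # ([3], [1])
```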

3.9 Feedback to Students

3.9.1 We should aim to give students feedback as a matter of routine in all of our MCQ tests.

This converts the MCQ test from a summative to a formative assessment method. Students

generally welcome feedback and more readily accept the outcome if questions and answers are

explained and seen to be reasonable, fair and part of a module.

3.9.2 If it is subsequently found that mistakes have been made in setting questions, then we should admit the error, explain how it came about and inform students that the question will be changed in future. We should also inform students that the question has been withdrawn from the current examination (if this is appropriate), so that no one is disadvantaged. In the same way, questions could be withdrawn if they are subsequently found to have been answered correctly by only a small proportion (e.g. 5%) of the class.
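Identifying candidates for withdrawal, and re-scoring once a question has been withdrawn, is simple arithmetic on the Facility Index. The Python sketch below is illustrative only: the function names are hypothetical, the 5% threshold is just the example given above, and it assumes that each question carries one mark.

```python
def facility_index(correct_count, class_size):
    """Proportion of the class answering a question correctly (0.0 to 1.0)."""
    if class_size <= 0:
        raise ValueError("class_size must be positive")
    return correct_count / class_size


def withdrawal_candidates(correct_counts, class_size, threshold=0.05):
    """Question numbers answered correctly by at most `threshold` of the class.

    correct_counts maps question number -> number of correct answers.
    The 5% default follows the example in the text; it is not a fixed rule.
    """
    return sorted(
        q for q, n in correct_counts.items()
        if facility_index(n, class_size) <= threshold
    )


def rescore(answers, key, withdrawn):
    """Score one student on the questions that remain after withdrawal.

    answers and key map question number -> chosen / correct option.
    Returns (marks, maximum) so the mark can be re-expressed as a percentage.
    """
    remaining = [q for q in key if q not in withdrawn]
    marks = sum(1 for q in remaining if answers.get(q) == key[q])
    return marks, len(remaining)
```

This sketch removes a withdrawn question from both the student's score and the maximum mark; awarding the mark to every student instead would be an equally simple change.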

3.9.3 Posting results on the student notice board is highly recommended (if the examination procedures allow it). Students like to know where they stand, and MCQ tests give them one measure of this. Posting results generates competition and interest, and can lead to improved performance in subsequent MCQ tests. If the results are provisional because they will contribute to a total module mark, remember to post grade labels (e.g. A, B) rather than marks.

3.9.4 Course convenors should aim to return test results to students within two weeks of the test, in accordance with School policy.

3.10 Evaluation and Moderation

3.10.1 When the MCQ cards have been processed, the software can generate useful statistics for each MCQ test. These include:

a. A Facility Index, which is simply the number of correct answers for each question relative to the total number of students in the group. This measures how easy or difficult a question was.

b. A Frequency Measure, which shows how many students answered each question. A high frequency of wrong answers indicates a problem, as does a high frequency of omitted answers.


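c. A Discrimination Index, which indicates how well each question separates students who did well on the test as a whole from those who did poorly. A low or negative value shows that a question confused the stronger students as much as, or more than, the weaker ones.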
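To make the three measures concrete, the following Python sketch computes them from raw answers. It is not the WinCards or JavaCards 2002 algorithm, which we have not inspected. In particular, the Discrimination Index here uses the common upper-group/lower-group method (comparing the top and bottom 27% of the class by total score), which is an assumption; the data layout and the function name are hypothetical.

```python
from collections import Counter


def item_statistics(responses, key, group_fraction=0.27):
    """Compute simple item statistics for an MCQ test.

    responses: list of dicts, one per student, mapping question -> chosen
        option, or None if the question was left blank.
    key: dict mapping question -> correct option.
    group_fraction: share of the class in each of the upper and lower
        groups used for the discrimination index (0.27 is a common choice).
    """
    n = len(responses)
    if n == 0:
        raise ValueError("no responses")
    # Total score per student, used to rank the class.
    totals = [sum(r.get(q) == a for q, a in key.items()) for r in responses]
    order = sorted(range(n), key=lambda i: totals[i])
    g = max(1, round(n * group_fraction))
    lower, upper = order[:g], order[-g:]

    stats = {}
    for q, answer in key.items():
        chosen = [r.get(q) for r in responses]
        # Frequency Measure: how often each option (or a blank) was chosen.
        frequency = Counter("omitted" if c is None else c for c in chosen)
        # Facility Index: proportion of the whole class answering correctly.
        facility = sum(c == answer for c in chosen) / n
        # Discrimination Index: upper-group minus lower-group success rate.
        upper_ok = sum(responses[i].get(q) == answer for i in upper)
        lower_ok = sum(responses[i].get(q) == answer for i in lower)
        stats[q] = {
            "facility": facility,
            "frequency": dict(frequency),
            "discrimination": (upper_ok - lower_ok) / g,
        }
    return stats
```

A question whose discrimination value is near zero or negative is worth reviewing alongside its Frequency Measure, to see which wrong option attracted the stronger students.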

3.10.2 These statistics are extremely useful for evaluating an MCQ test, and convenors and moderators should use them routinely to identify any problems with a test. (Note that the new JavaCards 2002 software does not generate as much information as the older WinCards; see Section 3.8.7.)

3.10.3 Using the marks stored in an Excel spreadsheet, the Module Convenor can generate further statistics, including the mean, standard deviation and upper and lower quartile marks for the group.
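The same statistics can also be computed outside Excel. Below is a minimal sketch using Python's standard `statistics` module (Python 3.8 or later). The `method="inclusive"` setting is intended to match Excel's QUARTILE.INC, and `statistics.stdev` is the sample standard deviation, corresponding to Excel's STDEV.S; the function name is hypothetical.

```python
import statistics


def summarise_marks(marks):
    """Mean, sample standard deviation and quartiles for a list of marks."""
    if len(marks) < 2:
        raise ValueError("need at least two marks")
    # Three cut points dividing the marks into four equal groups.
    q1, median, q3 = statistics.quantiles(marks, n=4, method="inclusive")
    return {
        "mean": statistics.mean(marks),
        "sd": statistics.stdev(marks),
        "lower_quartile": q1,
        "median": median,
        "upper_quartile": q3,
    }
```

For the marks 10, 20, 30, 40 and 50, this gives a mean of 30 and lower and upper quartiles of 20 and 40.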

3.10.4 The SLTC should take a policy decision on the desirability of the Convenor and/or the Moderator adjusting the overall marks of a particular MCQ Test or Exam. The general policy at present is not to adjust marks unless the Moderator and/or Convenor feel that there are exceptional circumstances.

3.10.5 Since the computer-marked cards provide a permanent record of a test, they should be stored securely in a box together with the Master Card. This enables an audit to be undertaken if desired. Comparing results from successive years can also provide valuable information about relative performance, even when the questions differ. We recommend that sets of cards for Level 1 Exams and Tests be retained for one year, and those for Level 2 and Level 3 Exams and Tests for two years.

3.11 Software for Assessing MCQs

3.11.1 The WinCards software for processing MCQ cards was developed in the University by Andrew Booth, and the new JavaCards 2002 software by John Hodrien and Andrew Booth. Both run on an IBM PC-compatible computer under Microsoft Windows. The software is relatively easy to learn and to use effectively. WinCards is installed on a computer connected to a card reader in the School's Taught Courses Office.

3.11.2 MCQ tests may also be taken in computer laboratories using online MCQs. Question papers should be prepared in the usual manner but taken to Dr Andrew Booth, who is currently Director of the Flexible Learning Development Unit in the University. He will make the MCQs available over the University network using custom software that he has developed. Students select their answers online, the answers are processed automatically, and the results are returned to Module Convenors by email.

3.12 Provision of Sample MCQs for Each Module

3.12.1 Designing and writing good multiple-choice questions to cover the material in a module takes time, practice and patience, but the resulting reduction in time spent on exam marking can be significant, especially for large classes. Time invested in researching different types of multiple-choice questions and answers can therefore pay off in faster marking.


3.12.2 Convenors should aim to create a bank of MCQs to cover the material in their module. The MCQs should generally be of different types and meet different cognitive objectives of the module.
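One way to keep such a bank organised is to tag each question with its style and the cognitive objective it targets, so that gaps are easy to spot. The Python sketch below uses the six stages of Bloom's original taxonomy, to which the recommendations in Section 3.15 refer. The class names and the example style labels are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field

# The six stages of Bloom's original taxonomy, from lowest to highest.
BLOOM_STAGES = ("knowledge", "comprehension", "application",
                "analysis", "synthesis", "evaluation")


@dataclass
class Question:
    stem: str
    options: list
    answer: str
    style: str        # free text, e.g. "single best answer" (illustrative)
    bloom_stage: str  # one of BLOOM_STAGES

    def __post_init__(self):
        # Reject entries that would make the bank inconsistent.
        if self.bloom_stage not in BLOOM_STAGES:
            raise ValueError(f"unknown Bloom stage: {self.bloom_stage}")
        if self.answer not in self.options:
            raise ValueError("answer must be one of the options")


@dataclass
class QuestionBank:
    questions: list = field(default_factory=list)

    def add(self, question):
        self.questions.append(question)

    def coverage_by_stage(self):
        """Number of questions at each Bloom stage, including empty stages."""
        counts = Counter(q.bloom_stage for q in self.questions)
        return {stage: counts[stage] for stage in BLOOM_STAGES}

    def styles(self):
        """Number of questions of each style in the bank."""
        return dict(Counter(q.style for q in self.questions))
```

A stage with a count of zero in `coverage_by_stage()` shows a cognitive objective that the bank does not yet test.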

3.12.3 Students should be given a sample of typical MCQs for each module. The sample should provide the answer to each question, with explanations of why some answers are correct and others are wrong. Lecturers should generally go over the sample questions in class, so that students become familiar with what will be expected of them in an MCQ test.

3.13 Tapping Into Existing Banks of MCQs for Ideas

3.13.1 Lecturers can also explore existing banks of MCQs for ideas on how to structure their own questions. There are many books containing sets of sample questions from other disciplines, and banks of questions are available in EndNote, Access and other software formats. Their chief benefit is as a source of ideas on how to construct different types of MCQ, rather than as questions to be used directly. The Internet contains a wealth of sources of MCQ tests in various disciplines, including some aspects of geography.

3.13.2 Some MCQ tests prepared and tested in other disciplines and available in texts might also be of interest to geographers.

3.14 Sharing Best Practice in the Use of MCQs in Geography

3.14.1 To improve practice in the School, outside speakers should be invited to give a workshop on the use of MCQ tests in Geography, and be encouraged to cover all aspects of using MCQ tests.

3.14.2 Diverse procedures and computer systems for MCQ tests are now used in universities in the UK and USA. These should be monitored and evaluated to determine whether we might benefit from them.

3.15 Recommendations to the School Learning and Teaching Committee

3.15.1 MCQ assessment should be seen as part of a comprehensive system of selecting an integrated set of appropriate assessment tools for each module at a given level. No particular tool should be seen as inferior or superior to any other; each should simply be evaluated for its appropriateness and for how it can complement other tools. Recommendations on how to do this are made at the end of Part 1 of this report.

3.15.2 To provide an outline framework for defining the role of MCQ tests within such a comprehensive system of assessment, the SLTC should define pedagogical aims for each level of study.

3.15.3 As we should expect a gradual progression in students' knowledge and skills from Level 1 to Level 3, the assessment tools used at each level, and the type and complexity of the questions set in each assessment tool, should reflect this progression.


3.15.4 Within this framework, we consider it to be entirely justified to use MCQ tests for summative assessment at Level 1, and for formative assessment at Levels 2 and 3.

3.15.5 Marks derived from MCQ assessment tools can account for over half of the total mark of Level 1 modules, whose desired learning outcomes lie in the lower stages of the Bloom Taxonomy, and at this level such tools can be used for both formative and summative assessment. At Levels 2 and 3, MCQ tools should be restricted to formative assessment and provide less than 50% of the total module mark.
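The weighting rules in 3.15.4 and 3.15.5 can be expressed as a simple check, for example when reviewing module assessment schemes. This Python function is a hypothetical sketch of the proposed rules, not an existing School tool. It treats "less than 50%" as a strict inequality and places no upper limit on MCQ marks at Level 1, since none is stated.

```python
def mcq_weighting_ok(level, mcq_marks, module_marks, summative):
    """Check an MCQ component against the proposed weighting rules.

    level: 1, 2 or 3.
    mcq_marks / module_marks: marks from MCQ tools and the module total.
    summative: True if the MCQ component is used for summative assessment.
    """
    if module_marks <= 0 or not 0 <= mcq_marks <= module_marks:
        raise ValueError("invalid mark totals")
    if level == 1:
        # Level 1: formative or summative, and may exceed half the module mark.
        return True
    if level in (2, 3):
        # Levels 2 and 3: formative only, and strictly under 50% of the mark.
        return not summative and mcq_marks / module_marks < 0.5
    raise ValueError("level must be 1, 2 or 3")
```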

3.15.6 MCQ tests at all three levels should contain a mixture of question styles that test learning outcomes at various stages of the Bloom Taxonomy. From Level 1 to Level 3 there should be an increase in the proportion of questions that test learning outcomes at the middle or high stages of the taxonomy.
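This progression can be checked by tagging each question with its Bloom stage and computing the share of middle- and high-stage questions in each level's test. In the Python sketch below we assume that "middle or high stages" means application and above; that boundary, and the function names, are our own illustrative choices.

```python
# Assumption: "middle or high" Bloom stages taken to be application and above.
HIGHER_ORDER = {"application", "analysis", "synthesis", "evaluation"}


def higher_order_share(stages):
    """Fraction of a test's questions aimed at middle or high Bloom stages.

    stages: one Bloom stage name per question, e.g. ["knowledge", "analysis"].
    """
    if not stages:
        raise ValueError("test has no questions")
    return sum(stage in HIGHER_ORDER for stage in stages) / len(stages)


def is_mixed(stages):
    """True if a test draws on more than one Bloom stage."""
    return len(set(stages)) > 1


def progression_ok(tests_by_level):
    """Check that every test is mixed and the higher-order share rises by level.

    tests_by_level maps level (1, 2, 3) -> list of Bloom stages for that test.
    """
    levels = sorted(tests_by_level)
    if not all(is_mixed(tests_by_level[lvl]) for lvl in levels):
        return False
    shares = [higher_order_share(tests_by_level[lvl]) for lvl in levels]
    return all(lower < higher for lower, higher in zip(shares, shares[1:]))
```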

3.15.7 Students should be fully informed beforehand about the content of MCQ tests and their role in completing them, and should be given prompt feedback on their results.

3.15.8 Colleagues employing MCQ assessment should be asked to follow this Code of Practice, particularly as it relates to the design, implementation and processing of tests. This should reduce, if not eliminate, many of the problems that have occurred until now.

3.15.9 The SLTC should take a policy decision on whether the Convenor can, on the advice or with the agreement of the Moderator, adjust the overall marks of a particular MCQ Test or Exam. The general policy at present is not to adjust marks unless the Moderator and/or Convenor feel that there are exceptional circumstances. We recommend that this policy be maintained, as the need for moderation arises predominantly from the subjectivity associated with marking essay exams.

3.15.10 The SLTC should discuss whether to take a policy decision on the desirability of negative marking, or to leave the matter to individual module convenors to decide.
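To inform that discussion, it may help to see how one common scheme works. The classical correction for guessing deducts 1/(k - 1) of a mark for each wrong answer on a question with k options, so that blind guessing has an expected score of zero, while omitted answers score nothing. The Python sketch below implements that scheme only; other penalty rules exist, the function name is hypothetical, and nothing here reflects a School decision.

```python
def corrected_score(n_right, n_wrong, n_options):
    """Raw score minus the classical correction for guessing.

    Each wrong answer costs 1/(n_options - 1) marks; omitted questions
    are simply not counted. Assumes every question has n_options options
    and carries one mark.
    """
    if n_options < 2:
        raise ValueError("each question needs at least two options")
    if n_right < 0 or n_wrong < 0:
        raise ValueError("counts must be non-negative")
    return n_right - n_wrong / (n_options - 1)
```

For example, on a 40-question test with four options per question, a student who guesses every answer would expect about 10 right and 30 wrong, giving 10 - 30/3 = 0.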

3.15.11 The SLTC should appoint an MCQ Coordinator with a responsibility to:

(a) Keep this Code of Practice under review;

(b) Provide advice to members of staff on MCQ design and implementation and card reader operation procedures;

(c) Report to the Head of Department when hardware or software problems render the MCQ marking system non-viable and remedial action is required;

(d) Liaise with the Director of Learning and Teaching, the Examinations Officer and the Departmental Coordinator;

(e) Advise the School on improvements in best practice within the University and outside.

3.15.12 We commend this Code of Practice to the SLTC, and would welcome comments and suggestions for amending it from the committee, external examiners, learning and teaching experts within the University, and experts in other universities, before it is formally adopted. The aim should be to ensure that the use of MCQ tools in the School conforms with norms in this University and in other universities, in Geography and other disciplines.
