
A CHARACTERISTICS-BASED BANDWIDTH REDUCTION TECHNIQUE

FOR PRE-RECORDED VIDEOS

Wallapak Tavanapong Srikanth Krishnamohan

Department of Computer Science

Iowa State University

Ames, IA 50011-1040

Email: {tavanapo,srikk}@cs.iastate.edu

ABSTRACT

Advances in networking infrastructures, computing capabilities, multimedia processing technologies, and the World Wide Web have brought about real-time delivery of multimedia content through the Internet. While more communication bandwidth is expected in the near future, it is currently either costly or unavailable to a large number of users. For important applications such as distance learning to be successful, bandwidth limitations must be taken into account.

In this paper, we propose a novel approach that uses unique characteristics of lecture videos to reduce their bandwidth requirement for distance learning applications. Experimental results on our test videos demonstrate that our approach reduces the bandwidth requirement to only about 4% of that of the video encoded in MPEG and about 13% of that of the same video in Real format.

1. INTRODUCTION

Advances in networking infrastructures, computing capabilities, multimedia processing technologies, and the World Wide Web have made delivery of multimedia content through the Internet a reality. Recent years have witnessed much progress in multimedia delivery for electronic commerce, such as the ubiquitous MP3 music and online news videos; nevertheless, incorporating video and audio in course materials for distance learning has not been as successful due to two major problems.

1. Limited bandwidth at the user's end: Although the bandwidth of the backbone network is approaching gigabit rates, individual users are unlikely to enjoy this bandwidth because it is cost prohibitive. End users' options for bandwidth above modem rates are technologies such as ADSL and cable modems, which carry a higher cost of ownership. For distance learning to reach a large audience, such an environment cannot be assumed.

2. Inadequate and complicated authoring tools: Multimedia authoring remains a challenging research problem. While several tools [1, 2] have been developed, they require a considerable amount of computer-related knowledge and preparation effort, making multimedia authoring a time-consuming and labor-intensive process.

The first problem has been rigorously investigated, as is evident from the development of various compression techniques [3, 4] and client-server technologies [5, 6, 7] that utilize the limited bandwidth effectively. Current solutions to the second problem involve administrative support that offers incentives to lecturers who develop online courses. In this paper, we propose an alternative solution that addresses both problems. While existing solutions can produce high to very high quality multimedia presentations, they require multiple capturing devices, changes in the lecturer's teaching style, or preloading of course materials in some format such as PowerPoint. In most cases, these techniques are not concerned with the bandwidth consumption of the presentation.

0-7803-6536-4/00/$10.00 (c) 2000 IEEE

To reduce the amount of preparation effort, we capture lectures using a single camera. Without losing any lecture content or degrading the lecture quality, we exploit the unique characteristics of the videos to reduce the bandwidth consumption of the presentation beyond that obtained using well-known compression techniques such as MPEG or RealVideo. The output of our approach is a slide-show presentation in SMIL (Synchronized Multimedia Integration Language) [8] format. The quality of our presentation varies depending on the capability of the video camera. The unique characteristic of lecture videos is that a discussion of an issue presented on the screen often lasts for several minutes, during which there is no change in the screen content. Displaying one high-quality image of the screen and streaming only the associated audio segment results in the minimum bandwidth. An additional benefit of the proposed technique over advance preparation of online materials is that interactions between the lecturer and his/her audience are also captured and can later be shared with other remote audiences.

The remainder of this paper is organized as follows. Section 2 presents related work to make the paper self-contained. We describe our proposed technique and quantify the benefits of our approach in Section 3 and Section 4, respectively. Our concluding remarks are given in Section 5.

2. RELATED WORK

To constrain the bandwidth requirement of a video to the rate supported by the user's access device, video quality is often compromised. Frequently used techniques are reductions in frame size, frame rate, and pixel depth (number of bits per pixel). While these techniques may be suitable for some types of videos such as news clips, they do not fit lectures or seminars well. Since text and pictures presented on the screen are important to the understanding of the lectures, reductions in frame size are not acceptable. Using a lower frame rate or fewer bits per pixel does not result in the minimum bandwidth.


Notable research projects developed for distance learning are the Experience-on-Demand (EOD) project [9], Classroom 2000 (C2K) [10], the Cornell Lecture Browser [11], the AutoAuditorium [12], STREAMS [13], and the techniques by Minneman et al. [14]. We present the important features that distinguish these systems from one another in the following.

- Hardware requirements: Capturing devices range from a couple of video cameras to a specialized room equipped with a variety of devices such as video cameras, electronic whiteboards, and sensing devices.

- Capturing techniques: Lecturers can either conduct their presentation normally (e.g., the Cornell Lecture Browser, EOD, STREAMS) or are required to aid the capturing activities, for example by preparing their materials as PowerPoint slides or teaching with electronic whiteboards (e.g., C2K and [14]).

- End results: The results of these systems are either presentations (e.g., the Cornell Lecture Browser, C2K), videos with various resolutions (e.g., STREAMS), or tape (e.g., AutoAuditorium).

Using the same criteria, our system differs from each of these systems in at least one aspect. In summary, our proposed technique requires a single video camera, passively captures the lecture, and produces a slide-show presentation as the end result. Most importantly, the proposed technique focuses on reducing the bandwidth requirement of the presentation.

3. CHARACTERISTICS-BASED BANDWIDTH

REDUCTION TECHNIQUE

To identify video frames in which significant changes occur, we adapt a shot boundary detection technique proposed in the literature. A cut is a joining point between two different shots without the insertion of any special effect. Special effects such as fades, dissolves, or wipes are typically inserted between two cuts and result in gradual transitions.

The algorithm takes a captured lecture video encoded in MPEG-1 format as input. In the ideal case, the algorithm outputs a set of JPEG images, audio segments, and a SMIL document that defines the layout, the presentation order, and the association between images and audio segments. The proposed technique consists of two phases as follows.

Learning phase: This phase consists of several steps to derive the color differences of consecutive frames. Video frames are extracted from the MPEG file and passed through the following steps.

1. Channel Separation: In this step, the red components of the RGB color are extracted. Since there are few or no changes in the background during one lecture, one color component is sufficient for shot boundary detection. We note that other color models such as YUV or HSV can also be used.

2. Edge Detection: The red frame is then filtered to reduce noise. The Canny edge detection algorithm is then applied to the frame to extract the edges used in the next step.

3. Content Region Detection: To avoid triggering new images because of the lecturer's habits, such as using fingers to move the slide up and down, we do not consider movements outside the margins of the content portion (e.g., outside the text area). These margins are determined from the edge-detected frame as follows. For each horizontal line, we first identify possible candidates for the left margin and the right margin. For the left margin, we start from the leftmost pixel and search for the first edge pixel that seems to be part of the first character from the left (i.e., several edge pixels follow this pixel in the same horizontal line). Candidates for the right margin are detected similarly, except that the search starts from the rightmost pixel. From the corresponding candidates, we choose the two margins that result in the narrowest content region. Since detecting the margins as proposed is time consuming and the margins do not change often, we perform this procedure once and use the resulting margins for the other frames in the video.

4. Frame Difference Calculation: We use a 2-bin histogram to capture the distribution of the pixel values of a red frame. If the pixel value is below 128, the pixel color is approximated as black; otherwise, it is approximated as white. We define $D(i, i+1)$ as the color difference between frames $i$ and $i+1$. Let $B(i)$ and $W(i)$ be the number of black pixels and white pixels in frame $i$, respectively. $D(i, i+1)$ can be expressed mathematically as

$$D(i, i+1) = |B(i) - B(i+1)| + |W(i) - W(i+1)| \tag{1}$$

We note that more histogram bins can be used if the lecture video is more colorful.
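As a rough illustration of Equation (1), the following Python sketch counts the black and white pixels of two red-channel frames and sums the absolute differences of the counts. The function names and the representation of a frame as a flat sequence of 8-bit red values are assumptions made for this example; the paper does not describe its implementation at this level.

```python
from typing import Sequence, Tuple

BLACK_WHITE_SPLIT = 128  # values below this are treated as black, per the text


def two_bin_histogram(red_pixels: Sequence[int]) -> Tuple[int, int]:
    """Return (B, W): the black and white pixel counts of one red frame."""
    black = sum(1 for value in red_pixels if value < BLACK_WHITE_SPLIT)
    return black, len(red_pixels) - black


def frame_difference(frame_a: Sequence[int], frame_b: Sequence[int]) -> int:
    """D(i, i+1) = |B(i) - B(i+1)| + |W(i) - W(i+1)|, as in Equation (1)."""
    black_a, white_a = two_bin_histogram(frame_a)
    black_b, white_b = two_bin_histogram(frame_b)
    return abs(black_a - black_b) + abs(white_a - white_b)


# Toy 2x3 frames flattened row by row (hypothetical pixel values).
slide = [250, 250, 10, 250, 10, 250]      # 2 black, 4 white
next_slide = [10, 10, 10, 250, 10, 250]   # 4 black, 2 white
print(frame_difference(slide, next_slide))  # 4
```

When both frames have the same number of pixels, the white count is the total minus the black count, so the two terms of Equation (1) are equal and the difference is twice the change in the black count.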

Cut phase: In this phase, we use the calculated frame differences to determine which images to generate. A sharp cut is usually detected when the difference between two consecutive frames is significantly larger than a pre-defined threshold ($T$) and much smaller frame differences immediately follow the cut point. A gradual transition is generally detected when a gradual increase in frame differences is followed by a gradual decrease. For gradual transitions, the difference between two consecutive frames is not very high; however, the accumulated difference between the beginning of the gradual transition and the middle of the transition is large.

We observe from our lecture videos that movements of the lecturers exhibit two properties: (i) large frame differences as found in traditional cuts and (ii) gradual changes in these differences as found in gradual transitions. We therefore employ the following techniques to generate the output.

1. Threshold Calculation: Let $\mu$ and $\sigma$ be the mean and the standard deviation of the frame differences, respectively. We select $T$ to be as large as $\mu + 4\sigma$ due to property (i). The term $4\sigma$ was determined experimentally.

2. Representative Frame Selection: We first record the frames whose differences are larger than $T$. These frames are boundary frames. Due to the gradual change property, these frames are in the middle of the changes and are not suitable as representative frames. We currently choose the frame with the smallest difference among its boundary frames as the representative frame. We think that techniques based on cross-modal relationships between audio and text can be used to select a better representative frame.

   It is possible that boundary frames occur near each other (within one second) when the lecturer frequently moves the slides or accidentally blocks the camera view. In this case, instead of generating several images, which would make synchronization with the audio segments difficult, we encode the boundary frames and the frames in between as one MPEG file.

3. Media Synchronization: Once the representative frames are selected, they are encoded in JPEG format. The playout times of the representative frames are extracted, and the audio packets whose playout times fall between the playout times of two consecutive representative frames are taken from the MPEG system file and re-encoded. JPEG is chosen as the image file format because it offers a good compression ratio and is accepted by most SMIL viewers. SMIL is an XML-based language being developed by the W3C. The SMIL specification allows integration and synchronization of several media elements such as text, videos, and audio. Presentation layout and timing relationships among these elements can be specified.
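The threshold calculation and representative frame selection of the Cut phase could be sketched as follows. This is only one reading of the description, under several assumptions: the population standard deviation is used, "within one second" is taken as 30 frames at 29.97 fps, and the representative frame of each stable stretch is the frame with the smallest difference between neighboring boundary clips. All function names are hypothetical.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def threshold(differences: Sequence[float], k: float = 4.0) -> float:
    """T = mu + k * sigma; the text reports k = 4 as found experimentally."""
    return mean(differences) + k * pstdev(differences)


def boundary_frames(differences: Sequence[float], t: float) -> List[int]:
    """Indices i whose difference D(i, i+1) exceeds the threshold."""
    return [i for i, d in enumerate(differences) if d > t]


def group_boundaries(boundaries: Sequence[int],
                     max_gap: int) -> List[Tuple[int, int]]:
    """Merge boundary frames at most max_gap frames apart into (first, last) clips."""
    clips: List[Tuple[int, int]] = []
    for index in boundaries:
        if clips and index - clips[-1][1] <= max_gap:
            clips[-1] = (clips[-1][0], index)   # extend the current clip
        else:
            clips.append((index, index))        # start a new clip
    return clips


def representative_frames(differences: Sequence[float],
                          clips: Sequence[Tuple[int, int]]) -> List[int]:
    """Pick the frame with the smallest difference in each stretch between clips."""
    chosen: List[int] = []
    start = 0
    end = len(differences)
    for first, last in list(clips) + [(end, end)]:  # sentinel closes the last stretch
        stretch = range(start, first)
        if stretch:
            chosen.append(min(stretch, key=lambda i: differences[i]))
        start = last + 1
    return chosen


# Synthetic frame differences (hypothetical values, not measured data).
diffs = [1.0] * 124
diffs[50] = 500.0                          # isolated slide change
diffs[91], diffs[93] = 480.0, 510.0        # two changes within one second
diffs[20] = diffs[70] = diffs[110] = 0.0   # quietest frame of each stretch

t = threshold(diffs)
bounds = boundary_frames(diffs, t)
clips = group_boundaries(bounds, max_gap=30)
print(bounds, clips, representative_frames(diffs, clips))
```

In this sketch a clip spanning more than one frame corresponds to the case where nearby boundary frames and the frames between them are encoded as one MPEG file, while each representative frame would become one JPEG image.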

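To give a sense of what the generated SMIL document might look like, the sketch below builds a minimal slide show in which each JPEG image is shown in parallel with its audio segment and the pairs play in sequence. The file names, durations, region name, and the `build_smil` helper are hypothetical; only the general SMIL structure (`head`/`layout`, `body`, `seq`, `par`, `img`, `audio`) is taken from the SMIL specification, and the paper does not show its actual output.

```python
import xml.etree.ElementTree as ET
from typing import Sequence, Tuple


def build_smil(slides: Sequence[Tuple[str, str, float]],
               width: int = 352, height: int = 240) -> str:
    """Return a SMIL document that plays (image, audio, seconds) triples in order."""
    smil = ET.Element("smil")
    layout = ET.SubElement(ET.SubElement(smil, "head"), "layout")
    ET.SubElement(layout, "root-layout", width=str(width), height=str(height))
    ET.SubElement(layout, "region", id="slide",
                  width=str(width), height=str(height))
    seq = ET.SubElement(ET.SubElement(smil, "body"), "seq")
    for image, audio, seconds in slides:
        par = ET.SubElement(seq, "par")  # image and audio are presented together
        ET.SubElement(par, "img", src=image, region="slide",
                      dur=f"{seconds:g}s")
        ET.SubElement(par, "audio", src=audio)
    return ET.tostring(smil, encoding="unicode")


# Hypothetical output files of the media synchronization step.
document = build_smil([
    ("slide1.jpg", "segment1.rm", 95.0),
    ("slide2.jpg", "segment2.rm", 210.5),
])
print(document)
```

The default frame size of 352x240 matches the captured frame size reported for the test videos; a small MPEG clip could be referenced the same way with a `video` element in place of `img`.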

4. PERFORMANCE STUDY

In this section, we discuss the experimental setup and the experimental results. We assess the effectiveness of our technique using the bandwidth consumption ratio ($BC_r$), defined as follows.

$$BC_r = \frac{\text{total size (in bytes) of files generated}}{\text{reference size}}$$

The reference size is the size in bytes of the original video encoded in MPEG-1 format. We measure the bandwidth consumption ratio for our approach (Characteristics-Based, CB $BC_r$), Real format with standard quality (Real $BC_r$), and Real format with slide-show quality (Real-Slide $BC_r$). We compare these to the Optimal $BC_r$, defined as the ratio of the size of the manually selected files to the reference size. The best technique is the one whose bandwidth consumption ratio is closest to the optimal ratio. The target bitrate for both Real formats was set to 28.8 kbps.

4.1. Test Videos

We used five videos, each captured from a lecture at 29.97 fps. The videos were later encoded into MPEG-1 system files using an MPEG-1 hardware encoder. An MPEG demultiplexer was used to separate the video and audio components. We present results that account for the video component only. The captured frame size was 352x240 pixels. The position of the video camera was fixed throughout the entire capturing process, and its focus was set to the overhead projected screen.

The characteristics of the videos are presented in Table 1. It shows the number of frames in each test video and the optimal number of manually selected frames. These frames represent all the content of the video. To compute the reference size shown in Table 1, we extracted a number of consecutive frames from the MPEG system file and encoded them again using the Berkeley MPEG software encoder. We note that the number of frames chosen to create each test video is small because it is time consuming to manually identify the best representative images for each video. Nevertheless, these short videos are sufficient to demonstrate the performance of our technique, as the results in Section 4.2 show.

Table 1: Characteristics of Test Videos

| Video | Frames | Reference size (bytes) | Optimal frames |
|---|---|---|---|
| testvid1 | 5000 | 4,319,924 | 9 |
| testvid2 | 5000 | 4,274,344 | 3 |
| testvid3 | 5500 | 4,782,729 | 12 |
| testvid4 | 1000 | 844,134 | 3 |
| testvid5 | 5000 | 3,572,256 | 7 |

4.2. Experimental Results

Table 2 shows the bandwidth consumption ratios obtained with the various techniques. For each video, we present the number of JPEG and MPEG files generated using our technique. With our technique, the consumption ratios are around 4% for all the videos except testvid4. Compared with the optimal $BC_r$, our technique requires at most four times more bandwidth, while the Real formats require at most twenty-five times more bandwidth. The bandwidth consumption ratios for videos in Real format are at least 21% for slide-show quality and 29% for standard video quality. Although the frame size is reduced in the Real video format, the Real $BC_r$ is still high compared to the optimal $BC_r$. In our technique, the frame sizes remain the same and there is no compromise in quality. The high values of the Real $BC_r$ can be attributed to noise present in the frames, caused for example by the lecturer's hand movements. Noise can cause large frame differences between consecutive frames even though the useful content of these frames is similar. As a result, the benefit of the interframe coding used in standard compression techniques is reduced. We note that the small MPEG files obtained from our technique were encoded using the same Berkeley MPEG encoder configuration as the reference video file.
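The factors quoted in this paragraph can be cross-checked against the ratios listed in Table 2 with a few lines of Python. The values are transcribed from Table 2; the assignment of the Real-Slide column to the videos follows the order in which the values appear and is therefore an assumption, as are the helper and variable names.

```python
# Ratios as read from Table 2: (optimal, CB, Real standard, Real slide show).
TABLE_2 = {
    "testvid1": (0.015, 0.044, 0.297, 0.232),
    "testvid2": (0.013, 0.039, 0.311, 0.329),
    "testvid3": (0.026, 0.041, 0.303, 0.311),
    "testvid4": (0.032, 0.122, 0.327, 0.337),
    "testvid5": (0.017, 0.042, 0.354, 0.217),
}


def worst_case_factor(column: int) -> float:
    """Largest ratio of a technique's BC_r to the optimal BC_r over all videos."""
    return max(row[column] / row[0] for row in TABLE_2.values())


cb_factor = worst_case_factor(1)      # characteristics-based technique
real_factor = worst_case_factor(2)    # Real, standard video quality
slide_factor = worst_case_factor(3)   # Real, slide-show quality

# Average CB-to-Real ratio over the videos other than testvid4.
typical = [row[1] / row[2] for name, row in TABLE_2.items() if name != "testvid4"]
cb_vs_real = sum(typical) / len(typical)

print(f"CB vs optimal, worst case:         {cb_factor:.1f}x")
print(f"Real vs optimal, worst case:       {real_factor:.1f}x")
print(f"Real-Slide vs optimal, worst case: {slide_factor:.1f}x")
print(f"CB relative to Real, typical:      {cb_vs_real:.0%}")
```

With these values the worst-case factors come out at roughly 3.8 for the characteristics-based technique and roughly 24 to 25 for the two Real formats, and the typical CB-to-Real ratio is about 13%, in line with the figures stated in the text.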

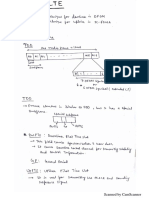

The distribution of the frame differences calculated using Equation (1) is shown in Figure 1 for testvid5. The straight line shows the threshold $T$ used in the Cut phase. Figure 1 shows the three locations of the frames that constitute the three small MPEG files (the points where clustered frames have frame differences above the threshold line). These files contain the lecturer's movements to change the position of the cover paper up and down. We found that the content-region detection step in Section 3 lessened the effects of finger movements outside the content region in several frames, such as frames 433 to 435 and frames 654 to 727.

5. CONCLUDING REMARKS

We have presented an alternative approach that uses the unique characteristics of lecture videos to reduce the bandwidth requirements of lecture-like videos for distance learning applications. In most cases, our technique reduces the bandwidth requirement to only around 4% of that of the video encoded in MPEG format and about 13% of that of the videos in Real format. The amount of savings in the optimal case is extremely attractive. The proposed technique requires about four times more bandwidth than the optimal case. We are currently developing a new technique to further reduce the bandwidth for lecture videos and extending the idea to videos generated from sensing devices observing real-world tasks such as surgeries and scientific experiments.

Table 2: Bandwidth consumption ratios using various techniques

| Video | No. JPEG Files | No. MPEG Files | Optimal BCr | CB BCr | Real BCr (Standard Video Quality) | Real-Slide BCr (Slide Show) |
|---|---|---|---|---|---|---|
| testvid1 | 8 | 5 | 0.015 | 0.044 | 0.297 | 0.232 |
| testvid2 | 14 | 3 | 0.013 | 0.039 | 0.311 | 0.329 |
| testvid3 | 11 | 3 | 0.026 | 0.041 | 0.303 | 0.311 |
| testvid4 | 2 | 1 | 0.032 | 0.122 | 0.327 | 0.337 |
| testvid5 | 5 | 3 | 0.017 | 0.042 | 0.354 | 0.217 |

Figure 1: Distribution of Frame Differences for testvid5

6. REFERENCES

[1] R. Baecker, A. J. Rosenthal, N. Friedlander, E. Smith, and A. Cohen. A multimedia system for authoring motion pictures. In Proc. of ACM Multimedia '96, pages 31-42, Boston, MA, November 1996.

[2] D. C. A. Bulterman, L. Hardman, J. Jansen, K. S. Mullender, and L. Rutledge. GRiNS: A Graphical INterface for Creating and Playing SMIL Documents. Computer Networks and ISDN Systems, September 1998.

[3] K. R. Rao and J. J. Hwang. Prentice-Hall PTR, Upper Saddle River, NJ, 1996.

[4] ISO/IEC JTC1/SC29/WG11. JTC1/SC29/WG11 N2995 coding of moving pictures and audio. In http://drogo.cselt.stet.it/mpeg/standards/mpeg-4/mpeg-4.htm, October 1999.

[5] W. Tavanapong, K. A. Hua, and J. Z. Wang. A framework for supporting previewing and VCR operations in a low bandwidth environment. In Proc. of ACM Multimedia '97, pages 303-312, Seattle, WA, November 1997.

[6] K. A. Hua, W. Tavanapong, and J. Z. Wang. 2PSM: An efficient framework for searching video information in a limited bandwidth environment. ACM Multimedia Systems, 7(5):396-408, September 1999.

[7] RealPlayer. In http://www.real.com.

[8] W3C. W3C working draft: Synchronized Multimedia Integration Language (SMIL) Boston specification. In http://www.w3.org/TR/smil-boston/, November 1999.

[9] Education on demand project. In http://www.informedia.cs.cmu.edu/eod/.

[10] G. Abowd et al. Teaching and learning as multimedia authoring: The Classroom 2000 project. In Proc. of ACM Multimedia '96, pages 187-198, Boston, MA, November 1996.

[11] Sugata Mukhopadhyay and Brian Smith. Passive capture and structuring of lectures. In Proc. of ACM Multimedia '99, pages 477-487, Orlando, FL, October 1999.

[12] M. Bianchi. AutoAuditorium: A fully automatic, multi-camera system to televise auditorium presentations. In Joint DARPA/NIST Smart Spaces Workshop, Gaithersburg, MD, July 1998.

[13] R. Cruz and R. Hill. Capturing and playing multimedia events with STREAMS. In Proc. of ACM Multimedia '94, pages 193-200, San Francisco, CA, October 1994.

[14] S. Minneman, S. Harrison, B. Janssen, T. Moran, G. Kurtenbach, and I. Smith. A confederation of tools for capturing and accessing collaborative activity. In Proc. of ACM Multimedia '95, pages 523-534, San Francisco, CA, November 1995.


- Digital Video TranscodingDokument13 SeitenDigital Video TranscodingKrishanu NaskarNoch keine Bewertungen

- Ip-Distributed Computer-Aided Video-Surveillance System: S Redureau'Dokument5 SeitenIp-Distributed Computer-Aided Video-Surveillance System: S Redureau'Payal AnandNoch keine Bewertungen
