IPA Bulletin, March/April 2005, pages 22-23, 26-29

STANDARDS

Print-to-Proof Match: What's Involved?

Understanding what is needed to use measurement data to predict the quality of a match between a proof and a print.

By David Q. McDowell

One of the most frequent production decisions made in the printing industry is to answer the question, "Do the print and proof match?" Wherever it is made (in prepress, in the press room, by the purchaser of a proofing system, by a trade association certifying proofing systems, etc.), it is a decision that today is based on a visual comparison.

Many, particularly those trying to certify proofing systems, would like to be able to use measurement data to predict the quality of a match between a proof and a print. The ultimate goal is to remove the human element, the visual comparison, with all its uncertainty and individual bias.

On a parallel note, recent discussions of digital proofing at the February ICC (International Color Consortium) meeting in Orlando have identified the need to describe variables involved in both making proofs from digital data and using measurement data to verify the match of proofs and aim characterization data.

These two issues are more intimately related than one would guess at first glance. As part of the validation of any digital proofing system, it is important to determine, and allocate, the variability involved in making individual proofs. Many of these same variables are involved in the analytical comparison of proof and print.

Let's assume that psychophysical testing can give us some measure of the colorimetric variability that can exist between images and still allow us to say they match. We then must identify the sources of variability between the proof and the aim characterization data, or a print made according to that characterization data, and apportion the allowed variability among them. Of course, we must also accept the possibility that there may be too much variability to allow such a prediction to be made.

We will not know until we understand the allowed tolerances based on psychophysical testing and the variability associated with making and measuring the proof.

The Scenario

Before we try to analyze the variables involved, we need to put some boundaries on the problem. Let's assume we are starting with a set of CMYK digital data that have been prepared using CGATS TR 001 as a reference. (For those not familiar with TR 001, it is a characterization data set that provides the relationship between CMYK data and the colors that are measured on a sheet printed or proofed in accordance with the SWOP aims: a digital definition of SWOP.) The goal is to proof the images represented by this data and also print the same data on press and compare the results to each other and to the reference data set. To provide the necessary colorimetric data, we need to print a measurement target at the same time we print the image data that is used for visual comparison.

Making the Proof

In our proofing workflow, we will assume the native behavior of the proofing system does not match the TR 001 characterization and the image data must be modified, using color management, to provide the input data required by the proofer. The intent is that the combination of the modified data and the proofing system's calibrated response will simulate the relationship between the file data and printed color defined by TR 001.


So What Are the Sources of Variability?

First, in order to use color management we need to characterize the proofing device. This involves printing a reference target, measuring the color printed for each patch, and then creating a color profile using this data. The first two sources of variability are:

1. Uncertainty in the colorimetric measurement of the printed reference target used to characterize the proofer.

2. The modeling errors in the proofer profile building process.

Now we are ready to make our proof. The proofer profile we made is used in combination with the profile that characterizes the reference printing condition (which is based on the TR 001 reference data) to modify the image content data so it will provide the proper input to the proofer. We have added three new sources of variability.

3. The modeling errors in the TR 001 profile building process (we assume that the TR 001 data have no variability).

4. The computational errors in the color management system when it applies the profiles to the data.

5. The variability of the proofer (changes in proofing system performance between the time the characterization data was printed and the time the proof is made).

Now that we have our proof, how closely does the target we printed along with the image data match our reference data? To know that, we must measure the target printed with the image data, which adds another source of variability.

6. Uncertainty in the colorimetric measurement of the target printed on the proofing device along with the image data.

Do We Have to Account for Other Variability?

Part of the answer depends on whether we are comparing the proof to the reference data or to a press sheet that has been printed using a press that matches the aim printing conditions (unrealistic, but a simplification for this discussion).

If we are going to evaluate the match to a press sheet, we must also account for the variability in the measurement of the press sheet.

The Printed Sheet

The printing process itself has variability; and from a systems point of view, we should assign a tolerance to the variability of printing. For now, let's leave that out of the equation and assume that all we want to do is see how well a particular printed sheet matches the proof. Even in that scenario we need to add another source of variability.

7. Uncertainty in the colorimetric measurement of the reference target on the printed sheet.

The Comparison

When we do a comparison visually, we compare a proof and a print and make a judgment about the quality of that match. To try to evaluate and predict the match for any other comparisons of proof and print, we usually compare several samples and make an educated guess. If all of our comparisons look fairly good, we usually predict that others will also look good and we judge the proofing system being evaluated as acceptable. Not real elegant but the best we have.

When we move to an evaluation based on measurement data, it becomes much more complicated. What we would like to do is predict, based on colorimetric measurements, the probability that a given proofing system will consistently produce proofs that predict (match) a given set of printing characterization data and/or printing based on that characterization data. This means that we must account for all of the variability between the input data and the proof and compare that to allowed tolerances that are based on visual comparison tests.

Let's start by looking at our seven sources of variability and see what we know about them.

Print-to-Proof Match: Sources of Variability

1. Uncertainty in the colorimetric measurement of printed reference target used to characterize proofer.

2. Modeling errors in proofer profile building process.

3. Modeling errors in the TR 001 profile building process.

4. Computational errors in the color management system when it applies profiles to data.

5. Variability of the proofer.

6. Uncertainty in the colorimetric measurement of the target printed on the proofing device along with image data.

7. Uncertainty in colorimetric measurement of the reference target on the printed sheet.

Variability Estimates

Measurement Variability: Three of our seven sources of variability (variables 1, 6, and 7 above) involve the uncertainty in the colorimetric measurement process. Frankly, we do not have a good estimate of the variability between instruments, either of the same design or between instruments from different manufacturers. At the ICC meeting, Dr. Danny Rich reported that he is participating in a program with Rochester Institute of Technology to try to get some estimates of instrument variability. This should begin to provide sorely needed data to allow the variability of these steps to be properly estimated.

Some very coarse initial data suggest that the 50th-percentile point (in a cumulative distribution plot) for replicate measurements with the same instrument on the same sheet at different times (the reproducibility) may be on the order of 0.3 to 0.5 delta E. This number will certainly be larger when multiple instruments are involved and still greater when instruments from different manufacturers are included.
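To show what a "50th-percentile point" of replicate measurements means in practice, here is a minimal sketch in Python. It assumes delta E is the CIE 1976 difference (the straight-line distance between two CIELAB points), and the replicate readings are invented purely for illustration; they are not measured data. The names `delta_e_ab`, `first_pass`, and `second_pass` are hypothetical.

```python
import math
import statistics


def delta_e_ab(lab1, lab2):
    """CIE 1976 color difference: Euclidean distance between two CIELAB points."""
    return math.dist(lab1, lab2)


# Hypothetical (L*, a*, b*) readings of the same four patches, taken with the
# same instrument on the same sheet at two different times. Illustrative only.
first_pass = [(52.0, 48.1, -3.2), (88.5, -5.0, 92.3), (24.3, 20.4, -46.0), (16.1, 0.2, 0.4)]
second_pass = [(52.3, 48.0, -3.0), (88.4, -5.3, 92.9), (24.1, 20.9, -45.8), (16.2, 0.1, 0.1)]

# One delta E per patch, sorted as they would appear along a cumulative plot.
differences = sorted(delta_e_ab(a, b) for a, b in zip(first_pass, second_pass))

# The 50th-percentile point is the median: half the patches differ by less.
median_delta_e = statistics.median(differences)
print(f"median delta E between replicate readings: {median_delta_e:.2f}")
```

With a real data set, the same calculation could be repeated for readings from several instruments of one model, and then for instruments from different manufacturers, to see how the median grows.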

Proofer Variability: Most discussions of proofer variability identify within-sheet and between-sheet variability as key ingredients in any estimate. If standard tests were established, this could be a parameter that proofing system manufacturers were asked to provide as part of the specification of their units (variable 5 above). At this point in time, we have neither a standard test nor even a coarse estimate of this parameter. This is probably a task that CGATS or TC130 should be asked to take on relatively quickly.
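Since no standard test exists, the following Python sketch is only one plausible way to separate the two ingredients, not an established method. It assumes the same patch is measured at several positions on each of several sheets, takes within-sheet variability as the average delta E of each reading from its own sheet mean, and takes between-sheet variability as the average delta E of each sheet mean from the mean of all sheets. The readings and the function names are hypothetical.

```python
import math
import statistics


def mean_lab(readings):
    """Component-wise mean of a list of (L*, a*, b*) readings."""
    return tuple(statistics.fmean(channel) for channel in zip(*readings))


def mean_delta_e_from_center(readings):
    """Average CIE 1976 delta E of each reading from the mean of the readings."""
    center = mean_lab(readings)
    return statistics.fmean(math.dist(reading, center) for reading in readings)


# Hypothetical data: one patch measured at three positions on each of three sheets.
sheets = [
    [(50.0, 10.0, 10.0), (50.4, 10.2, 9.8), (49.8, 9.9, 10.1)],
    [(50.6, 10.5, 10.3), (50.9, 10.4, 10.0), (50.5, 10.7, 10.2)],
    [(49.5, 9.6, 9.9), (49.7, 9.8, 9.7), (49.4, 9.5, 10.0)],
]

# Within-sheet: spread of positions around their own sheet mean, averaged over sheets.
within_sheet = statistics.fmean(mean_delta_e_from_center(sheet) for sheet in sheets)

# Between-sheet: spread of the sheet means around the mean of all sheet means.
between_sheet = mean_delta_e_from_center([mean_lab(sheet) for sheet in sheets])

print(f"within-sheet: {within_sheet:.2f} delta E, between-sheet: {between_sheet:.2f} delta E")
```

A real test would also have to fix the target, the number of sheets, and the time between them, which is exactly the kind of detail a standard would need to specify.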

Some proofing materials experience considerable change after they are initially made (similar to ink drying effects) and they should be held for a specified time before they are used or measured. In addition, some materials have a finite lifetime during which their characteristics are stable. These short- and long-term changes in reflection characteristics of proofing materials, and recommendations concerning hold and/or keeping times, are probably best included as part of this source of variability. ISO/TC42 has a number of standards that can be used as the basis for a stability/fading test for graphic arts materials, but none are directly applicable. Again, CGATS and/or TC130 probably should develop such a standard specifically for graphic arts proofing.

Color Management System Variability: As noted, the color management process itself contributes additional sources of variability (variables 2, 3, and 4 above). Profile transforms (even the colorimetric transforms that are used in our scenario) do not perfectly describe the data-to-color relationships of the measured characterization data. In addition, applying these transforms to real data in a CMM (color management module, the name given to the computational engine that applies transforms to data) involves additional interpolation and rounding errors.
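A toy example may help show where interpolation and rounding errors come from. The Python sketch below is not how any particular CMM works; it assumes a single smooth curve as the "true" relationship, stores it in a 17-entry lookup table, interpolates linearly between entries, and rounds the result to 8 bits. The curve, the table size, and all names are hypothetical choices made only for illustration.

```python
def exact_curve(x):
    """Hypothetical smooth device response, used here as the 'true' relationship."""
    return x ** 2.2


GRID_POINTS = 17  # hypothetical lookup-table size
NODES = [exact_curve(i / (GRID_POINTS - 1)) for i in range(GRID_POINTS)]


def lut_lookup(x):
    """Linear interpolation between the two nearest table entries (x in 0..1)."""
    position = x * (GRID_POINTS - 1)
    lower = min(int(position), GRID_POINTS - 2)
    fraction = position - lower
    return NODES[lower] + fraction * (NODES[lower + 1] - NODES[lower])


def quantize_8bit(value):
    """Round to the nearest of 256 levels, as 8-bit image data would be stored."""
    return round(value * 255) / 255


samples = [i / 1000 for i in range(1001)]

# Error from interpolating between table entries instead of using the exact curve.
interpolation_error = max(abs(lut_lookup(x) - exact_curve(x)) for x in samples)

# Error after the interpolated value is also rounded to 8 bits.
total_error = max(abs(quantize_8bit(lut_lookup(x)) - exact_curve(x)) for x in samples)

print(f"worst interpolation error: {interpolation_error:.5f}")
print(f"worst error after 8-bit rounding: {total_error:.5f}")
```

The table reproduces the curve exactly at its own entries and drifts between them; a real profile does the same thing in several dimensions at once, which is why an estimate of the resulting error distribution would be useful.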

There are no published estimates of the magnitude of the variability from these contributors. In our scenario we build two profiles: one from TR 001 data and one from data measured from the proofing system. We then apply these profiles to data in a CMM or build a device link profile and apply that to the data. (A device link profile is a special case where the transforms from two profiles are combined to create a single set of transforms that may also have added capabilities such as preserving the characteristics of the black printing channel, etc.)

The ICC needs to be asked to provide estimates of both these sources of variability.
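The idea of combining two transforms into one, as a device link profile does, can be sketched with a deliberately simplified Python example. It assumes a single ink channel and two invented models (one standing in for the reference printing condition, one for the proofer) that each map an ink value to a lightness value. Real profiles work on four channels and measured data; the functions and constants here are hypothetical.

```python
def reference_lightness(ink):
    """Hypothetical stand-in for the reference profile: ink value (0..1) to L*."""
    return 100.0 - 60.0 * ink ** 0.8


def proofer_lightness(ink):
    """Hypothetical stand-in for the proofer profile: ink value (0..1) to L*."""
    return 100.0 - 70.0 * ink


def proofer_ink_for(lightness):
    """Inverse of the hypothetical proofer model: L* to proofer ink value."""
    return (100.0 - lightness) / 70.0


def two_step(ink):
    """Apply the reference transform, then the inverse proofer transform."""
    return proofer_ink_for(reference_lightness(ink))


# "Device link": the same two-step mapping, precomputed once into a single table
# indexed by 8-bit file values.
LINK_TABLE = [two_step(value / 255) for value in range(256)]


def device_link(file_value_8bit):
    """Look up the proofer ink value for an 8-bit file value in one step."""
    return LINK_TABLE[file_value_8bit]


# The proofer, driven through the link, should simulate the reference condition.
for value in (0, 64, 128, 255):
    simulated = proofer_lightness(device_link(value))
    print(value, round(simulated, 2), round(reference_lightness(value / 255), 2))
```

In this toy case the link reproduces the reference exactly because both models are simple formulas; with profiles built from measured data, each step carries its own modeling error.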

The Bottom Line

The bottom line is that we have a good handle on the sources of variability, but do not have any idea about their magnitude. From statistics we can probably predict that the way to sum their contributions (when we know what they are) is to say that the square root of the sum of the squares should not exceed our allowable tolerance. But we also have to determine a metric that allows us to predict a match, which is our allowed tolerance.
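To make the square-root-of-the-sum-of-the-squares idea concrete, here is a minimal sketch in Python. It assumes the seven sources are independent, which is the condition under which adding them in quadrature is appropriate. Every magnitude in the budget, and the tolerance itself, is a made-up placeholder, because the real values are not yet known.

```python
import math


def combined_variability(components):
    """Root-sum-of-squares of independent variability contributions."""
    return math.sqrt(sum(value ** 2 for value in components))


# Placeholder magnitudes in delta E units, one per source of variability.
# These numbers are hypothetical and serve only to illustrate the arithmetic.
budget = {
    "1 measurement of characterization target": 0.4,
    "2 proofer profile modeling": 0.5,
    "3 TR 001 profile modeling": 0.5,
    "4 CMM computation": 0.2,
    "5 proofer variability": 0.8,
    "6 measurement of proof target": 0.4,
    "7 measurement of press sheet target": 0.4,
}

ALLOWED_TOLERANCE = 2.0  # hypothetical tolerance, also in delta E units

total = combined_variability(budget.values())
within_tolerance = total <= ALLOWED_TOLERANCE

print(f"combined variability: {total:.2f} delta E, within tolerance: {within_tolerance}")
```

One consequence of summing in quadrature is that the largest contributor dominates the total, so reducing the biggest source pays off far more than trimming the small ones.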

How Good Does the Match Have to Be?

We have identified the major sources of variability, which at this point are poorly quantified, and even suggested some activities that may give us better estimates. However, the bottom line is: how good does it have to be?

Unfortunately, we do not know!

Some groups have suggested that if we use an IT8.7/3 target or an ECI 2000 target and have an average delta E (metric distance in CIELAB space between two points) between the proof and print of 2 and a maximum of 5 or 6, that is good enough. Others have pointed out that these targets do not sample color space equally. Because of the large numbers of patches in the IT8.7/3 or ECI 2000 target that call for black ink, the data set is biased toward the darker, less chromatic colors.
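The suggested average-and-maximum test is simple to express in code. The Python sketch below assumes the CIE 1976 delta E (distance in CIELAB) and uses limits of 2 for the average and 5 for the maximum; the function name and its default limits are hypothetical, and the check says nothing about whether such limits actually predict a visual match.

```python
import math
import statistics


def passes_criterion(proof_lab, print_lab, mean_limit=2.0, max_limit=5.0):
    """Check the average and maximum delta E between matching proof and print patches."""
    differences = [math.dist(p, q) for p, q in zip(proof_lab, print_lab)]
    return statistics.fmean(differences) <= mean_limit and max(differences) <= max_limit


# Hypothetical three-patch example: small differences everywhere, so it passes.
proof = [(50.0, 0.0, 0.0), (70.0, 20.0, -10.0), (30.0, -5.0, 15.0)]
press = [(50.5, 0.5, 0.0), (69.0, 21.0, -10.5), (30.0, -4.0, 16.0)]
print(passes_criterion(proof, press))
```

Because every patch counts equally in the average, a target with many dark, low-chroma patches lets those colors dominate the result, which is the sampling bias noted above.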

From the photographic industry, we know color differences in the paper, or Dmin area, of as little as 0.5 delta E can be objectionable.

The CIE has done a lot of work in the area of color difference calculation. Unfortunately, virtually all the work has been concentrated on the detectability of differences in large patches under D65 illumination using the 10-degree observer. The textile and fabric industry has been the motivating force for this work. What we are concerned about is acceptability of differences in complex images viewed under D50 and assuming a 2-degree observer. A whole different ball game.

Technical Committee 8-02 of CIE Division 8 was set up to study the issue of color differences in complex images. It is not an easy task. To date they have developed some metrics to relate color and spatial effects and some suggested studies that will lead to more understanding, but no answers.

CGATS/SC3/TF1 has been looking at some of the SWOP and GRACoL proofing system evaluations, but has not yet been able to derive correlations between acceptability and delta E.


What's Next?

Is it hopeless? Not at all.

We think we know what has to be determined. We know some of the groups that are in the best position to find the answers. We just have to find a way to all pull in the same direction and share the necessary knowledge.

Some Proposed Assignments

Let's pretend that all of the groups that we have mentioned were part of some larger organization and we could assign tasks to them individually or in groups. I would suggest that the following set of assignments might get us moving in the right direction and would provide some working tolerances.

ICC: I would assign the ICC the task of providing an estimate of the average error (and associated statistics about the error distribution) between characterization data and data predicted by colorimetric transforms in a typical CMYK profile. I would also ask them to provide an estimate of the error (and associated statistics about the error distribution) associated with typical CMM processing of transform data (variables 2, 3, and 4).

ISO/TC130: I would ask TC130 to develop standard tests to measure the within-sheet and between-sheet variability of a proofing system such that an estimate could be provided of the expected variability between a sheet used for characterization and a sheet printed sometime in the future.

It would be beneficial if TC130 also were to develop standardized tests for short- and longer-term image stability. This could build on the image-keeping work of TC42/WG5 but should focus on issues related to the time required after printing to reach a stability plateau and the length of this plateau (with very tight tolerances on allowable change) as a function of storage and viewing conditions. Here, long term is weeks to months vs. the years typically studied by TC42.

The individual device manufacturers would need to conduct tests based on these standards and report appropriate data for their systems. These are all part of variable 5.

Industry Acronyms Cited in This Article

ANSI: American National Standards Institute, the industry organization that coordinates standards development in the United States and U.S. involvement in ISO and IEC.

ASTM International: American Society for Testing and Materials.

BSI: British Standards Institution, the UK's national standards body, which also represents the UK in international standards making.

CGATS: Committee for Graphic Arts Technologies Standards, the ANSI committee responsible for U.S. standards for the printing and publishing industry.

CIE: Commission Internationale de l'Eclairage, also known as International Commission on Illumination, an organization devoted to international cooperation and exchange of information on matters relating to the science and art of lighting.

CMM: Color Management Module, the name given to the computational engine that applies transforms to data.

ECI: European Color Initiative, a group dedicated to advancing media-neutral color data processing in digital publishing systems.

FOGRA: Graphic Technology Research Association, the German national printing research organization.

GRACoL: General Requirements for Applications in Commercial Offset Lithography, a committee of the IDEAlliance.

ICC: International Color Consortium, develops color management specifications.

IFRA: IFRA deals with all issues related to production of publications in general, but is primarily focused on newspaper printing.

IPA: The Association of Graphic Solutions Providers.

IT8.7/3: IT8 standard, "Graphic technology - Input data for characterization of 4-color process printing."

ISO/TC42: Technical Committee 42, Photography, ISO committee for photographic industry standards.

NPES: The Association for Suppliers of Printing, Publishing and Converting Technologies, acts as secretariat for several standards organizations.

PIA/GATF: Printing Industries of America/Graphic Arts Technical Foundation.

PPA: Professional Photographers Association in the United Kingdom.

SC: Subcommittee, a subgroup within a TC (Technical Committee).

SICOGIF: Syndicat National des Industries de la Communication Graphique et de l'Imprimerie Françaises, the French National Association of Graphic Communication and Printing Industries.

SNAP: Specifications for Newspaper Advertising Production Committee; its goal is to improve reproduction quality in newsprint production and provide guidelines for exchange of information.

SWOP: Specifications for Web Offset Publications, focuses on specification of parameters necessary to ensure consistent quality of advertising in publications.

TR 001: CGATS standard, "Graphic technology - Color characterization data for Type 1 printing."

TC130: Technical Committee 130, Graphic technology, the ISO standards committee responsible for standards for the printing and publishing industry.

WG: Working Group, a subgroup within an SC or a TC.

CIE, TC130, or ASTM: A valid estimate of the uncertainty of colorimetric measurements is critical for both the direct comparison of measurements of proofs and prints and for development of characterization data. Such estimates need to have provisions for measurements made with a single instrument, made with multiple instruments of the same model, and made with multiple instruments of different models (but same geometry).

It is not clear who is in the best position to provide the testing methodology and coordinate testing of this type. Some combination of CIE, TC130, and Committee E-12 of ASTM comes to mind. The assistance and cooperation of the spectrophotometer manufacturers is also critical to provide valid estimates of the variability attributable to colorimetric measurement (variables 1, 6, and 7).

CIE Division 8 (with help from TC130 and industry groups): The most critical need is to develop a colorimetric criterion that can be used to determine an acceptable match between reproductions (proof-to-print, proof-to-proof, etc.) of complex images. While there may be a necessity to initially include the color distribution and spatial characteristics of the image content, ideally the acceptability criterion needs to be content independent.

CIE TC8-02 has spent the last several years studying the issues and has completed a comprehensive literature survey that included factors that affect the evaluation of color differences of digital images. This information should be sufficient to aid the definition of further experiments to investigate the use of current color-difference formulae, and suggest further developments of color-difference formulae or targets specifically for application to complex images. However, there are no answers yet.

There are also a number of industry groups in the United States and worldwide that are trying to develop ways to certify proofing systems, i.e., the ability of a proofing system to replicate a specified printing condition. The dominant industry groups involved in such activities are SWOP, GRACoL, SNAP, IFRA, PPA (United Kingdom), SICOGIF (France), and ECI/FOGRA.

In addition, groups like IPA, GATF, IFRA, etc., routinely have proofing shootouts and industry competitions/comparisons. These groups depend largely on visual comparisons. In addition, ANSI CGATS is studying metrology techniques that might assist in such certification processes.

Unfortunately, to date, there has not been extensive interaction between the groups evaluating proofing systems and those looking at the comparison problem from a more theoretical perspective. Although each could learn from the others' work, it would add complexity to both tasks.

Our most critical issue is to determine the metric that predicts the probability of a visual match between images. We must find a way to feed information (and collect the additional information required) from the groups doing ongoing comparisons into the theoretical work being done by the color community.


So Who Is in Charge? Or Where Do We Go from Here?

The short answer is that there is no umbrella group that can take charge. One possible way to proceed is to call a meeting that involves all of the potential players, share knowledge, and propose some cooperative efforts.

Such a meeting is being arranged. It tentatively will be held as part of the TC130 WG3 meeting on Friday, May 13, 2005, at BSI in London. This meeting is being arranged by Dr. Fred Dolezalek (dolezalek.f@freenet.de), who is chairman of TC130, and Craig Revie (craig.revie@ffei.co.uk), who serves as chairman of the ICC.

This is also an open invitation to participate in the discussions around this issue and/or commit your group to be involved. If you want to be involved, contact me at mcdowell@Kodak.com or mcdowell@npes.org (or Fred or Craig) and I will see that your interest is forwarded to the appropriate people.

If you would like to discuss the perspectives proposed, or suggest changes to this scenario, please do not hesitate to contact me so that together we can help find the best way forward.

M a rch / A p r i l 2 0 0 5 I PA B U L L E T I N

29

Das könnte Ihnen auch gefallen

- Taga 2007 Neutral Scales Press CalibrationDokument9 SeitenTaga 2007 Neutral Scales Press CalibrationQUALITY CONTROLNoch keine Bewertungen

- Overview Six Sigma PhasesDokument3 SeitenOverview Six Sigma Phaseshans_106Noch keine Bewertungen

- Computer Predictions With Quantified Uncertainty, Part IIDokument4 SeitenComputer Predictions With Quantified Uncertainty, Part IIYixuan SunNoch keine Bewertungen

- CE802 ReportDokument7 SeitenCE802 ReportprenithjohnsamuelNoch keine Bewertungen

- Multimodal Biometrics: Issues in Design and TestingDokument5 SeitenMultimodal Biometrics: Issues in Design and TestingMamta KambleNoch keine Bewertungen

- Modelado y CalibraciónDokument40 SeitenModelado y Calibraciónandres_123456Noch keine Bewertungen

- Project 2 Factor Hair Revised Case StudyDokument25 SeitenProject 2 Factor Hair Revised Case StudyrishitNoch keine Bewertungen

- Validation and Updating of FE Models For Structural AnalysisDokument10 SeitenValidation and Updating of FE Models For Structural Analysiskiran2381Noch keine Bewertungen

- ASME & NAFEMS - 2004 - What Is Verification and Validation - SCDokument4 SeitenASME & NAFEMS - 2004 - What Is Verification and Validation - SCjadamiat100% (1)

- IVT Network - FAQ - Statistics in Validation - 2017-07-05Dokument2 SeitenIVT Network - FAQ - Statistics in Validation - 2017-07-05ospina3andresNoch keine Bewertungen

- Comparison Cannot Be Perfect, Measurements Inherently Include ErrorDokument3 SeitenComparison Cannot Be Perfect, Measurements Inherently Include ErrorOlivia Ting MimieNoch keine Bewertungen

- What Is A GageDokument12 SeitenWhat Is A GageMohini MaratheNoch keine Bewertungen

- Regression ValidationDokument3 SeitenRegression Validationharrison9Noch keine Bewertungen

- L 8data Analysis and InterpretationDokument33 SeitenL 8data Analysis and InterpretationTonie NascentNoch keine Bewertungen

- Using Genetic Programming To Improve Software Effort Estimation Based On General Data SetsDokument11 SeitenUsing Genetic Programming To Improve Software Effort Estimation Based On General Data SetsNivya GaneshNoch keine Bewertungen

- How To Prepare Data For Predictive AnalysisDokument5 SeitenHow To Prepare Data For Predictive AnalysisMahak KathuriaNoch keine Bewertungen

- Bayesian EstimatorDokument29 SeitenBayesian Estimatorossama123456Noch keine Bewertungen

- Gage Linearity and AccuracyDokument10 SeitenGage Linearity and AccuracyRamdas PaithankarNoch keine Bewertungen

- HerramientasBasicas FAODokument86 SeitenHerramientasBasicas FAODenys Rivera GuevaraNoch keine Bewertungen

- Accuracy Traceabilityin Dimensional MeasDokument10 SeitenAccuracy Traceabilityin Dimensional MeasMeenakshi MeenuNoch keine Bewertungen

- Are We Doing WellDokument13 SeitenAre We Doing WellsamlenciNoch keine Bewertungen

- Researchpaper On The Estimation of Variance Components in Gage R R Studies Using Ranges ANOVADokument15 SeitenResearchpaper On The Estimation of Variance Components in Gage R R Studies Using Ranges ANOVALuis Fernando Giraldo JaramilloNoch keine Bewertungen

- Data Mining CaseBrasilTelecomDokument15 SeitenData Mining CaseBrasilTelecomMarcio SilvaNoch keine Bewertungen

- Data Warehouse Testing - Approaches and StandardsDokument8 SeitenData Warehouse Testing - Approaches and StandardsVignesh Babu M DNoch keine Bewertungen

- Assistant Gage R and RDokument19 SeitenAssistant Gage R and ROrlando Yaguas100% (1)

- P-149 Final PPTDokument57 SeitenP-149 Final PPTVijay rathodNoch keine Bewertungen

- Stat QuizDokument79 SeitenStat QuizJanice BulaoNoch keine Bewertungen

- Uncertainties and CFD Code Validation: H. W. ColemanDokument9 SeitenUncertainties and CFD Code Validation: H. W. ColemanLuis Felipe Gutierrez MarcantoniNoch keine Bewertungen

- Applications of Simulations in Social ResearchDokument3 SeitenApplications of Simulations in Social ResearchPio FabbriNoch keine Bewertungen

- Measurement System AnalysisDokument15 SeitenMeasurement System AnalysisIndian MHNoch keine Bewertungen

- Malhotra Im Ch09Dokument13 SeitenMalhotra Im Ch09sampritcNoch keine Bewertungen

- MSA Training Material - 18 - 04 - 2018Dokument10 SeitenMSA Training Material - 18 - 04 - 2018Mark AntonyNoch keine Bewertungen

- Up LeeDokument7 SeitenUp LeeGiovanni1618Noch keine Bewertungen

- Measurement Theory Process Mapping Measurement Errors Sampling PlansDokument4 SeitenMeasurement Theory Process Mapping Measurement Errors Sampling PlansTommaskNoch keine Bewertungen

- Cosc2753 A1 MCDokument8 SeitenCosc2753 A1 MCHuy NguyenNoch keine Bewertungen

- Vva 02-07Dokument51 SeitenVva 02-07asaksjaksNoch keine Bewertungen

- Analysis of Simulation DataDokument33 SeitenAnalysis of Simulation DatacrossgNoch keine Bewertungen

- Assumption of NormalityDokument27 SeitenAssumption of Normalitysubhas9804009247Noch keine Bewertungen

- Analysis of Common Supervised Learning Algorithms Through ApplicationDokument20 SeitenAnalysis of Common Supervised Learning Algorithms Through Applicationacii journalNoch keine Bewertungen

- Some Basic Qa QC Concepts: Quality Assurance (QA) Refers To The Overall ManagementDokument8 SeitenSome Basic Qa QC Concepts: Quality Assurance (QA) Refers To The Overall ManagementUltrichNoch keine Bewertungen

- Flow Measurement Uncertainty and Data ReconciliationDokument29 SeitenFlow Measurement Uncertainty and Data ReconciliationJahangir MalikNoch keine Bewertungen

- Ch7svol1 PDFDokument27 SeitenCh7svol1 PDFRisdiyanto Edy SaputroNoch keine Bewertungen

- Model Selection Using Correlation in Comparison With Qq-PlotDokument5 SeitenModel Selection Using Correlation in Comparison With Qq-PlotSowmya KoneruNoch keine Bewertungen

- Reference Vs Consensus ValuesDokument7 SeitenReference Vs Consensus ValuesChristian Saldaña DonayreNoch keine Bewertungen

- Quality ControlDokument10 SeitenQuality ControlSamir Moreno GutierrezNoch keine Bewertungen

- Project Assessment GENERAL INSTRUCTIONS: Please Carefully Read The Below InstructionsDokument11 SeitenProject Assessment GENERAL INSTRUCTIONS: Please Carefully Read The Below InstructionsBhanu ChaitanyaNoch keine Bewertungen

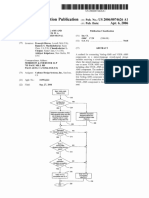

- US20060074626A1Dokument17 SeitenUS20060074626A1Tathagato BoseNoch keine Bewertungen

- Pwil CorporatebrochureDokument32 SeitenPwil Corporatebrochureasr2972Noch keine Bewertungen

- LPD Procedure CompleteDokument1 SeiteLPD Procedure CompleteimupathanNoch keine Bewertungen

- Lista de AlarmesDokument7 SeitenLista de AlarmesMarcos AlexandreNoch keine Bewertungen

- Iso 22705 2 2023Dokument13 SeitenIso 22705 2 2023Sushanth NutakkiNoch keine Bewertungen

- Phase II CatalogDokument84 SeitenPhase II CatalogTYO WIBOWONoch keine Bewertungen

- 290 AIS Best Practice Guide For Top Fixings 1Dokument32 Seiten290 AIS Best Practice Guide For Top Fixings 1easvc2000Noch keine Bewertungen

- Corrosion TestsDokument3 SeitenCorrosion TestsbalakaleesNoch keine Bewertungen

- RADIUS Setup: Figure 2. Configuring The RADIUS SettingsDokument30 SeitenRADIUS Setup: Figure 2. Configuring The RADIUS SettingsvenkataNoch keine Bewertungen

- Arduino Pellet Boiler Data Logger v1 My Nerd StuffDokument36 SeitenArduino Pellet Boiler Data Logger v1 My Nerd StuffmyEbooks0% (1)

- Duties and Responsibilities of A Quality EngineerDokument1 SeiteDuties and Responsibilities of A Quality EngineerRichard Periyanayagam100% (1)

- GROUPDokument17 SeitenGROUPkoib789Noch keine Bewertungen

- Need of Health & Safety in Social WorkplaceDokument20 SeitenNeed of Health & Safety in Social WorkplaceOnline Dissertation WritingNoch keine Bewertungen

- Routing Policys Juniper MXDokument12 SeitenRouting Policys Juniper MXSandra GonsalesNoch keine Bewertungen

- PIAB General Catalogue 2012 enDokument531 SeitenPIAB General Catalogue 2012 enMarco BernardesNoch keine Bewertungen

- 15 - Statistical Quality ControlDokument82 Seiten15 - Statistical Quality ControlGanesh MandpeNoch keine Bewertungen

- 868703-B21 Datasheet: Quick SpecDokument3 Seiten868703-B21 Datasheet: Quick SpecEdgar ChicuasuqueNoch keine Bewertungen

- J1587 Fault Codes: System OverviewDokument13 SeitenJ1587 Fault Codes: System OverviewEbied Yousif AlyNoch keine Bewertungen

- A2z Telugu Boothu Kathalu 17 - CompressDokument69 SeitenA2z Telugu Boothu Kathalu 17 - Compressnagendra100% (1)

- Iso TR 20461 2023Dokument11 SeitenIso TR 20461 2023Jhon David Villanueva100% (1)

- Burnertronic Bt300: Quick Reference For EndusersDokument48 SeitenBurnertronic Bt300: Quick Reference For Endusersjesus paezNoch keine Bewertungen

- Recci Catalog 20230705Dokument323 SeitenRecci Catalog 20230705CrosDigital FxNoch keine Bewertungen

- Securecrt ReadmeDokument6 SeitenSecurecrt ReadmemmontieluNoch keine Bewertungen

- Monitoring Dell Hardware With HP Systems Insight Manager PDFDokument17 SeitenMonitoring Dell Hardware With HP Systems Insight Manager PDFgflorez2011Noch keine Bewertungen

- IPTV PresentationDokument21 SeitenIPTV PresentationHiren ChawdaNoch keine Bewertungen

- 08-4940-00037 - 2 - Gamma With VMCD 4.1Dokument23 Seiten08-4940-00037 - 2 - Gamma With VMCD 4.1weekesNoch keine Bewertungen