Evaluation Past, Present and Future (Cupitt and Boase 2015)

EVALUATION PAST, PRESENT AND FUTURE

1 September 2015

To celebrate our 25th birthday, NCVO Charities Evaluation Services' Sally Cupitt and Rowan Boase

reflect on the last 25 years of evaluation in the UK voluntary sector, and present a vision for the next

quarter of a century.

Do you remember 1990? Cast your mind back, if you can. Nelson Mandela walked out of prison,

the Cold War was coming to an end and Margaret Thatcher resigned. And there were concerns

about the state of the voluntary sector and calls for it to be more efficient and business-like.

Charities Evaluation Services (CES) was set up to improve the sector's ability to monitor and

evaluate itself while maintaining its distinctive values.

The twenty-five years since then have seen a lot of change. Voluntary and community sector

organisations are now seen as partners in delivering public services, the culture of accountability is

well-established and there is a drive to place outcomes and impact at the heart of the sector.

Since 1990, CES has trained tens of thousands of organisations and disseminated messages

through a range of publications. We have used our deep knowledge of the sector to develop

accessible tools and approaches. We have also carried out hundreds of independent evaluations,

using the practical learning from these to inform how we work with our clients and to develop our

information and training.

We have changed too. Following our merger with NCVO last year, we are now NCVO Charities

Evaluation Services: different, stronger, but very much still here and with the same passion. As

we blow out the candles on our 25th birthday cake, we reflect on what has been learned over our

first quarter of a century and look ahead to the next 25 years. We'll take a whirlwind tour of CES's

history, share some of our most useful learning, and set out where we think the sector needs to go

next.

So help yourself to a piece of birthday cake, take a journey into CES's memory, and join us as we

set out to support voluntary organisations in the next 25 years to achieve even more.

The story so far

1. The early 1990s: becoming more accountable

In the early 1990s, many voluntary sector organisations were losing their core funding in the new

contract economy and needed to give a better account of themselves. Before then, they weren't

exposed to much public scrutiny and there was little demand on organisations to monitor their

performance, beyond providing numbers of people who came through the door. Where

evaluations were carried out, they were often commissioned by funders and were carried out by

external evaluators. Concerns were expressed about moves to police the sector and, with little

internal ownership of evaluations and their findings, the result was that reports could be found

gathering dust in filing cabinets. We needed to change this, to develop an understanding of how

monitoring and evaluation could benefit organisations themselves and their users.

CES's focus on self-evaluation meant that organisations themselves would need to gain the skills

and the tools to assess their own achievements and to learn through the process. Our first step

was to develop practical methods that could be integrated into everyday work routines. We

trained around a cycle of evaluation, with a simple five-step approach that emphasised clarity

about organisational aims, based on an understanding of the needs of users, and getting

agreement at the start about the information that was important to collect. We emphasised the

potential of evaluation as a reflective process, delivering learning, and being an important part of

organisational management.

We had our roots both in the voluntary sector and in a broader evaluation culture, so we were in a

unique position to translate and transmit technical skills to organisations of all sizes. Now that

CES models and some of the basic concepts, techniques and tools have been so widely taken up,

it's difficult to remember how new this all was at the time. The simple tool that made a real

difference from the late 1990s, and that we still use in some of our training today, is the CES

planning triangle, which helped teams to take their first steps into outcomes thinking. With aims

clear, outcomes could then be identified and indicators selected, to form the basis of a monitoring

and evaluation plan.

The CES planning triangle encouraged organisations to plan backwards from planned aims, the

difference they wanted to make. In the early days of developing CES training, Sally Cupitt

remembers people really struggling with the distinction between outputs and outcomes; this is a

challenge that remains for some.

One development was that a new evaluation

partnership emerged between our external

evaluators and programme staff, a pragmatic

and effective response to limited budgets.

We began to work with managers and

frontline staff at the start, helping to put in

place a sound monitoring framework and

systems. This remains a key component of

our work today.

2. Mid-1990s to early 2000s: it's the difference you make that counts

CES did early work with infrastructure organisations in 2001 and 2002 on developing shared

measurement tools and outcomes monitoring systems. There were also important wider

developments at the time; there was pioneering work on measuring outcomes by alcohol and

homelessness agencies, with the development of tools such as the SOUL record and the

Outcomes Star. By the mid-1990s, information about outcomes had become part of Charity

Commission guidance and regulatory requirements and in 2006 the Office of the Third Sector

increased the focus on benefits for users in its third sector Action Plan.

In 1997, CES produced the first edition of PQASSO, the first quality system designed specifically

by the voluntary sector for the sector, destined to become the sector's leading quality system,

with a dedicated quality area on monitoring and evaluation. The focus on outcomes in the sector

was such that by the second edition of PQASSO we made sure that this was reflected

throughout the standards, and with a specific quality area for organisational results.

We worked with high-profile funders to develop outcomes approaches. Our booklet, Your Project

and its Outcomes, written for the then Community Fund (now BIG) in 2003, had a print run of

50,000, with a new edition later developed for the Big Lottery Fund. This document is still well

read today. The CES National Outcomes Programme, which ran from 2003 to 2009, cascaded

an outcomes approach throughout the nine England regions to frontline organisations. As the

recession hit the sector, we urged organisations to use an outcomes approach to be more needs-led, to be more accountable and credible and to build relationships with funders and

commissioners.

But for many organisations there were real challenges in developing effective outcomes

monitoring systems, and there was some resistance to what was seen as an additional

organisational burden. While we supported organisations to design simple data collection tools, we

also encouraged them to consider off-the-peg tools, but only where they fitted well with the work

they were doing and their user group, with their identified outcomes and indicators, and with their

underlying values and ways of working. There was also an emerging interest in using interactive,

creative tools for working with children and young people and other specific groups and

participatory methods, more commonly used at the time by international NGOs. Community

organisations in particular started using visual evaluation methods, such as photos and video

diaries, to provide richer evaluation evidence.

3. Around 2004 to 2010: monitoring themselves to death

As the new millennium progressed and the sector became increasingly involved in delivering

public services, performance management and performance improvement were high on the

agenda. CES was able to take the message about outcomes further when we had government

funding for the Performance Hub and the National Performance Programme from 2005 to

2011, increasing our reach and our capacity to work in a more direct way with infrastructure

organisations.

Some organisations found themselves accountable to a range of different regulatory bodies and

funders with different information demands. In 2008, we reported back to government on the

findings from what was the first large-scale research study into monitoring and evaluation in the

UK voluntary sector (Accountability and Learning, Ellis, 2008). One key recommendation was

that monitoring should be proportionate, taking into account the level of funding, and the size and

resources of the funded organisation. People had told us that they were monitoring themselves

to death, in many cases unable to use the data they were generating because so much time was

spent collecting it. We subsequently worked with the National Audit Office on its 2009

guidance; this message about the need for monitoring and evaluation to be proportionate has

since been taken up more widely as a funding principle, and is currently urged by the Inspiring

Impact initiative of which we are a part.

Our training for funders and commissioners, and the training and capacity building we do for their

grantees, is an important part of our work. Our 2001 publication Does Your Money Make a

Difference? (now updated) set out monitoring and evaluation principles for funders and was

republished as a second edition in 2010, stressing the importance of proportionality, realistic

monitoring and evaluation budgets, and the need to develop sector skills to provide better

evidence, and partnership in learning. One of the projects that we developed jointly with partners

such as Charities Aid Foundation and the Association of Charitable Foundations was

www.jargonbusters.org.uk so that funders and funded could speak the same simple and accessible

language.

By 2008, we had found that self-evaluation was increasing in currency and legitimacy. New

software was providing opportunities for improved data analysis and reporting, with potential time

savings and improved ease of reporting. However, we also posted warnings about the quality of

evidence that was being provided. It was clear that there was a lot of work still to be done to build

evaluation skills and improve data management in the sector.

4. Around 2010 to 2015: emphasis on social value

As we reached the end of the 2000s, the public and voluntary sectors were increasingly affected

by the pressure of tighter budgets. This brought with it a new emphasis on demonstrating

economic measures of value, the government leading with messages about the need for both the

public and voluntary sectors to demonstrate both social benefits and savings for the public purse.

Earlier there had been an interest in social audit, and by the early 2000s there was a new

methodology in town: social return on investment (SROI), which puts a monetary value on the

social and environmental benefits of an organisation relative to a given amount of investment.

Initially quite niche, SROI really came to the fore in 2008-11 with the Cabinet-Office-funded

Measuring Social Value programme, of which we were a part, which aimed to make SROI

accessible to voluntary sector organisations.

We welcomed the value that SROI placed on softer social outcomes, and its emphasis on

stakeholder involvement. But we have always been careful to advise caution where necessary,

ensuring that organisations are aware of the strengths and limitations of any approach. For

example, with SROI, the method was difficult to apply across some of the sector's work, such as

campaigning or infrastructure bodies. We also had concerns that the results of SROIs should not

be used to make comparisons, an attractive prospect for funders as SROI had potential as a tool to assess value

for money. SROI is a useful addition to the evaluation toolbox, but our focus remains on

supporting organisations to evidence their outcomes, the foundation on which more technical

evaluation approaches, such as SROI, must rest.

One concern that we raised in our publications and training was that the focus on outcomes

should not exclude attention to process, and indeed that it was important to track how projects and

programmes were implemented, how things were done and the overall context, if there were to be

any real understanding about what worked, for whom, and how and why outcomes were achieved.

One approach that is gaining ground is theory of change, which shows the links between activities

and short-term change, steps along the way and longer-term outcomes, connecting to a bigger

picture of social change. This had been introduced to the UK from America in the late 1990s. The

CES planning triangle was a simple, early theory of change which had become popularised

through our training and publications, but our 2008 research report advocated a more developed

use of theory of change as a tool for some organisations to plan for and to track outcomes and

impact.

A fully-developed theory of change approach remains complex and can be time-consuming, but

we are working with more and more organisations to use the model; we are sure that this sharp

edge of evaluation practice will lead the sector as a whole towards a more strategic, reflective

approach and better evidence.

The present day: what we've learned

At the age of 25, CES is now a grown-up organisation. We have learned and reflected over the

years and would like to take the opportunity to share eight key messages with you.

1. Slow down and plan

It is ever the tendency for organisations new to evaluation to rush straight to collecting data. Our

message is to slow down and recognise that planning is an essential first stage in getting good

quality evidence about the difference you are making. You need to step back and bring internal

and external stakeholders into the process of reviewing your aims, being clear about outcomes,

what you are going to measure and how. Planning tools such as our CES planning triangle, a logic

model, or theory of change can be a helpful way to start.

Recognise that bringing in an outcomes approach can involve massive culture change and can

sometimes take a number of years. It takes time to set up new systems, first of all building

engagement from management and other stakeholders. Often there are other skills gaps to be

addressed too, for example around IT and data management. For some established organisations,

introducing outcomes and impact thinking can involve radically changing how they think about

themselves, their work and their clients.

2. Be realistic

Quite commonly funders still ask for too much information and voluntary organisations

themselves may be over-ambitious, not appreciating the amount of work involved. The key is to

prioritise the most important outcomes and the information that will be most helpful to evidence

whether change occurred. Sometimes there are choices to be made about the rigour of the

evidence you might like in an ideal world, and what is most useful to meet your own needs and

those of your funders within your resource limitations.

3. Horses for courses

When you step back to plan, there are pragmatic decisions to be made, for example, about the

information needed, about priorities, what can be done with the resources and skills available. This

process should lead your choice of methods. One organisation we worked with had been advised

to collect before and after data on all the one-off calls to its advice helpline. But some calls were

only 10 minutes long so the process was irritating for the caller, excruciating for the adviser, and

unlikely to provide meaningful outcomes data. Make sure you choose methods that answer your

evaluation questions and are appropriate for the intervention.

4. Be creative and innovative

Don't be afraid to think creatively about how to collect data and how to engage with your users

and other stakeholders. There is now a plethora of useful and available resources that will suggest

ways you can engage people and gather valuable evidence to supplement more standard

methodologies. Evaluation should also be seen as a useful partner to piloting and developing

innovative practice and disseminating its results.

5. Measure the right level of change

Gradually a common language is being built in the sector. Make sure you have a shared

understanding throughout your organisation and with your funders and commissioners about what

you mean by the terminology. The technical distinction between outcomes and impact is really

worth knowing about as these different levels of change can require different forms of evaluation.

Whereas outcomes are direct changes resulting from your work, impact is the wider, longer-term change that you can link back to your own services, campaigning or other intervention.

Measuring true impact can be complex, requiring rigorous methods and analysis, and it may not

be appropriate or realistic for your organisation. Simply measuring outcomes that are more likely

to be the direct result of your work and less influenced by other factors may be all you need to do.

6. Make links between outcomes and your work

Get the best evidence you can to make a strong link between your intervention and the outcomes

and longer-term change. In the last few years, the voluntary sector has been asked to consider

the importance of experimental or scientific methods, such as randomised controlled trials (RCTs). RCTs

are regarded by some as providing the best way of measuring impact and establishing causality;

however, they are still rare in the sector as they involve rigorous statistical analysis and can be

time-consuming and costly to carry out. It's well worth knowing about scientific methods, but

there are also qualitative ways of making a causal link, working from a theory of change, testing

out alternative explanations of why change happened and submitting your data to careful analysis.

7. Engage with others

Bring internal and external stakeholders into the process; they are your partners in evaluation. All

staff and volunteers need to understand the part they play; it's important for them to understand

how monitoring and evaluation will help their own work and benefit their users. They may have to

learn new skills and adjust how they work, so they should be involved early on. Your evaluation

planning will be better for their involvement.

It's also valuable to involve wider stakeholder groups, particularly in the planning stage and when

you are thinking about outcomes. Decide whether users will be your full collaborators or how they

might be otherwise involved in setting outcomes and planning and carrying out data collection.

Remember to share your findings with the people who have given the data, and the people who

have collected it. This is respectful, increases the chance of their producing data again in future,

and it enables staff to use the findings in their own work.

8. Learn from both failure and success

Make sure that your enquiry remains open and that you proactively recognise and report bad

news as well as good; it will be a sign of learning. It's enormously helpful that positive evaluation

findings can be used for PR, but we need to get to the situation where organisations trust enough

to share learning from failure as well as success. Measurement of social value can sometimes set a

contrary message as it emphasises positive change; developments such as payment by results may

also work against a learning culture. But it's important to learn from the barriers to change and any

negative outcomes as this can only help you to improve what you do.

The future: our vision

The vision that has supported CES is that of a stronger sector, better meeting needs and, indeed,

we have seen how organisations have used findings from their monitoring and evaluation to

improve what they do, highlight their achievements and demonstrate their value. New challenges

are emerging all the time and there is a lot more work to be done over the next 25 years. We

intend to continue in the front line, taking a lead in voluntary sector evaluation over the coming

years.

We invite you to share in our vision for the future, captured here in eight key points. We look

forward to working closely with you again as partners in the development of monitoring and

evaluation to make the voluntary sector the best it can be.

1. Outcomes and impact are central

The difference organisations want to make will be evident in plans and strategy. Trustees, staff and

volunteers will all be able to tell people clearly about their organisational or project outcomes and

impact and know how their work contributes to it. Known outcomes will guide the work with users

and partners, and clients and funders will buy in to them. Monitoring and evaluation activities will

be an integral part of both planning and the work itself.

2. The smallest organisations can report their outcomes

Smaller organisations will not be forgotten in our drive to understand the latest cutting-edge

approaches and tools. Most of our sector is made up of small organisations, with over three-quarters having an annual income of less than £100,000 and almost half with an income of less than

£10,000. [1] They will be able to access the right sort of support, including free and online

resources, to develop simple but effective monitoring and evaluation systems. The information

they provide will be set at a realistic and appropriate level, building on the data they collect in their

day to day management. They and their funders will be able to see how their work contributes to

wider social outcomes.

3. Real-time data drives continuous development

Final evaluation reports at the end of a project will continue to be useful, but project managers will

increasingly be able to speed up the planning-monitoring-evaluation-reflection learning cycle to

provide more responsive project and service development and improvement. Technology will allow

easier access to real-time data, allowing internal and external evaluators to be more agile and work

in greater partnership with managers, providing shorter, more regular reports. The formative role

of evaluation will be embraced, and will become central, with time and resources allowed for

reflective decision making.

[1] NCVO Almanac, 2015

4. The sector shares good practice and learning

The voluntary sector will continue to develop its monitoring and evaluation practice and learn from

evaluation findings. There will be good quality research about what works in voluntary sector

monitoring and evaluation practice. Evaluators and others providing technical support will learn

from each other, developing a common pool of knowledge.

There will be more consistent and shared good practice in the sector more widely. This sharing and

learning culture will be more developed as sub-sectors share and learn peer-to-peer to help

face challenges and barriers. Measurement tools in the sector will continue to be developed jointly

where appropriate and shared by organisations working towards similar goals.

Voluntary sector organisations will be able to easily access reports, validated findings and open

data, for example from NPC's Data Labs. They will use these to substantiate their own theories of

change, which will reduce the need for them to carry out costly and complex primary research

themselves. Evaluation findings will stimulate funding of innovative projects and programmes as

well as established good practice.

5. Data collection and outcomes reporting are seen as core skills

Outcomes-focused monitoring and evaluation will be built into job descriptions and regarded as a

core activity. The sector will develop the standard of its outcome and impact measurement by

inducting and training its staff and volunteers routinely in its systems and in the skills needed to

perform essential tasks. Organisations will know how to access external technical help.

6. Voluntary organisations make the best use of methodologies

Organisations and their funders will have enough information about the benefits and implications

of different evaluation methods to choose appropriately in the light of the work being carried out,

information needs and available resources and skills. There will be more understanding about when

economic evaluation and the most rigorous methods of measuring impact are appropriate and

useful, and about the pragmatic considerations involved in using scientific methods. Funders,

evaluators and organisations themselves will be clear about when there will be added value from

using more technical methods. In the drive for increasing rigour, the sector will not lose what it

has gained in the development of self-evaluation, for the learning of lessons, and the use of

findings for change and improvement.

7. Qualitative methods are sharper

Organisations will get better quality data as the sector becomes increasingly skilled in using

surveys, focus groups, interviews and other qualitative methods. There will also be more rigour in

analysing and interpreting data. In particular organisations will be able to use qualitative methods

effectively to establish attribution, providing a robust method of measuring impact. The use of

theory of change will introduce a more reflective way of thinking about cause and effect, allowing

those implementing projects and services to test out assumptions about what will work and to

explore evidence for and against alternative explanations for change.

8. Funders and commissioners provide support to self-evaluation

There will be a greater understanding by funders and commissioners of outcomes and impact, and

they will place value on working collaboratively with the organisations they fund to achieve their

aims. Demands for information will have regard to the size of organisation, the nature of the

intervention and the funding provided. Monitoring and evaluation will be realistically funded,

recognising the time and resources required for good quality data collection; funding for project

evaluation will recognise the principle of a budget of between 5 per cent and 10 per cent of overall

project costs. Funders and commissioners will also support their funded organisations to build

their self-evaluation practice, actively encouraging the sharing of good practice and learning.

Thank you: here's to the next 25 years

Before we end, we urge you to look for support when you are breaking new ground. There are lots

of great free resources available that can guide you and stop you from having to reinvent the

wheel. Try www.ces-vol.org.uk and www.inspiringimpact.org for a start. If you have a budget, even

a day of consultancy support can mean avoiding weeks of frustration.

While we don't know exactly what the next 25 years will look like, we do know that CES will

continue to work with voluntary organisations and their funders, big and small, tackling challenges

and using our trusted approaches but also new techniques where useful, all to help you to make a

bigger difference for the causes you serve.

We would like to raise a glass to all those we have worked with over the last 25 years: funders

and commissioners, government departments, voluntary organisations and their users. The future

is always unknown, but the need for evaluation, learning about how you are doing and the

difference you are making, won't be going away. Thanks for being part of the journey so far and

here's to the next 25 years.

11

Das könnte Ihnen auch gefallen

- Shoe Dog: A Memoir by the Creator of NikeVon EverandShoe Dog: A Memoir by the Creator of NikeBewertung: 4.5 von 5 Sternen4.5/5 (537)

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeVon EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeBewertung: 4 von 5 Sternen4/5 (5795)

- LSF Project: Final ReportDokument98 SeitenLSF Project: Final ReportNCVO100% (2)

- LSF Project Case Study: Hoot Creative ArtsDokument1 SeiteLSF Project Case Study: Hoot Creative ArtsNCVONoch keine Bewertungen

- Health Check: A Practical Guide To Assessing The Impact of Volunteering in The NHSDokument36 SeitenHealth Check: A Practical Guide To Assessing The Impact of Volunteering in The NHSNCVONoch keine Bewertungen

- LSF Project: NCVO CES Evaluation of Learning StrandDokument17 SeitenLSF Project: NCVO CES Evaluation of Learning StrandNCVONoch keine Bewertungen

- LSF Project Bulletin: Journeys Start-Up (November 2016)Dokument3 SeitenLSF Project Bulletin: Journeys Start-Up (November 2016)NCVONoch keine Bewertungen

- Sample From The Volunteering Impact Assessment ToolkitDokument8 SeitenSample From The Volunteering Impact Assessment ToolkitNCVO100% (8)

- Use CaseDokument4 SeitenUse CasemeriiNoch keine Bewertungen

- 3D2N Bohol With Countryside & Island Hopping Tour Package PDFDokument10 Seiten3D2N Bohol With Countryside & Island Hopping Tour Package PDFAnonymous HgWGfjSlNoch keine Bewertungen

- CBIM 2021 Form B-3 - Barangay Sub-Project Work Schedule and Physical Progress ReportDokument2 SeitenCBIM 2021 Form B-3 - Barangay Sub-Project Work Schedule and Physical Progress ReportMessy Rose Rafales-CamachoNoch keine Bewertungen

- The Making of A Global World 1Dokument6 SeitenThe Making of A Global World 1SujitnkbpsNoch keine Bewertungen

- QQy 5 N OKBej DP 2 U 8 MDokument4 SeitenQQy 5 N OKBej DP 2 U 8 MAaditi yadavNoch keine Bewertungen

- BiogasForSanitation LesothoDokument20 SeitenBiogasForSanitation LesothomangooooNoch keine Bewertungen

- Sample Business ProposalDokument10 SeitenSample Business Proposalvladimir_kolessov100% (8)

- Fire Service Resource GuideDokument45 SeitenFire Service Resource GuidegarytxNoch keine Bewertungen

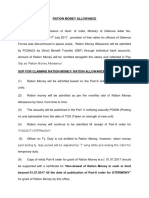

- RM AllowanceDokument2 SeitenRM AllowancekapsicumadNoch keine Bewertungen

- DSE at A GlanceDokument27 SeitenDSE at A GlanceMahbubul HaqueNoch keine Bewertungen

- Jay Chou Medley (周杰伦小提琴串烧) Arranged by XJ ViolinDokument2 SeitenJay Chou Medley (周杰伦小提琴串烧) Arranged by XJ ViolinAsh Zaiver OdavarNoch keine Bewertungen

- Cfa - Technical Analysis ExplainedDokument32 SeitenCfa - Technical Analysis Explainedshare757592% (13)

- Net Zero Energy Buildings PDFDokument195 SeitenNet Zero Energy Buildings PDFAnamaria StranzNoch keine Bewertungen

- CBN Rule Book Volume 5Dokument687 SeitenCBN Rule Book Volume 5Justus OhakanuNoch keine Bewertungen

- SKS Microfinance CompanyDokument7 SeitenSKS Microfinance CompanyMonisha KMNoch keine Bewertungen

- Packing List PDFDokument1 SeitePacking List PDFKatherine SalamancaNoch keine Bewertungen

- Boquet 2017, Philippines, Springer GeographyDokument856 SeitenBoquet 2017, Philippines, Springer Geographyfusonegro100% (3)

- DX210WDokument13 SeitenDX210WScanner Camiones CáceresNoch keine Bewertungen

- Global Supply Chain Planning at IKEA: ArticleDokument15 SeitenGlobal Supply Chain Planning at IKEA: ArticleyashNoch keine Bewertungen

- Arithmetic of EquitiesDokument5 SeitenArithmetic of Equitiesrwmortell3580Noch keine Bewertungen

- Malta in A NutshellDokument4 SeitenMalta in A NutshellsjplepNoch keine Bewertungen

- Pune HNIDokument9 SeitenPune HNIAvik Sarkar0% (1)

- Proposal Tanaman MelonDokument3 SeitenProposal Tanaman Melondr walferNoch keine Bewertungen

- Aus Tin 20105575Dokument120 SeitenAus Tin 20105575beawinkNoch keine Bewertungen

- Chap014 Solution Manual Financial Institutions Management A Risk Management ApproachDokument19 SeitenChap014 Solution Manual Financial Institutions Management A Risk Management ApproachFami FamzNoch keine Bewertungen

- Flex Parts BookDokument16 SeitenFlex Parts BookrodolfoNoch keine Bewertungen

- Thai LawDokument18 SeitenThai LawsohaibleghariNoch keine Bewertungen

- Smart Home Lista de ProduseDokument292 SeitenSmart Home Lista de ProduseNicolae Chiriac0% (1)

- TCW Act #4 EdoraDokument5 SeitenTCW Act #4 EdoraMon RamNoch keine Bewertungen

- Measure of Eco WelfareDokument7 SeitenMeasure of Eco WelfareRUDRESH SINGHNoch keine Bewertungen