ISSN 2394-3777 (Print)

ISSN 2394-3785 (Online)

Available online at www.ijartet.com

International Journal of Advanced Research Trends in Engineering and Technology (IJARTET)

Vol. 4, Issue 4, April 2017

A PPDM Framework to Analyze Privacy and Utility Trade-Off for MNQIA Anonymization Algorithm

K. Abrar Ahmed1, H. Abdul Rauf2

Research Scholar, Department of CSE, Manonmaniam Sundaranar University1

Academic Dean, Sree Sastha Institute of Engineering and Technology2

Abstract: The growth of the Internet has made it far easier for individuals to share personal data for applications such as analysis, mining, forecasting and prediction. Although this sharing supports knowledge management and information retrieval, it also gives adversaries ample opportunity to misuse the available data, which threatens the privacy of the individuals involved. Several approaches, such as anonymization and perturbation, have been proposed, and they have drawn researchers' attention to privacy-preserving data mining (PPDM). K-Anonymization is a widely used approach for preventing an adversary from compromising the privacy of the individuals represented in the data. Our framework concentrates on adopting a K-Anonymization strategy that also serves the utility aspects of PPDM, where utility is judged by classification accuracy. Our approach uses bucketization and MMDCF to achieve K-anonymization, together with a classification framework that seeks the K value offering the best utility. Our experimental results help derive an optimal K that lets the data owner publish a privatized database with high utility, based on information loss and classification accuracy.

Keywords: PPDM, K-Anonymization, Classification, Bucketization, MMDCF.

I. INTRODUCTION

In recent years, data about individuals have been shared or exchanged for many reasons. Such data contain sensitive information about users, which raises privacy concerns. PPDM plays a major role in protecting such data. The main idea of PPDM is to modify or transform the original data in such a way that no one can identify the owner's sensitive information. Many PPDM techniques have been proposed, among them secure multi-party computation, cryptography and randomization. Many researchers have done outstanding work on achieving privacy on datasets using K-Anonymity. The main idea of K-Anonymity is that each record cannot be distinguished from at least (K-1) other records. K-Anonymity uses two techniques, generalization and suppression. Generalization replaces a QI attribute value with a less specific but semantically consistent value. Suppression removes values from the records in the dataset and replaces them with a special symbol such as *, which means that any value may be present. Suppression reduces the quality of the information about individuals.

Most PPDM techniques anonymize a single attribute; at present only a few works anonymize multiple QI attributes at once. In our work we use a bucketization approach combined with Maximal Multi-Dimensional Capacity First (MMDCF). The main idea of bucketization is to partition the records into equivalence classes over the multiple QI attributes. The QI values in each equivalence class are generalized to the same value under the K-Anonymity principle. MMDCF is a linear greedy algorithm used to choose records from the buckets. We use classification techniques to measure the utility of the anonymized data.

The rest of the paper is organized as follows: Section 2 covers the preliminaries and related work. Section 3 describes the methodology used to achieve K-Anonymity. Section 4 presents the experiments and results. Section 5 concludes the paper.

II. RELATED WORKS

In paper [Ninghui Li, Tiancheng Li, Suresh Venkatasubramanian], the authors observe that the K-Anonymity method still suffers from disclosure problems, and that l-diversity is not sufficient to prevent attribute disclosure. They address the problem by proposing a technique called t-closeness. They also explain the motivation for t-closeness and show its advantages through experiments.

In paper [Ji-Won Byun, Ashish Kamra, Elisa Bertino, Ninghui Li], the authors aim to reduce the information loss incurred by anonymizing datasets. They propose a method called K-member clustering, which minimizes information loss and preserves data quality. In addition, they show that the underlying problem is NP-hard and develop a metric to calculate the information loss induced by generalization.

In paper [He Zhi-qiang, Chen Gang], the authors apply K-Anonymity for the first time in mobile networks to secure Location Based Services (LBS). Since K-Anonymity has drawbacks such as the neighborhood attack, the identity disclosure attack and the background knowledge attack, the authors identify the sources of these problems and develop a new method using hierarchical clustering. They verify experimentally that their method improves on existing K-Anonymity.

In paper [Peter Kieseberg, Sebastian Schrittwieser, Martin Mulazzani, Isao Echizen], the authors propose a method that addresses two problems that occur when sensitive information about individuals is exchanged. They also devise a policy for detecting collaborative attacks, as well as an anonymization scheme.

In paper [Chen Wang, Lianzhong Liu, Lijie Gao], the authors observe that almost all anonymization algorithms in current use depend on a generalization hierarchy, either a domain generalization hierarchy (DGH) or a value generalization hierarchy (VGH), to make data anonymous. This inevitably causes information loss. The authors develop a method based on the K-member clustering algorithm and show experimentally that it incurs less information loss than other K-Anonymity methods.

III. METHODOLOGY

Our methodology concentrates on anonymization and classification. We anonymize the original dataset using the K-Anonymization approach and derive the information loss for various values of K (i.e. K = 5, 6, ..., 12). We then apply the ZeroR classification method to determine the utility of the anonymized dataset. Our methodology thus attempts to deliver the optimal K value for anonymizing the original dataset, so that the two conflicting goals of privacy and utility reach a better trade-off. The factors considered in deriving the optimal K value are i) information loss with respect to privacy and ii) classification accuracy with respect to utility.

A. Single QI Attribute Anonymization:

Many K-Anonymization algorithms have been proposed [2][3][4] that anonymize only a single attribute at a time, with the aim of making individuals' data very similar in the published data. In other words, each record must match at least (k-1) other records on its QI value.

B. Multiple Numerical QI Attribute Anonymization [13]:

In this section we briefly explain our K-Anonymization process. In paper [13] we considered a dataset S with n records, where every record has m numerical quasi-identifier (QI) attributes, denoted QI1, QI2, ..., QIm. For each QIi, 1 ≤ i ≤ m, we clustered its values into multiple groups at a coarse-grained level.

From these groups of clusters we create a multi-dimensional bucket. The main idea is that the n tuples are mapped into their corresponding buckets according to their own QI attribute values. Once the multi-dimensional bucket is constructed, we use the maximal multi-dimensional capacity first (MMDCF) method [5] to choose different records to form a QI group. The selection priority of MMDCF is

$$\mathrm{Selection}(buk\langle QI_{01}, QI_{02}, \ldots, QI_{0d}\rangle) = \sum_{i=1}^{d} \mathrm{size}(QI_{0i}) + \mathrm{size}(buk\langle QI_{01}, QI_{02}, \ldots, QI_{0d}\rangle)$$

where size(QI0i) is the number of tuples in group QI0i of dimension i and size(buk<QI01, QI02, ..., QI0d>) is the number of tuples in the bucket itself. Once we have selected different records to make up a matching QI group, we have formed a K-anonymous table.

1) Example [13]:

In this section we explain our method using a concrete example. In paper [13] we considered the following microdata.


| Id | Age | Zip | Salary | Bonus |
|----|-----|-----|--------|-------|
| T1 | 27 | 12,000 | 1000 | 1010 |
| T2 | 22 | 22,000 | 2975 | 1010 |
| T3 | 34 | 24,000 | 10,100 | 950 |
| T4 | 26 | 17,000 | 1040 | 2000 |
| T5 | 30 | 16,000 | 3050 | 2020 |
| T6 | 32 | 14,000 | 5000 | 3035 |
| T7 | 22 | 19,000 | 5120 | 2950 |
| T8 | 37 | 26,000 | 7950 | 4100 |
| T9 | 39 | 27,000 | 1050 | 6000 |

Table: 1 Micro data

There are two quasi-identifiers, age and zip code, and two sensitive attributes, salary and bonus. We put the ages into four cluster groups: A11={26,27}, A12={22,22}, A13={30,32,34} and A14={37,39}. Likewise, we put the zip codes into four cluster groups: A21={12,000,14,000}, A22={17,000,16,000,19,000}, A23={22,000,24,000} and A24={26,000,27,000}, as shown in Table 2.

| Age-Group (A1i) | Zip-code Group (A2i) |
|-----------------|----------------------|
| A11={22,22} | A21={12,000,14,000} |
| A12={26} | A22={17,000,16,000,19,000} |
| A13={32,33,34,35} | A23={22,000,24,000} |
| A14={37,39} | A24={26,000,27,000} |

Table: 2 Two cluster groups

We make age the first dimension and zip code the second dimension. We then check the age and zip code values of tuple T1 against the two cluster groups.

|     | A11 | A12 | A13 | A14 |
|-----|-----|-----|-----|-----|
| A21 |     |     | {T1,T6} |     |
| A22 | T7  | T4  | T5  |     |
| A23 | T2  |     | T3  |     |
| A24 |     |     |     | {T8,T9} |

Table: 3 Two Dimensional Cluster

Tuple T1 belongs to groups A13 and A21, so we put T1 in the corresponding cell. Similarly, we place all the other records. This gives the two-dimensional bucket shown in the table above.

According to MMDCF [15], we can choose different records to make up the matching QI group. For example, age and zip code are chosen as the QI attributes. The selection priority is then evaluated as follows.

Group 1:

Iteration-1

Selection(buk<A21,A13>) = 8 tuples. The bucket holds {T1, T6}; to break the tie, tuple T1 is selected.

There are 4 tuples in A13, 2 tuples in A21 and 2 tuples in buk<A21,A13>, 8 tuples in total.

Iteration-2

Selection(buk<A22,A11>) = 6 tuples; T7 is selected.

Selection(buk<A22,A12>) = 5 tuples (T4).

Selection(buk<A22,A13>) = 8 tuples; a tuple has already been selected from A13.

There are 2 tuples in A11, 3 tuples in A22 and 1 tuple in buk<A22,A11>, 6 tuples in total. The priority of buk<A22,A12> is 5 tuples, and that of buk<A22,A13> is 8 tuples, which is rejected because a tuple from A13 was already selected in Iteration 1. The highest remaining priority is therefore buk<A22,A11>, so tuple T7 is selected, and we then shield dimension <A11>.


14,000-

T2 22-32 2975 1010

Iteration-3 20,000

14,000-

T4 22-32 1040 2000

Selection(buk< A23,A11> = 5 tuples 20,000

14,000-

Already tuple selected from A11 T6 22-32

20,000

5000 3035

Slection(buk< A23,A13> = 6 tuples 24,000-

Iteration-4

Already tuple selected from A13

T3 34-37

26,000

10,100 950

Iteration-4 24,000-

T5 34-37 3050 2020

26,000

24,000-

T8 34-37 7950 4100

Iteration-4 26,000

Table: 5 Anonymized micro data

Selection (buk< A24,A14> = 6 tuples {T8

,T9} to break the tie highest tuple T9 is Selected C. ZeroR classification Methods:

ZeroR is one of the easiest classification

methods that depends on target and omits all predictors.

Finally in all four iteration we selected 4 tuples in a first ZeroR classifier [6] simply predicts the majority category

group (T1, T7, T9} (class).Although there is no predictability power in ZeroR, it

Group 2: is useful for determining a baseline performance as a

guidelines for other classification methods. Algorithm

The tuples which is selected in group one should be constructs a frequency table for the target and selects its

removed from Two Dimensional Cluster table and repeat most frequent value. Predictors contribution is not used in

this procedure. this model. The Model Evaluation for zeroR only predicts

the majority class correctly. As mention earlier, zeroR is

A1 A12 A13 A14 only useful for determining a baseline performance for other

classification methods

A21 T6

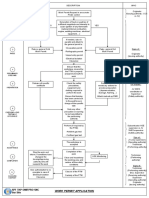

Significant steps in PPDM Framework:

A22 T4 T5

A23 T2 T3 Step:1 Choose N QI Attributes

Step:2 Cluster the each QI Attributes in

A24 cluster based on degree of approximation.

T9

Step:3 Construct Two dimensional

Table: 4 Two Dimensional Cluster buckets.

The same procedure has to be followed to obtain Step:4 Then apply MMDCF [15], and

second group which contains tuples {T2,T4,T6} and Third choose different record to make up the

group which tuples contain {T3,T5,T8}.From these group matching QI-Group.

we perform generalization on multiple Quasi Identifier Step:5 Repeat step3,4 untill K-

attributes to get 3-Anonymization with 3-diversity on micro Anonymization and L-diversity is

data as shown below. achieved.

Step:6 Apply ZeroR Claasification

ID Age Zip Salary Bonus

Algorithm to find out the Uitiliy and

12,000-

T1 22-39 1000 1010 Privacy of MNQIA Method.

27,000

12,000-

T7 22-39 5120 2950

27,000

12,000-

T9 22-39 1050 6000

27,000
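The selection-priority arithmetic of the worked example can be sketched in Python. This is a minimal illustration rather than the authors' implementation: the dictionary `BUCKETS` transcribes the cell layout of Table 3, and the names `group_sizes` and `selection_priority` are hypothetical. It assumes that the priority of a bucket is the number of tuples in each of its QI groups plus the number of tuples in the bucket itself, as the Iteration-1 arithmetic (4 + 2 + 2 = 8) suggests.

```python
from collections import defaultdict

# Two-dimensional bucket transcribed from Table 3:
# (zip-code group, age group) -> tuple ids in that cell.
BUCKETS = {
    ("A21", "A13"): ["T1", "T6"],
    ("A22", "A11"): ["T7"],
    ("A22", "A12"): ["T4"],
    ("A22", "A13"): ["T5"],
    ("A23", "A11"): ["T2"],
    ("A23", "A13"): ["T3"],
    ("A24", "A14"): ["T8", "T9"],
}


def group_sizes(buckets):
    """Count how many tuples fall in each QI group (each row and each column)."""
    sizes = defaultdict(int)
    for groups, tuples in buckets.items():
        for group in groups:
            sizes[group] += len(tuples)
    return dict(sizes)


def selection_priority(bucket_key, buckets):
    """Assumed priority: sizes of the bucket's QI groups plus the bucket size."""
    sizes = group_sizes(buckets)
    return sum(sizes[group] for group in bucket_key) + len(buckets[bucket_key])


if __name__ == "__main__":
    for key in sorted(BUCKETS):
        print(key, selection_priority(key, BUCKETS))
```

Under these assumptions the sketch gives 8 for buk<A21,A13>, 6 for buk<A22,A11> and 5 for buk<A22,A12>, which agrees with Iterations 1 and 2. Shielding of already-used dimensions and tie-breaking inside a bucket are deliberately left out.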


D. Information Loss [13]:

Since we use bucketization and the MMDCF method to modify the data and form clusters, the anonymization suffers from information loss, because some original QI values in every record are either replaced with less specific values or removed entirely. Our goal is to anonymize a dataset so that it provides privacy while maintaining data utility. We use the following metric to calculate the information loss.

Following paper [7], let D* be the anonymized version of dataset D. D* corresponds to a set of clusters C = {c1, c2, c3, ..., cp}. All records in a given cluster cj are anonymized. The information loss incurred in anonymizing a dataset D to D* is given in [7] as

IL(D,D*) = (1)

where IL(Cj) is the information loss of cluster Cj, defined as the sum of the information loss incurred in anonymizing every sequence S in Cj, as in [7].

IL(C) = (2)

where |C| is the number of sequences in the cluster Cj, and IL(S,S*) is the information loss incurred in anonymizing the sequence S to the sequence S*. Each sequence is anonymized by generalizing or suppressing some QI values in some of its events, as in [7]. We therefore define the information loss of a sequence in terms of the information loss of its events.

Let H be the generalization hierarchy of the attribute A. We use the loss metric (LM) measure [7] to capture the amount of information loss incurred by generalizing the value a of the attribute A to one of its ancestors with respect to the generalization hierarchy H, as in [7].

IL( , ) = (3)

where |L(x)| is the number of leaves in the subtree rooted at x. The information loss of each event e is then defined as in [7]

IL(e,e*) = (4)

where e* is the ancestor of event e, e(n) is the value of the nth QI of the event e and e*(n) is its corresponding value in the event e*. Hence the information loss incurred by anonymizing each sequence is as follows

IL(S,S*) = (5)

The generalization hierarchy of the attribute age is shown below.

Fig-1: Generalization Hierarchy of Age Attribute

IV. EXPERIMENT AND RESULT

We took the Adult dataset [13] from the UCI Machine Learning Repository, which consists of attributes such as age, workclass, fnlwgt, education, education number, marital status, sex and race. From these, the attributes age, sex and race were selected and anonymized using our anonymization method. We obtained anonymized datasets for different values of K.

For each K value we calculated the information loss of the MNQIA method and of the Datafly method using the metric explained in Section III-D. Table 6 shows the information loss obtained for the different K values. The table makes clear that MNQIA incurs lower information loss than Datafly at every value of K.
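As a rough illustration of how a generalization-based loss of the kind described in Section III-D can be computed, the sketch below assumes one common form of the loss metric, (|L(x)| - 1) / (|L(root)| - 1), where |L(x)| is the number of leaves under the generalized value x. This form is an assumption and may differ in detail from the definition in [7]. The hierarchy `AGE_HIERARCHY` and the function names are hypothetical and are not taken from Fig-1.

```python
# Hypothetical generalization hierarchy for age: parent -> children.
# Leaves are original values; the intervals are illustrative only.
AGE_HIERARCHY = {
    "[20-40)": ["[20-30)", "[30-40)"],
    "[20-30)": ["22", "26", "27"],
    "[30-40)": ["30", "32", "34", "37", "39"],
}


def leaf_count(node, hierarchy):
    """Number of leaves in the subtree rooted at node (a leaf counts as 1)."""
    children = hierarchy.get(node)
    if not children:
        return 1
    return sum(leaf_count(child, hierarchy) for child in children)


def loss_metric(generalized, root, hierarchy):
    """Assumed LM form: 0 for an ungeneralized leaf, 1 for the root."""
    total_leaves = leaf_count(root, hierarchy)
    return (leaf_count(generalized, hierarchy) - 1) / (total_leaves - 1)


if __name__ == "__main__":
    for value in ["22", "[20-30)", "[30-40)", "[20-40)"]:
        print(value, loss_metric(value, "[20-40)", AGE_HIERARCHY))
```

With this assumed form, keeping an original value costs nothing, generalizing to the root costs 1, and intermediate intervals fall in between in proportion to how many original values they cover.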


| K-Value | Information Loss: MNQIA Method | Information Loss: Datafly Method |
|---------|--------------------------------|----------------------------------|
| 4 | 0 | 4.25 |
| 5 | 5.59 | 6.02 |
| 6 | 7.37 | 7.5 |
| 7 | 8.84 | 9.03 |
| 8 | 10.09 | 11.5 |
| 9 | 12.07 | 12.5 |
| 10 | 15.17 | 16.8 |
| 11 | 18.47 | 19.04 |
| 12 | 22.38 | 23.4 |

TABLE 6: Information Loss for Different Values of K-level.

Sample screenshots of the implemented output, showing the information loss for K=5 and K=12, are given below.

Fig-2: Information Loss MNQIA (K=5)

Fig-3: Implemented Output of Generalization Hierarchy for Age Attribute

Fig-4: Information Loss of MNQIA (K=12)

We used the Weka tool to classify the original Adult dataset with the ZeroR classification method and obtained a classification accuracy of 78.86. Similarly, we applied ZeroR classification to the different K-anonymized datasets and obtained a different classification accuracy for each K value, as shown below.

| K-Value | Classification Accuracy for MNQIA |
|---------|-----------------------------------|
| 5 | 76.93 |
| 6 | 75.33 |
| 7 | 73.45 |
| 8 | 71.63 |
| 9 | 71.23 |
| 10 | 70.02 |
| 11 | 69.15 |
| 12 | 68.12 |

Table-7: Classification Accuracy for K-Anonymized Table.
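The ZeroR baseline described in Section III-C can be sketched in a few lines of Python. The experiments reported here were run in Weka; this plain-Python version is only an illustration, and the class name `ZeroR`, the helper `accuracy` and the toy labels are assumptions, not part of the experimental setup.

```python
from collections import Counter


class ZeroR:
    """Baseline classifier that always predicts the most frequent class."""

    def fit(self, labels):
        # Build the frequency table of the target and keep its most common value.
        self.majority_ = Counter(labels).most_common(1)[0][0]
        return self

    def predict(self, n_rows):
        # Predictors are ignored, so only the number of rows matters.
        return [self.majority_] * n_rows


def accuracy(y_true, y_pred):
    """Fraction of predictions that equal the true label."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


if __name__ == "__main__":
    # Toy labels, not taken from the Adult dataset.
    train = ["<=50K"] * 7 + [">50K"] * 3
    test = ["<=50K", ">50K", "<=50K", "<=50K"]
    model = ZeroR().fit(train)
    print(accuracy(test, model.predict(len(test))))  # prints 0.75
```

Because ZeroR ignores every predictor, its accuracy is simply the share of the majority class in the evaluated data.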


Fig-5: Comparison of Information Loss for MNQIA versus Datafly

The sample screenshot of classification accuracy is shown below.

Fig-6: Classification accuracy when K=5

V. CONCLUSION

Several K-Anonymization techniques have been developed to prevent the disclosure of individuals' sensitive information. This paper presents a framework that concentrates on adopting a K-Anonymization strategy that suits both the utility and the privacy aspects of privacy-preserving data mining. Using our anonymization technique, we find that at K=8 the information loss shows minimum deviation and the classification accuracy is better. We therefore suggest K=8 as the best anonymization factor for achieving both privacy and utility when data are to be published.

REFERENCES

[1]. Ji-Won Byun, Ashish Kamra, Elisa Bertino, Ninghui Li, Efficient K-Anonymization Using Clustering Techniques, DASFAA 2007, LNCS 4443, pp. 188-200, 2007.

[2]. Jiuyong Li, R. Chi-Wing Wong, Ada Wai-Chee Fu, Jian Pei, Anonymization by Local Recoding in Data with Attribute Hierarchical Taxonomies, IEEE Transactions on Knowledge and Data Engineering, Vol. 20, No. 9, pp. 1-14, 2008.

[3]. Chen Wang, Lianzhong Liu, Lijie Gao, Research on K-Anonymity Algorithm in Privacy Protection, Proc. 2nd International Conf. on Computer and Information Application (ICCIA-2012), pp. 0194-0196, 2012.

[4]. Chitra Nasa, Suman, Evaluation of Different Classification Techniques for Web Data, International Journal of Computer Applications, Vol. 52, No. 9, pp. 0975-8887, 2012.

[5]. Ashwin Machanavajjhala, Gehrke, Daniel Kifer, L-Diversity: Privacy Beyond K-Anonymity, Proc. 22nd

[6]. Ninghui Li, Tiancheng Li, Suresh Venkatasubramanian, T-Closeness: Privacy Beyond K-Anonymity and L-Diversity, Proc. 23rd International Conf. on Data Engineering (ICDE), pp. 24, 2008.

[7]. He Zhi-qiang, Chen Gang, Improvement of K-Anonymity Location Privacy Protection Algorithm Based on Hierarchical Clustering, Applied Mechanics and Materials, vols. 599-601, pp. 1553-1557, 2014.

[8]. Peter Kieseberg, Sebastian Schrittwieser, Martin Mulazzani, Isao Echizen, An Algorithm for Collusion-Resistant Anonymization and Fingerprinting of Sensitive Microdata, Institute of Information Management, vol. 24, pp. 113-124, 2014.

[9]. Qinghai Liu, Hong Shen and Yingpeng Sang, Privacy Preserving Data Publishing for Multiple Numerical Sensitive Attributes, Tsinghua Science and Technology, vol. 20, no. 3, pp. 246-254, 2015.

[10]. Jianmin Han, Fangwei Luo, Jianfeng Lu and Hao Peng, SLOMS: A Privacy Preserving Data Publishing Method for Multiple Sensitive Attributes Microdata, Journal of Software, vol. 8, no. 12, pp. 3096-3104, 2013.

[11]. Morvarid Sehatkar, Stan Matwin, Clustering-Based Multi-Dimensional Sequence Data Anonymization, Proc. EDBT/ICDT 2014 Joint Conference, pp. 385-390, 2014.


[12]. K. Abrar Ahmed, H. Abdul Rauf, A. Rajesh, Study of K-Anonymization Level with Respect to Information Loss, Australian Journal of Basic and Applied Sciences, vol. 10, no. 2, pp. 1-8, 2016.

[13]. K. Abrar Ahmed, H. Abdul Rauf, A. Rajesh, MNQIA: A Method for Multiple Numerical QI Attribute Anonymization, IJCTA, vol. 9, no. , pp. 227-236, 2016.

[14]. http://storm.cis.fordham.edu/~gweiss/data-mining/weka-data/diabetes.arff

[15]. http://archive.ics.uci.edu/ml/datasets/BankMarketing

[16]. http://archive.ics.uci.edu/ml/datasets/ILPD+%28Indian+Liver+Patient+Dataset%29

[17]. Kaufman, L. and Rousseeuw, P.J., Finding Groups in Data: An Introduction to Cluster Analysis, John Wiley, 1990.

All Rights Reserved 2017 IJARTET
