Example infrastructure outage incident report

Friday, May 13, 2077

By the Example Security Team

Earlier this week we experienced an outage in our API infrastructure. Today we’re providing an incident report that details the nature of the outage and our response.

The following is the incident report for the Example Security outage that occurred on April 30, 2077. We understand this service issue has impacted our valued developers and users, and we apologize to everyone who was affected.

Issue Summary

From 6:26 PM to 7:58 PM PT, requests to most Example Security APIs resulted in 500 error response messages. Example Security applications that rely on these APIs also returned errors or had reduced functionality. At its peak, the issue affected 100% of traffic to this API infrastructure. Users could continue to access certain APIs that run on separate infrastructures. The root cause of this outage was an invalid configuration change that exposed a bug in a widely used internal library.

Timeline (all times Pacific Time)

6:19 PM: Configuration push begins

6:26 PM: Outage begins

6:26 PM: Pagers alerted teams

6:54 PM: Failed configuration change rollback

7:15 PM: Successful configuration change rollback

7:19 PM: Server restarts begin

7:58 PM: 100% of traffic back online
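
The intervals implied by the timeline can be worked out directly. The following is a minimal Python sketch, assuming every entry falls on the same evening; the dictionary keys and the helper name `minutes_between` are illustrative and not part of the original report.

```python
from datetime import datetime

# Timeline entries copied from the report (all Pacific Time, same evening).
TIMELINE = {
    "config_push": "6:19 PM",
    "outage_begins": "6:26 PM",
    "failed_rollback": "6:54 PM",
    "successful_rollback": "7:15 PM",
    "restarts_begin": "7:19 PM",
    "fully_restored": "7:58 PM",
}


def minutes_between(start: str, end: str) -> int:
    """Return whole minutes between two same-day clock times such as '6:19 PM'."""
    fmt = "%I:%M %p"
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return int(delta.total_seconds() // 60)


# Time from the configuration push to the start of the outage.
push_to_outage = minutes_between(TIMELINE["config_push"], TIMELINE["outage_begins"])
# Total outage duration.
outage_duration = minutes_between(TIMELINE["outage_begins"], TIMELINE["fully_restored"])
# Time from the start of the outage to the successful rollback.
outage_to_rollback = minutes_between(TIMELINE["outage_begins"], TIMELINE["successful_rollback"])

print(push_to_outage, outage_duration, outage_to_rollback)  # prints: 7 92 49
```

Under these assumptions the outage lasted 92 minutes, of which 49 passed before the configuration was successfully rolled back.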

Root Cause

At 6:19 PM PT, a configuration change was inadvertently released to our production environment without first being released to the testing environment. The change specified an invalid address for the authentication servers in production. This exposed a bug in the authentication libraries that caused them to block permanently while attempting to resolve the invalid address to physical services. In addition, the internal monitoring systems permanently blocked on this call to the authentication library. The combination of the bug and the configuration error quickly caused all of the serving threads to be consumed. Traffic was permanently queued waiting for a serving thread to become available. The servers began repeatedly hanging and restarting as they attempted to recover, and at 6:26 PM PT the service outage began.
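
The failure mode described above, a lookup that never returns and so ties up its serving thread, is commonly bounded with a deadline. The following Python sketch illustrates the idea only; it is not the actual authentication library. `resolve_auth_backend`, `KNOWN_BACKENDS`, `call_with_deadline`, and the addresses are hypothetical stand-ins.

```python
import threading


class ResolveTimeout(Exception):
    """Raised when a lookup does not finish within its deadline."""


def call_with_deadline(fn, timeout_s, *args):
    """Run fn(*args) in a helper thread and give up after timeout_s seconds."""
    outcome = {}

    def worker():
        try:
            outcome["value"] = fn(*args)
        except Exception as exc:  # hand the error back to the caller
            outcome["error"] = exc

    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    thread.join(timeout_s)
    if thread.is_alive():
        # The caller is released; the helper thread is abandoned.
        raise ResolveTimeout(f"no answer within {timeout_s}s")
    if "error" in outcome:
        raise outcome["error"]
    return outcome["value"]


# Hypothetical address table standing in for the real resolution step.
KNOWN_BACKENDS = {"auth.internal.example": "10.0.0.5"}


def resolve_auth_backend(address):
    """Simulated buggy lookup: blocks forever on an unknown address."""
    if address not in KNOWN_BACKENDS:
        threading.Event().wait()  # never returns, like the bug described above
    return KNOWN_BACKENDS[address]
```

With this wrapper, `call_with_deadline(resolve_auth_backend, 0.2, "bad.address.invalid")` raises `ResolveTimeout` instead of consuming a serving thread indefinitely. The abandoned helper thread still exists in this sketch, so a real fix would more likely place the deadline inside the library call itself.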

Resolution and recovery

At 6:26 PM PT, the monitoring systems alerted our engineers, who investigated and quickly escalated the issue. By 6:40 PM, the incident response team identified that the monitoring system was exacerbating the problem caused by this bug.

At 6:54 PM, we attempted to roll back the problematic configuration change. This rollback failed due to complexity in the configuration system, which caused our security checks to reject the rollback. These problems were addressed and we successfully rolled back at 7:15 PM.

Some jobs started to slowly recover, and we determined that the overall recovery would be faster with a restart of all of the API infrastructure servers globally. To help with the recovery, we turned off some of our monitoring systems which were triggering the bug. As a result, we decided to restart servers gradually (at 7:19 PM) to avoid possible cascading failures from a wide-scale restart. By 7:49 PM, 25% of traffic was restored, and 100% of traffic was routed to the API infrastructure at 7:58 PM.
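
A gradual restart of the kind described above can be sketched as a batched loop that halts when a batch fails its health check. This is an illustrative Python sketch, not the tooling that was actually used; the function name and the `restart` and `is_healthy` callbacks are assumptions.

```python
from typing import Callable, List, Sequence


def restart_gradually(
    servers: Sequence[str],
    batch_size: int,
    restart: Callable[[str], None],
    is_healthy: Callable[[str], bool],
) -> List[str]:
    """Restart servers batch by batch and stop at the first unhealthy batch.

    Returns the servers that were restarted and passed the health check.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    recovered: List[str] = []
    for start in range(0, len(servers), batch_size):
        batch = servers[start:start + batch_size]
        for server in batch:
            restart(server)
        if not all(is_healthy(server) for server in batch):
            break  # halt the rollout so a bad batch cannot cascade
        recovered.extend(batch)
    return recovered
```

Keeping the batch small limits how much capacity is out of service at any moment, at the cost of a longer overall recovery.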

Corrective and Preventative Measures

In the last two days, we’ve conducted an internal review and analysis of the outage. The following are actions we are taking to address the underlying causes of the issue and to help prevent recurrence and improve response times:

Disable the current configuration release mechanism until safer measures are implemented. (Completed.)

Change the rollback process to be quicker and more robust.

Fix the underlying authentication libraries and monitoring to correctly time out or interrupt on errors.

Programmatically enforce staged rollouts of all configuration changes.

Improve the process for auditing all high-risk configuration options.

Add a faster rollback mechanism and improve the traffic ramp-up process, so any future problems of this type can be corrected quickly.

Develop a better mechanism for quickly delivering status notifications during incidents.
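
One of the measures above, programmatically enforcing staged rollouts, can be illustrated with a small gate that refuses a production release until the same change has been released to testing. This is a minimal Python sketch under stated assumptions: the two stage names come from this report, while `ConfigChange`, `release`, and `RolloutOrderError` are hypothetical names, and the actual push step is omitted.

```python
from dataclasses import dataclass, field
from typing import Set

# Ordered stages; a change must pass through each earlier stage first.
STAGES = ("testing", "production")


class RolloutOrderError(Exception):
    """Raised when a change tries to skip an earlier stage."""


@dataclass
class ConfigChange:
    change_id: str
    released_to: Set[str] = field(default_factory=set)


def release(change: ConfigChange, target: str) -> None:
    """Record a release to target, refusing if an earlier stage was skipped."""
    if target not in STAGES:
        raise ValueError(f"unknown stage: {target}")
    required = STAGES[:STAGES.index(target)]
    missing = [stage for stage in required if stage not in change.released_to]
    if missing:
        raise RolloutOrderError(
            f"{change.change_id}: release to {', '.join(missing)} first"
        )
    # The real configuration push would happen here (omitted in this sketch).
    change.released_to.add(target)
```

With this gate, calling `release(change, "production")` on a fresh change raises `RolloutOrderError`, while releasing to `"testing"` and then to `"production"` succeeds. Further stages could be added by extending `STAGES`.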

Example Security is committed to continually and quickly improving our technology and operational processes to prevent outages. We appreciate your patience and again apologize for the impact to you, your users, and your organization. We thank you for your business and continued support.

Sincerely,

The Example Security Team

Posted by Joe Napster, Editor

Das könnte Ihnen auch gefallen

- Automating SAP System Refresh Systems - WP - V2a PDFDokument7 SeitenAutomating SAP System Refresh Systems - WP - V2a PDFdithotse757074Noch keine Bewertungen

- DB Replay White Paper Ow07 1-2-133325Dokument19 SeitenDB Replay White Paper Ow07 1-2-133325IgorNoch keine Bewertungen

- Computer Science Project File For Class XII CBSE On The Topic: Bank Customer Management SystemDokument75 SeitenComputer Science Project File For Class XII CBSE On The Topic: Bank Customer Management SystemJYOTI DOGRA66% (29)

- Abstraction and Empathy - ReviewDokument7 SeitenAbstraction and Empathy - ReviewXXXXNoch keine Bewertungen

- Functions of Ecgc and Exim BankDokument12 SeitenFunctions of Ecgc and Exim BankbhumishahNoch keine Bewertungen

- Percy JacksonDokument13 SeitenPercy JacksonDawn Marco0% (2)

- Strengthsfinder Sept GBMDokument32 SeitenStrengthsfinder Sept GBMapi-236473176Noch keine Bewertungen

- Slack Incident Jan 04 2021 RCA FinalDokument3 SeitenSlack Incident Jan 04 2021 RCA FinalAnjali karnikaNoch keine Bewertungen

- Azure Status History - Microsoft AzureDokument6 SeitenAzure Status History - Microsoft Azurebruno.miguelscribd1Noch keine Bewertungen

- TIBCO BusinessWorks Performance TuningDokument4 SeitenTIBCO BusinessWorks Performance TuningArun_Kumar_57100% (1)

- Risk Mitigation and Performance TestingDokument6 SeitenRisk Mitigation and Performance TestingtalonmiesNoch keine Bewertungen

- Automation Regression Suite Creation For An Insurance Company in Asia-PacificDokument5 SeitenAutomation Regression Suite Creation For An Insurance Company in Asia-PacificVivek ShNoch keine Bewertungen

- CHAPTER 3 Network MaintenanceDokument9 SeitenCHAPTER 3 Network MaintenanceOge EstherNoch keine Bewertungen

- Performance BTS 1407951917Dokument10 SeitenPerformance BTS 1407951917Sumana NanjundaiahNoch keine Bewertungen

- Objectives and ScopeDokument7 SeitenObjectives and Scopedewang goelNoch keine Bewertungen

- Access Control SystemDokument9 SeitenAccess Control SystemTim McCardleNoch keine Bewertungen

- SqaDokument17 SeitenSqaTnvs PraveenNoch keine Bewertungen

- An Open Source Mail Server Migration Experience: Iredmail: Corresponding AuthorDokument5 SeitenAn Open Source Mail Server Migration Experience: Iredmail: Corresponding AuthornemamNoch keine Bewertungen

- MI0033 Set 2Dokument8 SeitenMI0033 Set 2Pradeep KrNoch keine Bewertungen

- Slac Pub 9761Dokument9 SeitenSlac Pub 9761JoseGocelaTaporocJr.Noch keine Bewertungen

- Agility Eservices Performance Test Summary Report For Internet BasedDokument30 SeitenAgility Eservices Performance Test Summary Report For Internet BasedSreenivasulu Reddy SanamNoch keine Bewertungen

- Failure Detection and Revival For Peer-To-Peer Storage Using MassDokument4 SeitenFailure Detection and Revival For Peer-To-Peer Storage Using MassInternational Organization of Scientific Research (IOSR)Noch keine Bewertungen

- Enhancing Your IT Environment Using SnapshotsDokument2 SeitenEnhancing Your IT Environment Using SnapshotsVR6SystemsNoch keine Bewertungen

- Akips ManualDokument7 SeitenAkips ManualJohn155Noch keine Bewertungen

- ZA380 Unit19 ScriptDokument11 SeitenZA380 Unit19 ScriptVenkat RamanaNoch keine Bewertungen

- Certificate of Approval: Signature: Supervisor: Zahid IqbalDokument2 SeitenCertificate of Approval: Signature: Supervisor: Zahid IqbalasamNoch keine Bewertungen

- Test The Rest: Testing Restful Webservices Using Rest AssuredDokument11 SeitenTest The Rest: Testing Restful Webservices Using Rest Assuredkarthick555Noch keine Bewertungen

- Project DetailsDokument10 SeitenProject DetailsBranol SpinNoch keine Bewertungen

- MonaDokument8 SeitenMonapratiksha maraneNoch keine Bewertungen

- Defect SLADokument2 SeitenDefect SLArajat_rathNoch keine Bewertungen

- TA3 Martins, HJKJKDokument9 SeitenTA3 Martins, HJKJKBrunoNoch keine Bewertungen

- Objectives and ScopeDokument9 SeitenObjectives and Scopedewang goelNoch keine Bewertungen

- Objectives and ScopeDokument8 SeitenObjectives and Scopedewang goelNoch keine Bewertungen

- Final Bug Tracking 02-05-2010Dokument108 SeitenFinal Bug Tracking 02-05-2010atukbaraza100% (2)

- SRS Use Case StudyDokument6 SeitenSRS Use Case StudyBob CotNoch keine Bewertungen

- Health Checking On Load BalancersDokument119 SeitenHealth Checking On Load BalancersElango SPNoch keine Bewertungen

- 2019.12.16 RFO B-Hive Dial Delay and Call ProcessingDokument2 Seiten2019.12.16 RFO B-Hive Dial Delay and Call Processingdamon.praterNoch keine Bewertungen

- Chapter 6 - ImplementationDokument8 SeitenChapter 6 - ImplementationtadiwaNoch keine Bewertungen

- Sample Introduction and ObjectivesDokument9 SeitenSample Introduction and Objectivesyumira khateNoch keine Bewertungen

- Hema.V Sikkim Manipal University - DE Reg:No 511119346 Subject: MC0071-Software Engineering. Book ID: B0808 & B0809 Assignment 1 & 2Dokument25 SeitenHema.V Sikkim Manipal University - DE Reg:No 511119346 Subject: MC0071-Software Engineering. Book ID: B0808 & B0809 Assignment 1 & 2Hema Sudarshan VNoch keine Bewertungen

- OnlineExaminationQuiz/Report/Final Online Examination Porject ReportDokument71 SeitenOnlineExaminationQuiz/Report/Final Online Examination Porject ReportAnjan HRNoch keine Bewertungen

- Uploads File White Paper 1 Physical Versus Virtual AppliancesDokument6 SeitenUploads File White Paper 1 Physical Versus Virtual Appliancesbeejohn4Noch keine Bewertungen

- Spring CloudDokument27 SeitenSpring CloudAnuj TripathiNoch keine Bewertungen

- Minimalist Box Grid Project Marketing ProposalDokument3 SeitenMinimalist Box Grid Project Marketing ProposalShaikh Ahsan AliNoch keine Bewertungen

- Non Functional Testing of Web Applications: Let Us Discuss Each Types of These Testings in DetailDokument9 SeitenNon Functional Testing of Web Applications: Let Us Discuss Each Types of These Testings in Detailneovik82100% (1)

- Automated SystemDokument81 SeitenAutomated SystemDeepak GargNoch keine Bewertungen

- Electricity Billing SystemDokument4 SeitenElectricity Billing SystemMohith Reddy100% (2)

- Error Tracking1Dokument92 SeitenError Tracking1techcare123Noch keine Bewertungen

- MicroService Project Interview QuestionDokument5 SeitenMicroService Project Interview QuestionRadheshyam NayakNoch keine Bewertungen

- Ebilling and Invoice System Test Plan: ObjectiveDokument5 SeitenEbilling and Invoice System Test Plan: ObjectiveMishra Shubham SSNoch keine Bewertungen

- SystemDokument4 SeitenSystemjak204Noch keine Bewertungen

- Comp InvestigatoryDokument31 SeitenComp Investigatorygolumolu.0411Noch keine Bewertungen

- Oracle Hyperion Epm 11-1-2 Installation Configuration 11 1 2 4Dokument5 SeitenOracle Hyperion Epm 11-1-2 Installation Configuration 11 1 2 4Ba HaniyaNoch keine Bewertungen

- HP Loadrunner PitfallsDokument2 SeitenHP Loadrunner PitfallsaustinfruNoch keine Bewertungen

- IntegrationServer Readme 9-12 PDFDokument174 SeitenIntegrationServer Readme 9-12 PDFvarma_43Noch keine Bewertungen

- Updated System Assignment MeloDokument3 SeitenUpdated System Assignment Melokyle meloNoch keine Bewertungen

- Important Interview QuestionsDokument22 SeitenImportant Interview Questionsmadhu_devu9837Noch keine Bewertungen

- Creating FT View SE Station BackupDokument1 SeiteCreating FT View SE Station BackupBryn WilliamsNoch keine Bewertungen

- Gridmpc: A Service-Oriented Grid Architecture For Coupling Simulation and Control of Industrial SystemsDokument8 SeitenGridmpc: A Service-Oriented Grid Architecture For Coupling Simulation and Control of Industrial SystemsIrfan Akbar BarbarossaNoch keine Bewertungen

- Test Plan: Lecture 5: Week 5 SEN 460: FALL 2016Dokument23 SeitenTest Plan: Lecture 5: Week 5 SEN 460: FALL 2016zombiee hookNoch keine Bewertungen

- SW Engineering NotesDokument4 SeitenSW Engineering NotesRamu EppalaNoch keine Bewertungen

- Integrating ISA Server 2006 with Microsoft Exchange 2007Von EverandIntegrating ISA Server 2006 with Microsoft Exchange 2007Noch keine Bewertungen

- Small Business Server 2008: Installation, Migration, and ConfigurationVon EverandSmall Business Server 2008: Installation, Migration, and ConfigurationNoch keine Bewertungen

- Component Retrieving Tools: CautionDokument3 SeitenComponent Retrieving Tools: CautionLunarNoch keine Bewertungen

- Grim Story XVDokument1 SeiteGrim Story XVLunarNoch keine Bewertungen

- Antistatic Mat (2.2.4.2) : ScrewsDokument1 SeiteAntistatic Mat (2.2.4.2) : ScrewsLunarNoch keine Bewertungen

- Grim Story XIDokument1 SeiteGrim Story XILunarNoch keine Bewertungen

- Demonstrate Proper Tool Use (2.2.4) : Antistatic Wrist Strap (2.2.4.1)Dokument1 SeiteDemonstrate Proper Tool Use (2.2.4) : Antistatic Wrist Strap (2.2.4.1)LunarNoch keine Bewertungen

- Grim Story IXDokument1 SeiteGrim Story IXLunarNoch keine Bewertungen

- Grim Story XDokument1 SeiteGrim Story XLunarNoch keine Bewertungen

- What Is A CD-ROMDokument2 SeitenWhat Is A CD-ROMLunarNoch keine Bewertungen

- Computer Repair Tech 3: The ProfessionalsDokument1 SeiteComputer Repair Tech 3: The ProfessionalsLunarNoch keine Bewertungen

- Proper Use of Tools (2.2)Dokument1 SeiteProper Use of Tools (2.2)LunarNoch keine Bewertungen

- Fixing Large Drive ProblemsDokument1 SeiteFixing Large Drive ProblemsLunarNoch keine Bewertungen

- Increases in CD-ROM SpeedDokument1 SeiteIncreases in CD-ROM SpeedLunarNoch keine Bewertungen

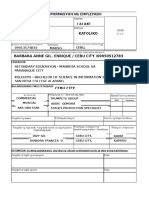

- Lalaki Katoliko Cebu City, Enrique MariDokument1 SeiteLalaki Katoliko Cebu City, Enrique MariLunarNoch keine Bewertungen

- DL 14aDokument5 SeitenDL 14aLunar100% (1)

- Module Outcomes SymbianDokument1 SeiteModule Outcomes SymbianLunarNoch keine Bewertungen

- I Use Scribd Because It's The Best Place To Find High Quality Information and Share It With A Global AudienceDokument1 SeiteI Use Scribd Because It's The Best Place To Find High Quality Information and Share It With A Global AudienceLunarNoch keine Bewertungen

- What Is Literature?Dokument3 SeitenWhat Is Literature?LunarNoch keine Bewertungen

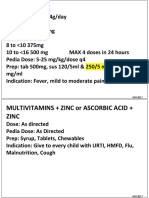

- CNS Drugs Pharmaceutical Form Therapeutic Group: 6mg, 8mgDokument7 SeitenCNS Drugs Pharmaceutical Form Therapeutic Group: 6mg, 8mgCha GabrielNoch keine Bewertungen

- RCPI V VerchezDokument2 SeitenRCPI V VerchezCin100% (1)

- The Magical Number SevenDokument3 SeitenThe Magical Number SevenfazlayNoch keine Bewertungen

- RH Control - SeracloneDokument2 SeitenRH Control - Seraclonewendys rodriguez, de los santosNoch keine Bewertungen

- MSC Nastran 20141 Install GuideDokument384 SeitenMSC Nastran 20141 Install Guiderrmerlin_2Noch keine Bewertungen

- Common RHU DrugsDokument56 SeitenCommon RHU DrugsAlna Shelah IbañezNoch keine Bewertungen

- Sample Programs in CDokument37 SeitenSample Programs in CNoel JosephNoch keine Bewertungen

- God As CreatorDokument2 SeitenGod As CreatorNeil MayorNoch keine Bewertungen

- Cloze Tests 2Dokument8 SeitenCloze Tests 2Tatjana StijepovicNoch keine Bewertungen

- Power System Planning Lec5aDokument15 SeitenPower System Planning Lec5aJoyzaJaneJulaoSemillaNoch keine Bewertungen

- Acc 106 Ebook Answer Topic 4Dokument13 SeitenAcc 106 Ebook Answer Topic 4syifa azhari 3BaNoch keine Bewertungen

- Quiz 3 Indigenous People in The PhilippinesDokument6 SeitenQuiz 3 Indigenous People in The PhilippinesMa Mae NagaNoch keine Bewertungen

- Automated Long-Distance HADR ConfigurationsDokument73 SeitenAutomated Long-Distance HADR ConfigurationsKan DuNoch keine Bewertungen

- Statistical TestsDokument47 SeitenStatistical TestsUche Nwa ElijahNoch keine Bewertungen

- Reviewer in Auditing Problems by Reynaldo Ocampo PDFDokument1 SeiteReviewer in Auditing Problems by Reynaldo Ocampo PDFCarlo BalinoNoch keine Bewertungen

- Chapter 5Dokument24 SeitenChapter 5Tadi SaiNoch keine Bewertungen

- Chapter 5, 6Dokument4 SeitenChapter 5, 6anmar ahmedNoch keine Bewertungen

- Chapter 3 SIP MethodologyDokument43 SeitenChapter 3 SIP MethodologyMáxyne NalúalNoch keine Bewertungen

- Ped Xi Chapter - 3Dokument15 SeitenPed Xi Chapter - 3DebmalyaNoch keine Bewertungen

- San Beda Alabang School of Law: Syllabus inDokument3 SeitenSan Beda Alabang School of Law: Syllabus inLucia DielNoch keine Bewertungen

- Vocabulary Ladders - Grade 3 - Degree of FamiliarityDokument6 SeitenVocabulary Ladders - Grade 3 - Degree of FamiliarityfairfurNoch keine Bewertungen

- CB Insights Venture Report 2021Dokument273 SeitenCB Insights Venture Report 2021vulture212Noch keine Bewertungen

- Partnership & Corporation: 2 SEMESTER 2020-2021Dokument13 SeitenPartnership & Corporation: 2 SEMESTER 2020-2021Erika BucaoNoch keine Bewertungen

- ProfessionalresumeDokument1 SeiteProfessionalresumeapi-299002718Noch keine Bewertungen

- 20 Great American Short Stories: Favorite Short Story Collections The Short Story LibraryDokument10 Seiten20 Great American Short Stories: Favorite Short Story Collections The Short Story Librarywileyh100% (1)