Data Warehousing Concepts

Uploaded by Urs Bargav Here

Several concepts are of particular importance to data warehousing. They are discussed in detail in this section.

Dimensional Data Model: The dimensional data model is commonly used in data warehousing systems. This section describes this modeling technique and the two common schema types, star schema and snowflake schema.

Slowly Changing Dimension: This is a common issue facing data warehousing practitioners. This section explains the problem and describes the three ways of handling it, with examples.

Conceptual Data Model: What a conceptual data model is, its features, and an example of this type of data model.

Logical Data Model: What a logical data model is, its features, and an example of this type of data model.

Physical Data Model: What a physical data model is, its features, and an example of this type of data model.

Conceptual, Logical, and Physical Data Model: Different levels of abstraction for a data model. This section compares and contrasts the three types of data models.

Data Integrity: What data integrity is and how it is enforced in data warehousing.

What is OLAP: Definition of OLAP.

MOLAP, ROLAP, and HOLAP: What are these different types of OLAP technology? This section discusses how they differ from one another, and the advantages and disadvantages of each.

Bill Inmon vs. Ralph Kimball: These two data warehousing heavyweights have different views of the relationship between the data warehouse and the data mart.

STAR SCHEMA:

In the star schema design, a single object (the fact table) sits in the middle and is radially connected to other surrounding objects (dimension lookup tables) like a star. Each dimension is represented as a single table. The primary key in each dimension table is related to a foreign key in the fact table.

Sample star schema

All measures in the fact table are related to all the dimensions that the fact table is related to. In other words, they all have the same level of granularity. A star schema can be simple or complex. A simple star consists of one fact table; a complex star can have more than one fact table.

Let's look at an example: Assume our data warehouse keeps store sales data, and the different dimensions are time, store, product, and customer. In this case, the sample star schema figure represents our star schema. The lines between two tables indicate that there is a primary key / foreign key relationship between the two tables. Note that different dimensions are not related to one another.
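The store sales example above can be sketched with Python's built-in sqlite3 module. This is only an illustration under stated assumptions: the table names (dim_time, dim_store, dim_product, dim_customer, fact_sales), the column names, and the sample rows are hypothetical and do not come from the original figure.

```python
import sqlite3

# One fact table in the middle, four dimension tables around it.
# All table and column names here are hypothetical.
STAR_DDL = """
CREATE TABLE dim_time     (time_key     INTEGER PRIMARY KEY, day TEXT);
CREATE TABLE dim_store    (store_key    INTEGER PRIMARY KEY, store_name TEXT);
CREATE TABLE dim_product  (product_key  INTEGER PRIMARY KEY, product_name TEXT);
CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, customer_name TEXT);
CREATE TABLE fact_sales (
    time_key     INTEGER REFERENCES dim_time(time_key),
    store_key    INTEGER REFERENCES dim_store(store_key),
    product_key  INTEGER REFERENCES dim_product(product_key),
    customer_key INTEGER REFERENCES dim_customer(customer_key),
    sales_amount REAL
);
"""

def build_star(conn):
    """Create the schema and load a few made-up rows."""
    conn.executescript(STAR_DDL)
    conn.executemany("INSERT INTO dim_time VALUES (?, ?)",
                     [(1, "2003-01-15"), (2, "2003-01-16")])
    conn.executemany("INSERT INTO dim_store VALUES (?, ?)",
                     [(1, "Downtown"), (2, "Airport")])
    conn.executemany("INSERT INTO dim_product VALUES (?, ?)",
                     [(1, "Shoes"), (2, "Hats")])
    conn.executemany("INSERT INTO dim_customer VALUES (?, ?)",
                     [(1, "Christina")])
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?, ?, ?)",
                     [(1, 1, 1, 1, 100.0),
                      (1, 2, 1, 1, 50.0),
                      (2, 1, 2, 1, 25.0)])
    conn.commit()

def sales_by_store(conn):
    """Join the fact table to one dimension and aggregate the measure."""
    return conn.execute("""
        SELECT s.store_name, SUM(f.sales_amount)
        FROM fact_sales AS f
        JOIN dim_store AS s ON s.store_key = f.store_key
        GROUP BY s.store_name
        ORDER BY s.store_name
    """).fetchall()

if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    build_star(conn)
    print(sales_by_store(conn))
```

Each dimension joins only to the fact table, never to another dimension, which matches the note above that different dimensions are not related to one another.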

SNOWFLAKE SCHEMA:

The snowflake schema is an extension of the star schema, where each point of the star explodes into more points. In a star schema, each dimension is represented by a single dimensional table, whereas in a snowflake schema, that dimensional table is normalized into multiple lookup tables, each representing a level in the dimensional hierarchy.

Sample snowflake schema

For example, consider a Time Dimension that consists of two different hierarchies:

1. Year → Month → Day
2. Week → Day

We will have four lookup tables in a snowflake schema: a lookup table for year, a lookup table for month, a lookup table for week, and a lookup table for day. Year is connected to Month, which is then connected to Day. Week is only connected to Day. A sample snowflake schema illustrating the above relationships in the Time Dimension is shown in the figure above.

The main advantage of the snowflake schema is the improvement in query performance due to minimized disk storage requirements and joining smaller lookup tables. The main disadvantage of the snowflake schema is the additional maintenance effort needed due to the increased number of lookup tables.
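The four lookup tables of the Time Dimension can be sketched as follows, again using sqlite3. The table and column names (lookup_year, lookup_month, lookup_week, lookup_day and their keys) are assumptions made for illustration, not names from the original figure.

```python
import sqlite3

# Snowflaked Time Dimension: Year -> Month -> Day, and Week -> Day.
# All names are hypothetical.
SNOWFLAKE_TIME_DDL = """
CREATE TABLE lookup_year  (year_key  INTEGER PRIMARY KEY, year INTEGER);
CREATE TABLE lookup_month (month_key INTEGER PRIMARY KEY, month_name TEXT,
                           year_key  INTEGER REFERENCES lookup_year(year_key));
CREATE TABLE lookup_week  (week_key  INTEGER PRIMARY KEY, week_label TEXT);
CREATE TABLE lookup_day   (day_key   INTEGER PRIMARY KEY, day_date TEXT,
                           month_key INTEGER REFERENCES lookup_month(month_key),
                           week_key  INTEGER REFERENCES lookup_week(week_key));
"""

def build_time_dimension(conn):
    """Create the lookup tables and load one made-up day."""
    conn.executescript(SNOWFLAKE_TIME_DDL)
    conn.execute("INSERT INTO lookup_year VALUES (1, 2003)")
    conn.execute("INSERT INTO lookup_month VALUES (1, 'January', 1)")
    conn.execute("INSERT INTO lookup_week VALUES (1, '2003-W03')")
    conn.execute("INSERT INTO lookup_day VALUES (1, '2003-01-15', 1, 1)")
    conn.commit()

def year_of_day(conn, day_date):
    """Walk the Year -> Month -> Day hierarchy from the day upward."""
    row = conn.execute("""
        SELECT y.year
        FROM lookup_day AS d
        JOIN lookup_month AS m ON m.month_key = d.month_key
        JOIN lookup_year  AS y ON y.year_key  = m.year_key
        WHERE d.day_date = ?
    """, (day_date,)).fetchone()
    return row[0] if row else None

def week_of_day(conn, day_date):
    """Week is connected only to Day, so one join is enough."""
    row = conn.execute("""
        SELECT w.week_label
        FROM lookup_day AS d
        JOIN lookup_week AS w ON w.week_key = d.week_key
        WHERE d.day_date = ?
    """, (day_date,)).fetchone()
    return row[0] if row else None
```

Reaching the year from a day takes two joins here, whereas a star schema would read it from a single time dimension table.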

Dimensional Data Model:

The dimensional data model is most often used in data warehousing systems. This is different from the 3rd normal form, commonly used for transactional (OLTP) type systems. As you can imagine, the same data would then be stored differently in a dimensional model than in a 3rd normal form model.

To understand dimensional data modeling, let's define some of the terms commonly used in this type of modeling:

Dimension: A category of information. For example, the time dimension.

Attribute: A unique level within a dimension. For example, Month is an attribute in the Time Dimension.

Hierarchy: The specification of levels that represents the relationship between different attributes within a dimension. For example, one possible hierarchy in the Time dimension is Year → Quarter → Month → Day.

Fact Table: A fact table is a table that contains the measures of interest. For example, sales amount would be such a measure. This measure is stored in the fact table with the appropriate granularity. For example, it can be sales amount by store by day. In this case, the fact table would contain three columns: a date column, a store column, and a sales amount column.

Lookup Table: The lookup table provides the detailed information about the attributes. For example, the lookup table for the Quarter attribute would include a list of all of the quarters available in the data warehouse. Each row (each quarter) may have several fields, one for the unique ID that identifies the quarter, and one or more additional fields that specify how that particular quarter is represented on a report (for example, first quarter of 2001 may be represented as "Q1 2001" or "2001 Q1").
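As a rough sketch of the Fact Table and Lookup Table definitions above, the following Python snippet keeps a three-column fact table (date, store, sales amount) and a Quarter lookup table with one ID and two report labels per quarter. The quarter ID scheme (year times ten plus quarter number), the field names, and the sample figures are assumptions made for this illustration.

```python
from collections import defaultdict
from datetime import date

# Lookup table for the Quarter attribute: a unique ID plus two ways of
# showing the quarter on a report. IDs and field names are hypothetical.
QUARTER_LOOKUP = {
    20011: {"label_q_first": "Q1 2001", "label_year_first": "2001 Q1"},
    20012: {"label_q_first": "Q2 2001", "label_year_first": "2001 Q2"},
}

# Fact table at the granularity "sales amount by store by day".
FACT_SALES = [
    (date(2001, 1, 15), "Store A", 100.0),
    (date(2001, 2, 3), "Store B", 40.0),
    (date(2001, 4, 20), "Store A", 75.0),
]

def quarter_id(day):
    """Map a calendar day to the assumed quarter ID, e.g. 20011."""
    return day.year * 10 + (day.month - 1) // 3 + 1

def sales_by_quarter(facts, lookup, label_field="label_q_first"):
    """Roll the daily measure up to the Quarter level of the hierarchy."""
    totals = defaultdict(float)
    for day, _store, amount in facts:
        label = lookup[quarter_id(day)][label_field]
        totals[label] += amount
    return dict(totals)

if __name__ == "__main__":
    print(sales_by_quarter(FACT_SALES, QUARTER_LOOKUP))
    print(sales_by_quarter(FACT_SALES, QUARTER_LOOKUP, "label_year_first"))
```

Changing how a quarter appears on a report only means picking a different label field in the lookup table; the fact rows stay the same.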

A dimensional model includes fact tables and lookup tables. Fact tables connect to one or more lookup tables, but fact tables do not have direct relationships to one another. Dimensions and hierarchies are represented by lookup tables. Attributes are the non-key columns in the lookup tables. In designing data models for data warehouses / data marts, the most commonly used schema types are Star Schema and Snowflake Schema. Whether one uses a star or a snowflake largely depends on personal preference and business needs. Personally, I am partial to snowflakes, when there is a business case to analyze the information at that particular level.

Slowly Changing Dimensions:

The "Slowly Changing Dimension" problem is a common one particular to data warehousing. In a nutshell, this applies to cases where the attribute for a record varies over time. We give an example below:

Christina is a customer with ABC Inc. She first lived in Chicago, Illinois. So, the original entry in the customer lookup table has the following record:

Customer Key    Name         State
1001            Christina    Illinois

At a later date, in January 2003, she moved to Los Angeles, California. How should ABC Inc. now modify its customer table to reflect this change? This is the "Slowly Changing Dimension" problem.

There are in general three ways to solve this type of problem, and they are categorized as follows:

Type 1: The new record replaces the original record. No trace of the old record exists.

Type 2: A new record is added into the customer dimension table. Therefore, the customer is treated essentially as two people.

Type 3: The original record is modified to reflect the change.

We next take a look at each of the scenarios and at what the data model and the data look like for each of them. Finally, we compare and contrast the three alternatives.
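The three types can be sketched in plain Python on the Christina record. This is a minimal illustration, not a definitive implementation: the new surrogate key 1005 and the Type 3 column names (original_state, current_state, effective_date) are assumptions based on common practice, since the text above does not specify them.

```python
import copy

# The original customer lookup row from the example above.
ORIGINAL = [{"customer_key": 1001, "name": "Christina", "state": "Illinois"}]

def scd_type1(rows, name, new_state):
    """Type 1: overwrite the value in place; no history is kept."""
    out = copy.deepcopy(rows)
    for row in out:
        if row["name"] == name:
            row["state"] = new_state
    return out

def scd_type2(rows, name, new_state, new_key):
    """Type 2: keep the old row and add a new row under a new key."""
    out = copy.deepcopy(rows)
    out.append({"customer_key": new_key, "name": name, "state": new_state})
    return out

def scd_type3(rows, name, new_state, effective_date):
    """Type 3: keep one row, with columns for the original and current value.

    The extra column names are an assumption for this sketch.
    """
    out = []
    for row in copy.deepcopy(rows):
        if row["name"] == name:
            row = {
                "customer_key": row["customer_key"],
                "name": row["name"],
                "original_state": row["state"],
                "current_state": new_state,
                "effective_date": effective_date,
            }
        out.append(row)
    return out

if __name__ == "__main__":
    print(scd_type1(ORIGINAL, "Christina", "California"))
    print(scd_type2(ORIGINAL, "Christina", "California", new_key=1005))
    print(scd_type3(ORIGINAL, "Christina", "California", "2003-01"))
```

Type 1 leaves one row with no trace of Illinois, Type 2 leaves two rows for the same person, and Type 3 leaves one row that remembers only the original and the current state.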

Conceptual Data Model:

A conceptual data model identifies the highest-level relationships between the different entities. Features of a conceptual data model include:

It includes the important entities and the relationships among them.

No attribute is specified.

No primary key is specified.

The figure below is an example of a conceptual data model.

Conceptual Data Model

From the figure above, we can see that the only information shown via the conceptual data model is the entities that describe the data and the relationships between those entities. No other information is shown through the conceptual data model.

Data integrity:

Data integrity refers to the validity of data, meaning data is consistent and correct. In the data warehousing field, we frequently hear the term "Garbage In, Garbage Out." If there is no data integrity in the data warehouse, any resulting report and analysis will not be useful. In a data warehouse or a data mart, there are three areas where data integrity needs to be enforced:

Database level

We can enforce data integrity at the database level. Common ways of enforcing data integrity include:

Referential integrity: The relationship between the primary key of one table and the foreign key of another table must always be maintained. For example, a primary key cannot be deleted if there is still a foreign key that refers to this primary key.

Primary key / Unique constraint: Primary keys and the UNIQUE constraint are used to make sure every row in a table can be uniquely identified.

Not NULL vs. NULL-able: Columns identified as NOT NULL may not have a NULL value.

Valid values: Only allowed values are permitted in the database. For example, if a column can only have positive integers, a value of '-1' cannot be allowed.

ETL process

For each step of the ETL process, data integrity checks should be put in place to ensure that source data is the same as the data in the destination. The most common checks include record counts and record sums.

Access level

We need to ensure that data is not altered by any unauthorized means, either during the ETL process or in the data warehouse. To do this, there need to be safeguards against unauthorized access to data (including physical access to the servers), as well as logging of all data access history. Data integrity can only be ensured if there is no unauthorized access to the data.
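The database-level rules and the ETL record count and sum checks can be sketched with sqlite3. The schema (dim_store, fact_sales) and the helper names are hypothetical. Note that SQLite enforces foreign keys only after PRAGMA foreign_keys is switched on for the connection; other databases behave differently.

```python
import sqlite3

def make_db():
    """Build a tiny schema that uses each database-level rule once."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # off by default in SQLite
    conn.executescript("""
        CREATE TABLE dim_store (
            store_key  INTEGER PRIMARY KEY,           -- primary key
            store_name TEXT NOT NULL UNIQUE           -- NOT NULL + UNIQUE
        );
        CREATE TABLE fact_sales (
            store_key INTEGER NOT NULL
                      REFERENCES dim_store(store_key), -- referential integrity
            quantity  INTEGER NOT NULL
                      CHECK (quantity > 0)             -- valid values only
        );
        INSERT INTO dim_store VALUES (1, 'Downtown');
        INSERT INTO fact_sales VALUES (1, 3);
    """)
    return conn

def is_rejected(conn, sql, params=()):
    """Return True if the database refuses the statement."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(sql, params)
    except sqlite3.IntegrityError:
        return True
    return False

def etl_counts_and_sums_match(source_rows, dest_rows):
    """ETL check: same record count and same sum of the quantity field."""
    same_count = len(source_rows) == len(dest_rows)
    same_sum = (sum(qty for _key, qty in source_rows)
                == sum(qty for _key, qty in dest_rows))
    return same_count and same_sum

if __name__ == "__main__":
    conn = make_db()
    # A fact row pointing at a store that does not exist is refused.
    print(is_rejected(conn, "INSERT INTO fact_sales VALUES (?, ?)", (99, 1)))
    # A negative quantity violates the CHECK constraint.
    print(is_rejected(conn, "INSERT INTO fact_sales VALUES (?, ?)", (1, -1)))
```

Record counts and sums catch dropped or duplicated rows, but two loads can match on both and still differ row by row, so they are a first check rather than a proof of equality.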

OLAP:

OLAP stands for On-Line Analytical Processing. The first attempt to provide a definition of OLAP was by Dr. Codd, who proposed 12 rules for OLAP. Later, it was discovered that this particular white paper was sponsored by one of the OLAP tool vendors, thus causing it to lose objectivity. The OLAP Report has proposed the FASMI test: Fast Analysis of Shared Multidimensional Information. For a more detailed description of both Dr. Codd's rules and the FASMI test, please visit The OLAP Report.

For people on the business side, the key feature out of the above list is "Multidimensional." In other words, the ability to analyze metrics in different dimensions such as time, geography, gender, product, etc. For example, sales for the company are up. What region is most responsible for this increase? Which store in this region is most responsible for the increase? What particular product category or categories contributed the most to the increase? Answering these types of questions in order means that you are performing an OLAP analysis.

Depending on the underlying technology used, OLAP can be broadly divided into two different camps: MOLAP and ROLAP. A discussion of the different OLAP types can be found in the MOLAP, ROLAP, and HOLAP section.
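The chain of questions above (which region, then which store, then which product category) amounts to a drill-down along dimensions. A small sketch in plain Python follows; the sales figures, region names, and store names are made up for illustration, and a real OLAP tool would run this against a cube or a relational store rather than a list.

```python
from collections import defaultdict

FIELDS = ("region", "store", "category")

# (region, store, category, last_year_sales, this_year_sales): made-up data.
SALES = [
    ("West", "LA-1", "Shoes", 100, 180),
    ("West", "LA-1", "Hats", 50, 55),
    ("West", "SF-1", "Shoes", 80, 90),
    ("East", "NY-1", "Shoes", 120, 125),
    ("East", "NY-1", "Hats", 60, 58),
]

def increase_by(rows, level, **filters):
    """Sum the year-over-year increase per member of one dimension level.

    Keyword filters restrict the rows first, e.g. region="West".
    """
    idx = FIELDS.index(level)
    totals = defaultdict(int)
    for row in rows:
        dims = dict(zip(FIELDS, row[:3]))
        if all(dims[key] == value for key, value in filters.items()):
            totals[row[idx]] += row[4] - row[3]
    return dict(totals)

def top(totals):
    """Return the member with the largest increase."""
    return max(totals, key=totals.get)

if __name__ == "__main__":
    region = top(increase_by(SALES, "region"))
    store = top(increase_by(SALES, "store", region=region))
    category = top(increase_by(SALES, "category", store=store))
    print(region, store, category)
```

Each step narrows the previous answer by one more dimension, which is what makes the analysis multidimensional.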

Buy vs. Build

Only in the rarest of cases does it make sense to build a metadata tool from scratch. This is because doing so requires resources that are intimately familiar with the operational, technical, and business aspects of the data warehouse system, and such resources are difficult to come by. Even when such resources are available, there are often other tasks that can provide more value to the organization than building a metadata tool from scratch.

In fact, the question is often whether any type of metadata tool is needed at all. Although metadata plays an extremely important role in a successful data warehousing implementation, this does not always mean that a tool is needed to keep all the "data about data." It is possible to, say, keep such information in the repository of other tools used, in text documentation, or even in a presentation or a spreadsheet.

Having said the above, though, it is the author's belief that having a solid metadata foundation is one of the keys to the success of a data warehousing project. Therefore, even if a metadata tool is not selected at the beginning of the project, it is essential to have a metadata strategy; that is, a plan for how metadata in the data warehousing system will be stored.

Metadata Tool Functionalities

This is the most difficult tool to choose, because there is clearly no standard. In fact, it might be better to call this a selection of the metadata strategy. Traditionally, people have put the data modeling information into a tool such as ERwin or Oracle Designer, but it is difficult to extract information out of such data modeling tools. For example, one of the goals for your metadata selection is to provide information to the end users. Clearly this is a difficult task with a data modeling tool. So typically what is likely to happen is that additional effort is spent to create a layer of metadata that is aimed at the end users. While this allows the end users to gain the required insight into what the data and reports they are looking at mean, it is clearly inefficient because all that information already resides somewhere in the data warehouse system, whether it be the ETL tool, the data modeling tool, the OLAP tool, or the reporting tool.

There are efforts among data warehousing tool vendors to unify on a metadata model. In June of 2000, the OMG released a metadata standard called CWM (Common Warehouse Metamodel), and some of the vendors such as Oracle have claimed to have implemented it. This standard incorporates the latest technology such as XML, UML, and SOAP, and, if accepted widely, is truly the best thing that can happen to the data warehousing industry. As of right now, though, the author has not really seen that many tools leveraging this standard, so clearly it has not quite caught on yet.

So what does this mean for your metadata efforts? In the absence of everything else, I would recommend that whatever tool you choose for your metadata support supports XML, and that whatever other tool needs to leverage the metadata also supports XML. Then it is a matter of defining your DTD across your data warehousing system. At the same time, there is no need to worry about criteria that are typically important for the other tools, such as performance and support for parallelism, because the size of the metadata is typically small relative to the size of the data warehouse.
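To make the XML recommendation concrete, here is a minimal sketch of exchanging table metadata as XML using Python's standard xml.etree.ElementTree module. The element and attribute names (table, column, name) are hypothetical and are not taken from CWM or from any particular tool; in practice they would be whatever your agreed DTD defines.

```python
import xml.etree.ElementTree as ET

def metadata_to_xml(table_name, columns):
    """Serialize (column name, business description) pairs to XML text."""
    root = ET.Element("table", name=table_name)
    for column_name, description in columns:
        column = ET.SubElement(root, "column", name=column_name)
        column.text = description
    return ET.tostring(root, encoding="unicode")

def metadata_from_xml(xml_text):
    """Read the same structure back, as another tool would."""
    root = ET.fromstring(xml_text)
    columns = [(c.get("name"), c.text) for c in root.findall("column")]
    return root.get("name"), columns

if __name__ == "__main__":
    xml_text = metadata_to_xml(
        "fact_sales",
        [("sales_amount", "Sales amount by store by day"),
         ("store_key", "Key of the store lookup table")],
    )
    print(xml_text)
    print(metadata_from_xml(xml_text))
```

One tool writes the descriptions and another reads them back without either depending on the other's internal repository, which is the point of agreeing on a shared XML structure.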

Das könnte Ihnen auch gefallen

- Shoe Dog: A Memoir by the Creator of NikeVon EverandShoe Dog: A Memoir by the Creator of NikeBewertung: 4.5 von 5 Sternen4.5/5 (537)

- Chris Baeza: ObjectiveDokument2 SeitenChris Baeza: Objectiveapi-283893712Noch keine Bewertungen

- The Yellow House: A Memoir (2019 National Book Award Winner)Von EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Bewertung: 4 von 5 Sternen4/5 (98)

- Reseller Handbook 2019 v1Dokument16 SeitenReseller Handbook 2019 v1Jennyfer SantosNoch keine Bewertungen

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeVon EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeBewertung: 4 von 5 Sternen4/5 (5794)

- LESSON 1: What Is Social Studies?: ObjectivesDokument15 SeitenLESSON 1: What Is Social Studies?: ObjectivesRexson Dela Cruz Taguba100% (1)

- Doloran Auxilliary PrayersDokument4 SeitenDoloran Auxilliary PrayersJosh A.Noch keine Bewertungen

- The Little Book of Hygge: Danish Secrets to Happy LivingVon EverandThe Little Book of Hygge: Danish Secrets to Happy LivingBewertung: 3.5 von 5 Sternen3.5/5 (400)

- Chapter 1Dokument6 SeitenChapter 1Alyssa DuranoNoch keine Bewertungen

- Grit: The Power of Passion and PerseveranceVon EverandGrit: The Power of Passion and PerseveranceBewertung: 4 von 5 Sternen4/5 (588)

- Makalsh CMDDokument22 SeitenMakalsh CMDMaudy Rahmi HasniawatiNoch keine Bewertungen

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureVon EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureBewertung: 4.5 von 5 Sternen4.5/5 (474)

- VlsiDokument216 SeitenVlsisenthil_5Noch keine Bewertungen

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryVon EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryBewertung: 3.5 von 5 Sternen3.5/5 (231)

- BS Assignment Brief - Strategic Planning AssignmentDokument8 SeitenBS Assignment Brief - Strategic Planning AssignmentParker Writing ServicesNoch keine Bewertungen

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceVon EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceBewertung: 4 von 5 Sternen4/5 (895)

- Ais Activiy Chapter 5 PDFDokument4 SeitenAis Activiy Chapter 5 PDFAB CloydNoch keine Bewertungen

- Team of Rivals: The Political Genius of Abraham LincolnVon EverandTeam of Rivals: The Political Genius of Abraham LincolnBewertung: 4.5 von 5 Sternen4.5/5 (234)

- Boxnhl MBS (Design-D) Check SheetDokument13 SeitenBoxnhl MBS (Design-D) Check SheetKumari SanayaNoch keine Bewertungen

- Never Split the Difference: Negotiating As If Your Life Depended On ItVon EverandNever Split the Difference: Negotiating As If Your Life Depended On ItBewertung: 4.5 von 5 Sternen4.5/5 (838)

- Razom For UkraineDokument16 SeitenRazom For UkraineАняNoch keine Bewertungen

- The Emperor of All Maladies: A Biography of CancerVon EverandThe Emperor of All Maladies: A Biography of CancerBewertung: 4.5 von 5 Sternen4.5/5 (271)

- Dermatome, Myotome, SclerotomeDokument4 SeitenDermatome, Myotome, SclerotomeElka Rifqah100% (3)

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaVon EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaBewertung: 4.5 von 5 Sternen4.5/5 (266)

- Blue Mountain Coffee Case (ADBUDG)Dokument16 SeitenBlue Mountain Coffee Case (ADBUDG)Nuria Sánchez Celemín100% (1)

- On Fire: The (Burning) Case for a Green New DealVon EverandOn Fire: The (Burning) Case for a Green New DealBewertung: 4 von 5 Sternen4/5 (74)

- GK-604D Digital Inclinometer System PDFDokument111 SeitenGK-604D Digital Inclinometer System PDFKael CabezasNoch keine Bewertungen

- Sections 3 7Dokument20 SeitenSections 3 7ninalgamaryroseNoch keine Bewertungen

- The Unwinding: An Inner History of the New AmericaVon EverandThe Unwinding: An Inner History of the New AmericaBewertung: 4 von 5 Sternen4/5 (45)

- Teruhisa Morishige: Mazda Engineering StandardDokument9 SeitenTeruhisa Morishige: Mazda Engineering Standardmohammad yazdanpanahNoch keine Bewertungen

- Story of Their Lives: Lived Experiences of Parents of Children With Special Needs Amidst The PandemicDokument15 SeitenStory of Their Lives: Lived Experiences of Parents of Children With Special Needs Amidst The PandemicPsychology and Education: A Multidisciplinary JournalNoch keine Bewertungen

- Classroom Management PaperDokument7 SeitenClassroom Management PaperdessyutamiNoch keine Bewertungen

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersVon EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersBewertung: 4.5 von 5 Sternen4.5/5 (345)

- RK Modul 2 PDFDokument41 SeitenRK Modul 2 PDFRUSTIANI WIDIASIHNoch keine Bewertungen

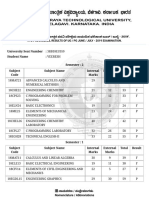

- VTU Result PDFDokument2 SeitenVTU Result PDFVaibhavNoch keine Bewertungen

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyVon EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyBewertung: 3.5 von 5 Sternen3.5/5 (2259)

- Sexuality Disorders Lecture 2ND Sem 2020Dokument24 SeitenSexuality Disorders Lecture 2ND Sem 2020Moyty MoyNoch keine Bewertungen

- Aleister Crowley Astrological Chart - A Service For Members of Our GroupDokument22 SeitenAleister Crowley Astrological Chart - A Service For Members of Our GroupMysticalgod Uidet100% (3)

- B2 First Unit 11 Test: Section 1: Vocabulary Section 2: GrammarDokument1 SeiteB2 First Unit 11 Test: Section 1: Vocabulary Section 2: GrammarNatalia KhaletskaNoch keine Bewertungen

- Post-Stroke Rehabilitation: Kazan State Medical UniversityDokument11 SeitenPost-Stroke Rehabilitation: Kazan State Medical UniversityAigulNoch keine Bewertungen

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreVon EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreBewertung: 4 von 5 Sternen4/5 (1090)

- Servicenow Rest Cheat SheetDokument3 SeitenServicenow Rest Cheat SheetHugh SmithNoch keine Bewertungen

- 9 - Digest - Mari Vs BonillaDokument2 Seiten9 - Digest - Mari Vs BonillaMarivic Escueta100% (1)

- Ocr Graphics Gcse CourseworkDokument6 SeitenOcr Graphics Gcse Courseworkzys0vemap0m3100% (2)

- Cleric Spell List D&D 5th EditionDokument9 SeitenCleric Spell List D&D 5th EditionLeandros Mavrokefalos100% (2)

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)Von EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Bewertung: 4.5 von 5 Sternen4.5/5 (121)

- Sandra Lippert Law Definitions and CodificationDokument14 SeitenSandra Lippert Law Definitions and CodificationЛукас МаноянNoch keine Bewertungen

- Baath Arab Socialist Party - Constitution (Approved in 1947)Dokument9 SeitenBaath Arab Socialist Party - Constitution (Approved in 1947)Antonio de OdilonNoch keine Bewertungen

- Her Body and Other Parties: StoriesVon EverandHer Body and Other Parties: StoriesBewertung: 4 von 5 Sternen4/5 (821)