
Matrix Algebra

Uploaded by Hsu Pei-Ken


Contents

4 MATRIX ALGEBRA

4.1 Some Definitions

4.1.1 Matrix Multiplication

4.1.2 Inverse

4.1.3 Determinant

4.1.4 Trace

4.1.5 Kronecker Product

4.1.6 Vectorization

4.2 The Summation Vector

4.2.1 Orthogonal Idempotent Matrices

4.2.2 General Orthogonal Idempotent Matrices

4.3 Rank

4.3.1 Some Results

4.4 Quadratic Forms

4.4.1 Definite Matrices

4.4.2 Matrix Comparisons

4.4.3 Orthogonality and Matrix Comparisons

4.5 Eigenvalues and Eigenvectors of Symmetric Matrices

4.5.1 Basic Properties

4.6 Matrix Differentiation

4.6.1 Taylor Expansions

4.7 Appendix: More Results on Disjoint Idempotent Matrices

Chapter 4

MATRIX ALGEBRA

4.1 Some Definitions

It is assumed that the meaning of vectors, both rows and columns, and of matrices is known. The definitions of vector and matrix addition and subtraction are also omitted. In this section we only state, without proofs, the most important properties of five matrix operations: multiplication, inverse, determinant, trace, and Kronecker product. Proofs can be found in many standard matrix algebra textbooks.

4.1.1 Matrix Multiplication

If $a$ and $b$ are two $n$-dimensional column vectors, then

$$a'b = [\,a_1\ a_2\ \cdots\ a_n\,]\begin{bmatrix} b_1 \\ b_2 \\ \vdots \\ b_n \end{bmatrix} = a_1b_1 + a_2b_2 + \cdots + a_nb_n = \sum_{i=1}^{n} a_ib_i.$$

Note that the prime (apostrophe) $'$ denotes the transpose of a vector or matrix.

Let $A$ be an $n \times k$ matrix and $B$ a $k \times m$ matrix. If $C = AB$, then the $(i,j)$th element of the $n \times m$ matrix $C$ is

$$c_{ij} = a_i'b_j = [\,a_{i1}\ a_{i2}\ \cdots\ a_{ik}\,]\begin{bmatrix} b_{1j} \\ b_{2j} \\ \vdots \\ b_{kj} \end{bmatrix} = \sum_{\ell=1}^{k} a_{i\ell}b_{\ell j},$$

where $a_i'$ is the $i$th row of $A$, $a_{i\ell}$ is the $(i,\ell)$th element of $A$, $b_j$ is the $j$th column of $B$, and $b_{\ell j}$ is the $(\ell,j)$th element of $B$. Note that the number of columns of $A$ must equal the number of rows of $B$ before $A$ can be multiplied by $B$; in that case $A$ and $B$ are said to be conformable for multiplication.

A few special cases of matrix multiplication are worth working out. If $A$ is a vector while $B$ is a matrix, then $A$ must be a row vector (i.e., $n = 1$) and the product $C$ is a row vector. If $A$ is a matrix while $B$ is a vector, then $B$ must be a column vector (i.e., $m = 1$) and the product $C$ is a column vector. If both $A$ and $B$ are vectors, then $A$ must be a row vector, $B$ must be a column vector, and the product $C$ is a scalar.
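The element-by-element definition of $C = AB$ and the conformability requirement can be sketched in pure Python as follows. This is a minimal illustration rather than an efficient implementation; matrices are stored as lists of rows, and the helper name `matmul` is our own. The last lines reproduce the special case in which a row vector times a column vector gives a $1 \times 1$ result.

```python
def matmul(A, B):
    """Return C = AB for matrices stored as lists of rows."""
    n, k = len(A), len(A[0])
    if len(B) != k:
        raise ValueError(f"not conformable: A has {k} columns but B has {len(B)} rows")
    m = len(B[0])
    # c_ij = sum over l of a_il * b_lj
    return [[sum(A[i][l] * B[l][j] for l in range(k)) for j in range(m)]
            for i in range(n)]


A = [[1, 2, 3],
     [4, 5, 6]]              # 2 x 3
B = [[1, 0],
     [0, 1],
     [2, -1]]                # 3 x 2
C = matmul(A, B)             # 2 x 2: [[7, -1], [16, -1]]

row = [[1, 2, 3]]            # 1 x 3 row vector
col = [[1], [1], [1]]        # 3 x 1 column vector
inner = matmul(row, col)     # 1 x 1 "scalar": [[6]]
```

Calling `matmul(A, A)` raises `ValueError`, since a $2 \times 3$ matrix cannot be multiplied by another $2 \times 3$ matrix.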

It is important to know how the multiplication of an $n \times k$ matrix $X$ and a $k$-dimensional column vector $b$ works. The result of such a multiplication is a column vector, which can be viewed from two different angles according to how we partition the matrix $X$ when we perform the multiplication:

1. $X$ is partitioned horizontally into $k$ columns:

$$Xb = \begin{bmatrix} x_{11} & x_{12} & \cdots & x_{1k} \\ x_{21} & x_{22} & \cdots & x_{2k} \\ \vdots & \vdots & \ddots & \vdots \\ x_{n1} & x_{n2} & \cdots & x_{nk} \end{bmatrix}\begin{bmatrix} b_1 \\ b_2 \\ \vdots \\ b_k \end{bmatrix} = [\,x_1\ x_2\ \cdots\ x_k\,]\begin{bmatrix} b_1 \\ b_2 \\ \vdots \\ b_k \end{bmatrix} = b_1x_1 + b_2x_2 + \cdots + b_kx_k,$$

where $x_1, x_2, \ldots, x_k$ are the $k$ columns of the matrix $X$. So the product $Xb$ can be viewed as a weighted sum of the $k$ columns of $X$, with the elements $b_i$ of $b$ as the weights. (Note that when a scalar such as $b_i$ multiplies a vector $x_i$, it multiplies each element of $x_i$, and the result is a vector.)

2. $X$ is partitioned vertically into $n$ rows:

$$Xb = \begin{bmatrix} \tilde{x}_1 \\ \tilde{x}_2 \\ \vdots \\ \tilde{x}_n \end{bmatrix} b = \begin{bmatrix} \tilde{x}_1 b \\ \tilde{x}_2 b \\ \vdots \\ \tilde{x}_n b \end{bmatrix} = \begin{bmatrix} b_1x_{11} + b_2x_{12} + \cdots + b_kx_{1k} \\ b_1x_{21} + b_2x_{22} + \cdots + b_kx_{2k} \\ \vdots \\ b_1x_{n1} + b_2x_{n2} + \cdots + b_kx_{nk} \end{bmatrix},$$

where $\tilde{x}_1, \tilde{x}_2, \ldots, \tilde{x}_n$ are the $n$ rows of the matrix $X$. So the product $Xb$ can also be viewed as a column in which each element is a linear combination of the elements of one row of $X$, with the elements of $b$ as weights.

The notation $x_j$ for the columns of $X$ and $\tilde{x}_i$ for the rows of $X$ is only temporary. On many occasions in the future we will instead denote the rows of $X$ by $x_i'$, so that

$$X = \begin{bmatrix} x_1' \\ x_2' \\ \vdots \\ x_n' \end{bmatrix}.$$

That is, while $x_i$ itself is a column vector, its transpose $x_i'$ is the $i$th row of the matrix $X$.

Here are two basic properties of matrix multiplication:

1. For any conformable matrices $A$ and $B$, $(AB)' = B'A'$.

2. For any conformable matrices $A$, $B$, and $C$, $(AB)C = A(BC)$.
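Both properties can be spot-checked on small integer matrices. The following is only a numerical illustration with arbitrary example values, not a proof; `matmul` and `transpose` are helper names we introduce here.

```python
def matmul(A, B):
    # zip(*B) iterates over the columns of B
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(r) for r in zip(*A)]


A = [[1, 2], [3, 4], [5, 6]]      # 3 x 2
B = [[1, -1, 0], [2, 0, 1]]       # 2 x 3
C = [[1], [0], [2]]               # 3 x 1

lhs_t = transpose(matmul(A, B))               # (AB)'
rhs_t = matmul(transpose(B), transpose(A))    # B'A'
lhs_a = matmul(matmul(A, B), C)               # (AB)C
rhs_a = matmul(A, matmul(B, C))               # A(BC)
```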

4.1.2 Inverse

Let us first define a special $n \times n$ matrix, the identity matrix, which is denoted as $I_n$, or simply $I$: its diagonal elements are all 1 and its off-diagonal elements are all 0. A nonsingular (or invertible) matrix $A$ is a square matrix that has an inverse $A^{-1}$ such that $AA^{-1} = A^{-1}A = I$. Here are some properties of the inverse:

1. For any nonsingular matrices $A$ and $B$, $(AB)^{-1} = B^{-1}A^{-1}$.

2. For any nonzero scalar $a$ and nonsingular matrix $A$, $(aA)^{-1} = \frac{1}{a}A^{-1}$.

3. For any nonsingular matrix $A$, $(A')^{-1} = (A^{-1})'$.

4. For any nonsingular matrices $A_1$ and $A_2$,

$$\begin{bmatrix} A_1 & 0 \\ 0 & A_2 \end{bmatrix}^{-1} = \begin{bmatrix} A_1^{-1} & 0 \\ 0 & A_2^{-1} \end{bmatrix}.$$

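5. For any nonsingular matrices $A_1$ and $A_2$ (with $A_1 - BA_2^{-1}C$ and $A_2 - CA_1^{-1}B$ also nonsingular),

$$\begin{bmatrix} A_1 & B \\ C & A_2 \end{bmatrix}^{-1} = \begin{bmatrix} X_1 & Y \\ Z & X_2 \end{bmatrix},$$

where

$$X_1 = A_1^{-1} + A_1^{-1}BX_2CA_1^{-1} = (A_1 - BA_2^{-1}C)^{-1}, \qquad Y = -A_1^{-1}BX_2 = -X_1BA_2^{-1},$$

$$X_2 = A_2^{-1} + A_2^{-1}CX_1BA_2^{-1} = (A_2 - CA_1^{-1}B)^{-1}, \qquad Z = -A_2^{-1}CX_1 = -X_2CA_1^{-1}.$$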

6. Given that $ad - bc \neq 0$,

$$\begin{bmatrix} a & b \\ c & d \end{bmatrix}^{-1} = \frac{1}{ad - bc}\begin{bmatrix} d & -b \\ -c & a \end{bmatrix}.$$

7. For any nonsingular matrix $A$ and two column vectors $b$ and $c$ with $c'A^{-1}b \neq 1$,

$$(A - bc')^{-1} = A^{-1} + \frac{1}{1 - c'A^{-1}b}A^{-1}bc'A^{-1}.$$

8. For any nonsingular matrices $A$ and $C$ (with $C^{-1} - B'A^{-1}B$ also nonsingular),

$$(A - BCB')^{-1} = A^{-1} + A^{-1}B(C^{-1} - B'A^{-1}B)^{-1}B'A^{-1}.$$

9. For any nonsingular matrix $A$ and column vectors $x$ and $b$, if $Ax = b$, then we can solve for $x$ as $x = A^{-1}b$.
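The $2 \times 2$ inverse formula (property 6) and the rank-one update formula (property 7) can be checked with exact rational arithmetic using Python's `fractions` module. The matrix and vectors below are arbitrary illustrative choices satisfying $c'A^{-1}b \neq 1$; the helper names are ours.

```python
from fractions import Fraction as F


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def inv2(M):
    """Property 6: the inverse of [[a, b], [c, d]] is [[d, -b], [-c, a]] / (ad - bc)."""
    (a, b), (c, d) = M
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular matrix: ad - bc = 0")
    return [[d / det, -b / det], [-c / det, a / det]]


A = [[F(2), F(1)], [F(1), F(3)]]
b = [F(1), F(2)]
c = [F(1), F(-1)]

A_inv = inv2(A)
check = matmul(A, A_inv)                       # should be the identity matrix

# Property 7, left-hand side: invert A - bc' directly
lhs = inv2([[A[i][j] - b[i] * c[j] for j in range(2)] for i in range(2)])

# Right-hand side: A^{-1} + A^{-1} b c' A^{-1} / (1 - c'A^{-1}b)
Ainv_b = [sum(A_inv[i][j] * b[j] for j in range(2)) for i in range(2)]   # A^{-1} b
c_Ainv = [sum(c[i] * A_inv[i][j] for i in range(2)) for j in range(2)]   # c' A^{-1}
denom = 1 - sum(c[i] * Ainv_b[i] for i in range(2))                     # 1 - c'A^{-1}b
rhs = [[A_inv[i][j] + Ainv_b[i] * c_Ainv[j] / denom for j in range(2)] for i in range(2)]
```

Both sides evaluate to $\begin{bmatrix} 5/7 & -2/7 \\ 1/7 & 1/7 \end{bmatrix}$ here.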

4.1.3 Determinant

The determinant $|A|$ of a square matrix $A$ is a complicated sum of products involving all the elements of $A$. In particular, for simple $2 \times 2$ and $3 \times 3$ matrices, we have

$$\begin{vmatrix} a & b \\ c & d \end{vmatrix} = ad - bc \quad\text{and}\quad \begin{vmatrix} a & b & c \\ d & e & f \\ g & h & i \end{vmatrix} = aei + bfg + cdh - ceg - afh - bdi.$$

1. For any nonsingular matrices $A$ and $B$, $|AB| = |A||B|$.

2. For any nonzero scalar $a$ and any $n \times n$ matrix $A$, $|aA| = a^n|A|$.

3. For any nonsingular matrix $A$, $|A'| = |A|$.

4. For any nonsingular matrix $A$, $|A^{-1}| = |A|^{-1}$.

5. For any nonsingular matrices $A_1$ and $A_2$, we have

$$\begin{vmatrix} A_1 & 0 \\ 0 & A_2 \end{vmatrix} = |A_1||A_2|.$$

6. Given that $A_1$ and $A_2$ are nonsingular,

$$\begin{vmatrix} A_1 & B \\ C & A_2 \end{vmatrix} = |A_2||A_1 - BA_2^{-1}C| = |A_1||A_2 - CA_1^{-1}B|.$$
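The determinant can be computed recursively by cofactor (Laplace) expansion along the first row, which reproduces the $3 \times 3$ rule above. This sketch is meant only for small matrices (its cost grows factorially with $n$); the example values are arbitrary.

```python
def det(M):
    """Determinant by cofactor (Laplace) expansion along the first row."""
    n = len(M)
    if n == 1:
        return M[0][0]
    total = 0
    for j in range(n):
        minor = [row[:j] + row[j + 1:] for row in M[1:]]   # delete row 0 and column j
        total += (-1) ** j * M[0][j] * det(minor)
    return total


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


a, b, c, d, e, f, g, h, i = 2, -1, 3, 0, 4, 1, 5, 2, -2
M = [[a, b, c], [d, e, f], [g, h, i]]
rule = a * e * i + b * f * g + c * d * h - c * e * g - a * f * h - b * d * i

A = [[1, 2], [3, 4]]        # |A| = -2
B = [[0, 1], [5, 2]]        # |B| = -5
```

Here `det(M)` and `rule` both equal $-85$, and `det(matmul(A, B))` equals $|A||B| = 10$.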

4.1.4 Trace

The trace tr(A) of a square matrix A is the sum of all diagonal elements.

1. For any $n \times k$ matrix $A$ and $k \times n$ matrix $B$, $\mathrm{tr}(AB) = \mathrm{tr}(BA)$.

2. For any scalar $a$ and any square matrix $A$, $\mathrm{tr}(aA) = a\,\mathrm{tr}(A)$.
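Property 1 holds even though $AB$ and $BA$ have different sizes. A quick check with a $2 \times 3$ and a $3 \times 2$ matrix (values chosen arbitrarily):

```python
def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def trace(M):
    return sum(M[i][i] for i in range(len(M)))


A = [[1, 2, 3], [4, 5, 6]]        # 2 x 3
B = [[1, 0], [-1, 2], [0, 1]]     # 3 x 2
tr_AB = trace(matmul(A, B))       # trace of a 2 x 2 matrix
tr_BA = trace(matmul(B, A))       # trace of a 3 x 3 matrix
```

Both traces equal 15.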


4.1.5 Kronecker Product

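For any $n \times k$ matrix $A$ and $p \times q$ matrix $B$, the Kronecker product of $A$ and $B$ is defined to be

$$A \otimes B = \begin{bmatrix} a_{11}B & a_{12}B & \cdots & a_{1k}B \\ a_{21}B & a_{22}B & \cdots & a_{2k}B \\ \vdots & \vdots & \ddots & \vdots \\ a_{n1}B & a_{n2}B & \cdots & a_{nk}B \end{bmatrix}.$$

The result is an $np \times kq$ matrix.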

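In the following, lowercase letters denote column vectors, and all matrices are assumed to have conformable dimensions (square and nonsingular wherever an inverse or a determinant is involved).

1. $ab' = a \otimes b' = b' \otimes a$.

2. $(A \otimes B)' = A' \otimes B'$.

3. $(A \otimes B)^{-1} = A^{-1} \otimes B^{-1}$.

4. For any $n \times n$ matrix $A$ and any $k \times k$ matrix $B$, we have $|A \otimes B| = |A|^k|B|^n$.

5. $\mathrm{tr}(A \otimes B) = \mathrm{tr}(A)\,\mathrm{tr}(B)$.

6. $(a \otimes B)C = a \otimes BC$.

7. $A(b' \otimes C) = b' \otimes AC$.

8. $(A \otimes b)C = AC \otimes b$.

9. $A(B \otimes c') = AB \otimes c'$.

10. $\mathrm{Diag}(A \otimes B) = \mathrm{Diag}(A) \otimes \mathrm{Diag}(B)$, where $\mathrm{Diag}(A)$ denotes a diagonal matrix with the same diagonal elements as those of $A$.

11. $a'b\,CD = (a' \otimes C)(b \otimes D)$.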
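A direct implementation of the block definition, where block $(i, j)$ of $A \otimes B$ is $a_{ij}B$, makes the $np \times kq$ size and some of the properties above easy to check. The helper names and example matrices are our own; this is an illustration, not a library routine.

```python
def kron(A, B):
    """Kronecker product: block (i, j) of the result is a_ij * B."""
    p, q = len(B), len(B[0])
    return [[A[i // p][j // q] * B[i % p][j % q] for j in range(len(A[0]) * q)]
            for i in range(len(A) * p)]


def transpose(M):
    return [list(r) for r in zip(*M)]


def trace(M):
    return sum(M[i][i] for i in range(len(M)))


A = [[1, 2], [3, 4], [0, 1]]     # n x k = 3 x 2
B = [[0, 5, 1], [6, 7, 2]]       # p x q = 2 x 3
K = kron(A, B)                   # np x kq = 6 x 6

a = [[1], [2]]                   # column vector a
b_row = [[3, 4, 5]]              # row vector b'
outer = kron(a, b_row)           # property 1: equals ab' = [[3, 4, 5], [6, 8, 10]]

S1, S2 = [[1, 2], [3, 4]], [[0, 5], [6, 7]]
tr_K = trace(kron(S1, S2))       # property 5: tr(S1) * tr(S2) = 5 * 7 = 35
```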

4.1.6 Vectorization

Given an $n \times k$ matrix $A = [\,a_1\ a_2\ \cdots\ a_k\,]$, where the $a_j$'s are the columns of $A$, the vectorization of $A$, denoted as $\mathrm{vec}(A)$, is the $nk \times 1$ column defined as:

$$\mathrm{vec}(A) = \begin{bmatrix} a_1 \\ a_2 \\ \vdots \\ a_k \end{bmatrix}.$$

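1. $\mathrm{vec}(ABC) = (C' \otimes A)\,\mathrm{vec}(B)$.

2. $\mathrm{vec}(ab') = b \otimes a$.

3. $\mathrm{vec}(a' \otimes B) = a \otimes \mathrm{vec}(B)$.

4. $\mathrm{vec}(a \otimes B_{n \times k}) = (I_k \otimes a \otimes I_n)\,\mathrm{vec}(B) = \mathrm{vec}(B \otimes a')$.

5. $\mathrm{tr}(AB) = \mathrm{vec}'(A')\,\mathrm{vec}(B) = \mathrm{vec}'(B')\,\mathrm{vec}(A)$, where $\mathrm{vec}'(A)$ denotes $[\mathrm{vec}(A)]'$.

6. $\mathrm{tr}(ABCD) = \mathrm{vec}'(A)(B \otimes D')\,\mathrm{vec}(C') = \mathrm{vec}'(A')(D' \otimes B)\,\mathrm{vec}(C)$.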

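7. $a'Bc\,DF = (c' \otimes a' \otimes D)[\mathrm{vec}(B) \otimes F]$.

8. $A_{n \times k}\,b\,c'\,D_{m \times j} = (b' \otimes I_n)\,\mathrm{vec}(A)\,\mathrm{vec}'(D')(c \otimes I_j) = (b' \otimes I_n \otimes c')[\mathrm{vec}(A) \otimes D]$.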
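Several of the vectorization identities can be verified numerically with a column-stacking `vec` helper and the Kronecker product. The sketch below is a pure-Python check of properties 1, 3, 4, 7, and 8, as written above, on small, arbitrarily chosen integer matrices; it is an illustration, not a proof, and all helper names are ours.

```python
def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(M):
    return [list(r) for r in zip(*M)]


def kron(A, B):
    """Kronecker product: block (i, j) of the result is a_ij * B."""
    p, q = len(B), len(B[0])
    return [[A[i // p][j // q] * B[i % p][j % q] for j in range(len(A[0]) * q)]
            for i in range(len(A) * p)]


def vec(M):
    """Stack the columns of M into one column, returned as an (n*k) x 1 matrix."""
    return [[x] for col in zip(*M) for x in col]


def identity(n):
    return [[int(i == j) for j in range(n)] for i in range(n)]


A = [[1, 2], [0, -1]]             # 2 x 2
B = [[1, 0, 2], [3, 1, -1]]       # 2 x 3
C = [[2, 1], [0, 1], [1, 0]]      # 3 x 2
a = [[1], [2], [3]]               # 3 x 1 column vector

# Property 1: vec(ABC) = (C' kron A) vec(B)
lhs1 = vec(matmul(matmul(A, B), C))
rhs1 = matmul(kron(transpose(C), A), vec(B))

# Property 3: vec(a' kron B) = a kron vec(B)
lhs3 = vec(kron(transpose(a), B))
rhs3 = kron(a, vec(B))

# Property 4 (B is n x k): vec(a kron B) = (I_k kron a kron I_n) vec(B) = vec(B kron a')
n, k = len(B), len(B[0])
lhs4 = vec(kron(a, B))
mid4 = matmul(kron(kron(identity(k), a), identity(n)), vec(B))
rhs4 = vec(kron(B, transpose(a)))

# Property 7: a'Bc DF = (c' kron a' kron D)[vec(B) kron F]
a7, c7, D, F = [[1], [2]], [[1], [0], [-1]], A, [[1], [1]]
s = matmul(matmul(transpose(a7), B), c7)[0][0]          # the scalar a'Bc
lhs7 = [[s * v for v in row] for row in matmul(D, F)]
rhs7 = matmul(kron(kron(transpose(c7), transpose(a7)), D), kron(vec(B), F))

# Property 8 with A (n x k = 2 x 2) and D8 (m x j = 3 x 3)
b8, c8 = [[1], [-1]], [[2], [1], [0]]
D8 = [[1, 0, 2], [0, 1, 1], [1, 1, 0]]
lhs8 = matmul(matmul(matmul(A, b8), transpose(c8)), D8)
mid8 = matmul(matmul(matmul(kron(transpose(b8), identity(2)), vec(A)),
                     transpose(vec(transpose(D8)))),
              kron(c8, identity(3)))
rhs8 = matmul(kron(kron(transpose(b8), identity(2)), transpose(c8)), kron(vec(A), D8))
```

Note that `matmul` relies on `zip`, which silently truncates mismatched dimensions; the shapes above were chosen to be conformable.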

4.2 The Summation Vector

We now consider a special $n$-dimensional column vector, the column vector of ones, which is denoted as $1_n$ and is called the summation vector because it sums the elements of another vector $x$ in a vector multiplication as follows:

$$1_n'x = [\,1\ 1\ \cdots\ 1\,]\begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{bmatrix} = x_1 + x_2 + \cdots + x_n = \sum_{i=1}^{n} x_i.$$

Note that the result is a scalar, i.e., a single number.

The summation vector can be used to construct some interesting matrices as follows:

1. Since $1_n'1_n = n$ and $(1_n'1_n)^{-1} = 1/n$, we have

$$(1_n'1_n)^{-1}1_n'x = \frac{1}{n}\sum_{i=1}^{n} x_i,$$

which is the average of the elements of the vector $x$ and is usually denoted as $\bar{x}$.

2. We can expand the scalar $\bar{x}$ to a vector of $\bar{x}$'s by simply multiplying it by $1_n$ as follows:

$$1_n(1_n'1_n)^{-1}1_n'x = \Big(\frac{1}{n}\sum_{i=1}^{n} x_i\Big)1_n = \bar{x}\,1_n = \begin{bmatrix} \bar{x} \\ \bar{x} \\ \vdots \\ \bar{x} \end{bmatrix}.$$

3. By subtracting the above vector of averages from the original vector $x$, we get the following vector of deviations:

$$x - 1_n(1_n'1_n)^{-1}1_n'x = x - \bar{x}\,1_n = \begin{bmatrix} x_1 - \bar{x} \\ x_2 - \bar{x} \\ \vdots \\ x_n - \bar{x} \end{bmatrix} = \big[I_n - 1_n(1_n'1_n)^{-1}1_n'\big]x,$$

where $I_n$ is the $n \times n$ identity matrix.

We note that $1_n(1_n'1_n)^{-1}1_n'$ and $I_n - 1_n(1_n'1_n)^{-1}1_n'$ in (2) and (3) are both $n \times n$ matrices. They possess some important properties, which will be discussed in the next subsection. Let us denote them as follows:

$$P_1 \equiv 1_n(1_n'1_n)^{-1}1_n' \quad\text{and}\quad M_1 \equiv I_n - P_1.$$

It is interesting to point out that all the elements of $P_1$ are identically $1/n$.
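The averaging and deviation computations above can be reproduced with exact fractions. In this sketch $P_1$ is built literally as $1_n(1_n'1_n)^{-1}1_n'$ for an arbitrary example vector $x$; the variable names are ours.

```python
from fractions import Fraction

x = [3, 7, 2, 8]
n = len(x)
ones = [1] * n                                   # the summation vector 1_n

sum_x = sum(o * xi for o, xi in zip(ones, x))    # 1_n' x
ones_ones = sum(o * o for o in ones)             # 1_n' 1_n = n
xbar = Fraction(sum_x, ones_ones)                # (1_n'1_n)^{-1} 1_n' x

# P1 = 1_n (1_n'1_n)^{-1} 1_n' and M1 = I_n - P1
P1 = [[Fraction(ones[i] * ones[j], ones_ones) for j in range(n)] for i in range(n)]
M1 = [[int(i == j) - P1[i][j] for j in range(n)] for i in range(n)]

P1x = [sum(P1[i][j] * x[j] for j in range(n)) for i in range(n)]   # vector of averages
M1x = [sum(M1[i][j] * x[j] for j in range(n)) for i in range(n)]   # vector of deviations
```

Here $\bar{x} = 5$, `P1x` is $[5, 5, 5, 5]$, and `M1x` is $[-2, 2, -3, 3]$.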

4.2.1 Orthogonal Idempotent Matrices

A symmetric matrix $A$ is said to be idempotent if $AA = A$. Also, two matrices $A$ and $B$ are orthogonal (or disjoint) if $AB = O$. For the two matrices $P_1$ and $M_1$ generated from the summation vector, we have the following results:

1. $P_1$ and $M_1$ are a pair of symmetric, idempotent, and orthogonal matrices:

(1) Symmetry: $P_1' = P_1$ and $M_1' = M_1$.

(2) Idempotency: $P_1P_1 = P_1$ and $M_1M_1 = M_1$.

(3) Orthogonality: $P_1M_1 = O$.

2. $P_1$ is called an averaging matrix since, as shown in the previous subsection,

$$P_1x = \bar{x}\,1_n = \begin{bmatrix} \bar{x} \\ \bar{x} \\ \vdots \\ \bar{x} \end{bmatrix}.$$

Moreover,

$$x'P_1x = x'P_1'P_1x = \bar{x}^2\,1_n'1_n = n\bar{x}^2,$$

which is a scalar.

3. $M_1$ is called a deviation matrix, since

$$M_1x = (I_n - P_1)x = x - P_1x = x - \bar{x}\,1_n = \begin{bmatrix} x_1 - \bar{x} \\ x_2 - \bar{x} \\ \vdots \\ x_n - \bar{x} \end{bmatrix}.$$

We have the following result:

$$x'M_1x = x'M_1M_1x = x'M_1'M_1x = (M_1x)'(M_1x) = (x - \bar{x}\,1_n)'(x - \bar{x}\,1_n) = \sum_{i=1}^{n}(x_i - \bar{x})^2, \tag{4.1}$$

which is the sum of squared deviations.

4. The pair of orthogonal idempotent matrices $P_1$ and $M_1$ are related to $1_n$ as follows:

$$P_1 1_n = 1_n \quad\text{and}\quad M_1 1_n = 0.$$
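The listed properties of $P_1$ and $M_1$, together with equation (4.1), can be verified for a small example with exact fractions. The data and helper functions below are illustrative assumptions, not part of the text.

```python
from fractions import Fraction


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(r) for r in zip(*A)]


n = 4
P1 = [[Fraction(1, n)] * n for _ in range(n)]                    # averaging matrix
M1 = [[int(i == j) - P1[i][j] for j in range(n)] for i in range(n)]   # deviation matrix
zero = [[0] * n for _ in range(n)]

x = [[3], [7], [2], [8]]                                  # column vector
quad = matmul(matmul(transpose(x), M1), x)[0][0]          # x' M1 x
xbar = Fraction(sum(r[0] for r in x), n)
ssd = sum((r[0] - xbar) ** 2 for r in x)                  # sum of squared deviations
ones = [[1] for _ in range(n)]                            # 1_n as an n x 1 matrix
```

Both `quad` and `ssd` equal 26, as equation (4.1) predicts.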

4.2.2 General Orthogonal Idempotent Matrices

Following the way $P_1$ and $M_1$ are generated from the vector $1_n$, we can define a pair of symmetric, orthogonal, idempotent matrices based on an arbitrary $n \times k$ matrix $X$ for which $X'X$ is invertible, as follows:

$$P \equiv X(X'X)^{-1}X' \quad\text{and}\quad M \equiv I_n - P = I_n - X(X'X)^{-1}X'.$$

An important property of these two matrices is that $PX = X$ and $MX = O$.

In the appendix, additional results on orthogonal (disjoint) idempotent matrices will be presented.
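The same checks apply to $P$ and $M$ built from a general $X$. This sketch assumes a hypothetical $4 \times 2$ matrix $X$ (a column of ones and one regressor) for which $X'X$ is invertible, and inverts $X'X$ with the $2 \times 2$ formula from Section 4.1.2; exact fractions make the equalities exact.

```python
from fractions import Fraction


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(r) for r in zip(*A)]


def inv2(M):
    """Inverse of a 2 x 2 matrix via [[d, -b], [-c, a]] / (ad - bc)."""
    (a, b), (c, d) = M
    det = Fraction(a * d - b * c)
    return [[d / det, -b / det], [-c / det, a / det]]


X = [[1, 1], [1, 2], [1, 4], [1, 5]]      # n x k = 4 x 2, X'X = [[4, 12], [12, 46]]
n = len(X)
P = matmul(matmul(X, inv2(matmul(transpose(X), X))), transpose(X))
M = [[int(i == j) - P[i][j] for j in range(n)] for i in range(n)]
```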

4.3 Rank

A set of vectors $\{x_1, x_2, \ldots, x_k\}$ is said to be linearly independent if the equality

$$\alpha_1x_1 + \alpha_2x_2 + \cdots + \alpha_kx_k = 0$$

holds only when $\alpha_1 = \alpha_2 = \cdots = \alpha_k = 0$. It is easy to see that no vector in a set of linearly independent vectors can be expressed as a linear combination of the other vectors.

Given

$$X_{n \times k} = [\,x_1\ x_2\ \cdots\ x_k\,] = \begin{bmatrix} x_{11} & x_{12} & \cdots & x_{1k} \\ x_{21} & x_{22} & \cdots & x_{2k} \\ \vdots & \vdots & \ddots & \vdots \\ x_{n1} & x_{n2} & \cdots & x_{nk} \end{bmatrix},$$

if the largest number of linearly independent columns among the $k$ columns $x_j$ of $X$ is $c$, then we say the column rank of $X$ is $c$. If all $k$ columns $x_j$ of $X$ are linearly independent, then we say $X$ has full column rank. The row rank of $X$ can be defined similarly. The row rank and the column rank of any matrix are always equal; this common value is referred to as the rank of $X$ and denoted as $\mathrm{rank}(X)$.

Given that $k < n$, i.e., $X$ is not square and has more rows than columns, as in most of our future applications, $\mathrm{rank}(X) = k$ if $X$ has full column rank.

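The implication of full column rank: If $X$ is not of full column rank, i.e., $\mathrm{rank}(X) < k$, then at least one of its columns is a linear combination of the other $k - 1$ columns. For example, suppose the first column is a linear combination of the other $k - 1$ columns:

$$x_1 = \alpha_2x_2 + \cdots + \alpha_kx_k.$$

Then, given $\alpha = [\,-1\ \alpha_2\ \cdots\ \alpha_k\,]'$, we have

$$X\alpha = [\,x_1\ x_2\ \cdots\ x_k\,]\begin{bmatrix} -1 \\ \alpha_2 \\ \vdots \\ \alpha_k \end{bmatrix} = -x_1 + \alpha_2x_2 + \cdots + \alpha_kx_k = 0.$$

Moreover, for any given vector $y$, if there is a coefficient vector $\beta$ such that

$$y = X\beta = [\,x_1\ x_2\ \cdots\ x_k\,]\begin{bmatrix} \beta_1 \\ \beta_2 \\ \vdots \\ \beta_k \end{bmatrix} = \beta_1x_1 + \beta_2x_2 + \cdots + \beta_kx_k,$$

then we immediately have another coefficient vector, $\beta + \alpha$, that satisfies the equation, because $X(\beta + \alpha) = X\beta + X\alpha = y + 0$:

$$y = X(\beta + \alpha) = [\,x_1\ x_2\ \cdots\ x_k\,]\begin{bmatrix} \beta_1 - 1 \\ \beta_2 + \alpha_2 \\ \vdots \\ \beta_k + \alpha_k \end{bmatrix} = (\beta_1 - 1)x_1 + (\beta_2 + \alpha_2)x_2 + \cdots + (\beta_k + \alpha_k)x_k.$$

This result implies that the solution $\beta$ to the system $y = X\beta$ is not unique, a situation that is generally undesirable.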
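A tiny numerical version of this argument: we construct $X$ so that $x_1 = 2x_2 - x_3$, take $\alpha = [\,-1\ 2\ -1\,]'$, and show that $\beta$ and $\beta + \alpha$ produce the same $y$. The numbers are arbitrary.

```python
def matvec(X, v):
    return [sum(x * c for x, c in zip(row, v)) for row in X]


# Columns x2, x3, and x1 = 2*x2 - x3, so X is not of full column rank
x2 = [1, 0, 1, 2]
x3 = [0, 1, 1, 1]
x1 = [2 * u - w for u, w in zip(x2, x3)]
X = [list(r) for r in zip(x1, x2, x3)]        # 4 x 3 matrix with columns x1, x2, x3

alpha = [-1, 2, -1]                           # [-1, alpha_2, alpha_3]', so X alpha = 0
beta = [1, 1, 1]
beta_plus_alpha = [bj + aj for bj, aj in zip(beta, alpha)]

y = matvec(X, beta)
```

Here `matvec(X, alpha)` is the zero vector, so `matvec(X, beta_plus_alpha)` reproduces `y` with a different coefficient vector.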


4.3.1 Some Results

1. A square matrix has full (column) rank if and only if it is nonsingular (invertible).

2. $\mathrm{rank}(X) = \mathrm{rank}(X'X) = \mathrm{rank}(XX')$. Furthermore, if the $n \times k$ matrix $X$ is of full column rank, then both the $k \times k$ matrix $X'X$ and the $n \times n$ matrix $XX'$ have rank $k$. So in such a case (with $k < n$) $X'X$ is nonsingular while $XX'$ is not.

3. Given an $n \times k$ matrix $X$, if $A$ is an $n \times n$ nonsingular matrix, then $\mathrm{rank}(AX) = \mathrm{rank}(X)$. That is, multiplying a matrix by a nonsingular matrix does not change its rank.
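The rank results can be checked with a small Gauss-Jordan rank function using exact fractions. This is a sketch for illustration only (numerical libraries typically rely on more robust methods such as the singular value decomposition); the example matrices are our own.

```python
from fractions import Fraction


def rank(M):
    """Rank by Gauss-Jordan elimination using exact rational arithmetic."""
    A = [[Fraction(v) for v in row] for row in M]
    r = 0                                     # number of pivots found so far
    for col in range(len(A[0])):
        pivot = next((i for i in range(r, len(A)) if A[i][col] != 0), None)
        if pivot is None:
            continue                          # no pivot in this column
        A[r], A[pivot] = A[pivot], A[r]
        for i in range(len(A)):
            if i != r and A[i][col] != 0:
                factor = A[i][col] / A[r][col]
                A[i] = [u - factor * w for u, w in zip(A[i], A[r])]
        r += 1
        if r == len(A):
            break
    return r


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(r) for r in zip(*A)]


X_full = [[1, 1], [1, 2], [1, 4], [1, 5]]                     # 4 x 2, full column rank
X_def = [[1, 2], [1, 2], [2, 4], [0, 0]]                      # column 2 = 2 * column 1
N = [[2, 1, 0, 0], [0, 1, 0, 0], [0, 0, 1, 3], [1, 0, 0, 1]]  # nonsingular 4 x 4

XtX = matmul(transpose(X_full), X_full)     # 2 x 2, rank 2: nonsingular
XXt = matmul(X_full, transpose(X_full))     # 4 x 4, rank 2: singular
```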

4.4 Quadratic Forms

Given an $n \times n$ square matrix $A$ and an $n$-vector $x$, the product

$$x'Ax = [\,x_1\ x_2\ \cdots\ x_n\,]\begin{bmatrix} a_{11} & a_{12} & \cdots & a_{1n} \\ a_{21} & a_{22} & \cdots & a_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ a_{n1} & a_{n2} & \cdots & a_{nn} \end{bmatrix}\begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_n \end{bmatrix} = \sum_{i=1}^{n}\sum_{j=1}^{n} a_{ij}x_ix_j$$

is called a quadratic form; it is a scalar.
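The double-sum expression of the quadratic form agrees with computing $x'(Ax)$ directly, as this short check with arbitrary values shows:

```python
def quad_form(A, x):
    """x'Ax computed as the double sum over i, j of a_ij * x_i * x_j."""
    n = len(x)
    return sum(A[i][j] * x[i] * x[j] for i in range(n) for j in range(n))


A = [[2, 1, 0], [3, 1, -1], [0, 2, 4]]
x = [1, -2, 3]
Ax = [sum(a * v for a, v in zip(row, x)) for row in A]    # the vector Ax = [0, -2, 8]
via_product = sum(xi * v for xi, v in zip(x, Ax))         # x'(Ax)
```

Both computations give 28.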

4.4.1 Definite Matrices

The values of the quadratic forms of a symmetric matrix $A$ may be restricted:

If the quadratic form $x'Ax > 0$ for any $x \neq 0$, then $A$ is said to be positive definite (p. d.).

If the quadratic form $x'Ax < 0$ for any $x \neq 0$, then $A$ is said to be negative definite (n. d.).

If the quadratic form $x'Ax \geq 0$ for any $x$, then $A$ is said to be positive semi-definite (p. s. d.).

If the quadratic form $x'Ax \leq 0$ for any $x$, then $A$ is said to be negative semi-definite (n. s. d.).

Both p. d. and n. d. matrices are nonsingular, while p. s. d. and n. s. d. matrices may be singular. Moreover, the properties of p. d. (or n. d.) matrices are similar to those of positive (or negative) scalars in the following sense:

1. The inverse of a p. d. matrix is p. d., and the inverse of an n. d. matrix is n. d.

2. $A$ is p. d. if and only if there is a nonsingular matrix $B$ such that $A = B'B$.

3. $A$ is an $n \times n$ p. s. d. matrix with rank $m$ if and only if there is an $n \times m$ matrix $B$ of full column rank such that $A = BB'$.

4. If $X$ is an $n \times k$ matrix with full column rank, then the $k \times k$ matrix $X'X$ is p. d. and the $n \times n$ matrix $XX'$ is p. s. d. But if $X$ is not of full column rank, then $X'X$ is only p. s. d.
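A practical way to test whether a symmetric matrix is p. d. is to attempt a Cholesky factorization $A = LL'$ (a particular case of the factorization $A = B'B$ in property 2), which succeeds with positive pivots exactly when $A$ is p. d. The sketch below treats pivots below a small tolerance as failure, an assumption suitable only for small, well-scaled examples, and uses it to illustrate property 4.

```python
import math


def is_positive_definite(A, tol=1e-9):
    """Attempt a Cholesky factorization A = LL' of a symmetric matrix A."""
    n = len(A)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = A[i][j] - sum(L[i][m] * L[j][m] for m in range(j))
            if i == j:
                if s <= tol:                # non-positive (or numerically zero) pivot
                    return False
                L[i][i] = math.sqrt(s)
            else:
                L[i][j] = s / L[j][j]
    return True


def gram(X):    # X'X
    k = len(X[0])
    return [[sum(r[a] * r[b] for r in X) for b in range(k)] for a in range(k)]


def outer(X):   # XX'
    return [[sum(u * v for u, v in zip(r, s)) for s in X] for r in X]


X_full = [[1, 1], [1, 2], [1, 4], [1, 5]]    # full column rank
X_def = [[1, 2], [1, 2], [2, 4], [0, 0]]     # not of full column rank
```

With these inputs, `gram(X_full)` passes the test, while `outer(X_full)` and `gram(X_def)` fail it: they are p. s. d. but singular.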

4.4.2 Matrix Comparisons

Given two square matrices $A$ and $B$ of the same dimension,

$A > B$ ($A$ is greater than $B$) if $A - B$ is p. d.;

$A \geq B$ ($A$ is no smaller than $B$) if $A - B$ is p. s. d.;

$A < B$ ($A$ is smaller than $B$) if $A - B$ is n. d.;

$A \leq B$ ($A$ is no greater than $B$) if $A - B$ is n. s. d.

The following results indicate that matrix comparisons are in many ways similar to scalar comparisons:

1. Given that $A$ and $B$ are two p. d. matrices, if $A > B$, then

(1) $A^{-1} < B^{-1}$.

(2) The diagonal elements of $A$ are greater than those of $B$, respectively, so that $\mathrm{tr}(A) > \mathrm{tr}(B)$. Also, $|A| > |B|$.

2. Given that $A$ is p. d. and $B$ is p. s. d., if $A \geq B$, then the diagonal elements of $A$ are greater than or equal to those of $B$, respectively, so that $\mathrm{tr}(A) \geq \mathrm{tr}(B)$. Also, $|A| \geq |B|$.
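For $2 \times 2$ symmetric matrices, being p. d. is equivalent to having a positive $(1,1)$ element and a positive determinant (Sylvester's criterion), which makes result 1 easy to check. The matrices below are an arbitrary example with $A > B$, and the helper names are ours.

```python
from fractions import Fraction as F


def inv2(M):
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


def det2(M):
    return M[0][0] * M[1][1] - M[0][1] * M[1][0]


def is_pd_2x2(M):
    """Sylvester's criterion for a symmetric 2 x 2 matrix: both leading minors positive."""
    return M[0][0] > 0 and det2(M) > 0


def sub(A, B):
    return [[u - v for u, v in zip(ra, rb)] for ra, rb in zip(A, B)]


A = [[F(3), F(1)], [F(1), F(2)]]
B = [[F(1), F(0)], [F(0), F(1)]]
A_minus_B = sub(A, B)                      # [[2, 1], [1, 1]], p.d., so A > B
Binv_minus_Ainv = sub(inv2(B), inv2(A))    # [[3/5, 1/5], [1/5, 2/5]], p.d., so A^{-1} < B^{-1}
```

In this example $\mathrm{tr}(A) = 5 > 2 = \mathrm{tr}(B)$ and $|A| = 5 > 1 = |B|$.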

4.4.3 Orthogonality and Matrix Comparisons

Given two matrices $X$ and $Y$ of the same size, if the matrix $X$ is orthogonal to the difference between $Y$ and $X$:

$$X'(Y - X) = O,$$

then¹

$$Y'Y - X'X = (Y - X)'(Y - X),$$

which is p. s. d. In other words, $Y'Y \geq X'X$.

This result is useful in situations where we need to compare two matrices that can be written as $Y'Y$ and $X'X$ for some matrices $Y$ and $X$. All we need to show is that $X$ is orthogonal to the difference between $Y$ and $X$.

¹ Since $X'(Y - X) = O$ implies $X'Y = X'X$ (and hence, by transposition, $Y'X = X'X$), we have $(Y - X)'(Y - X) = Y'Y - X'Y - Y'X + X'X = Y'Y - X'X - X'X + X'X = Y'Y - X'X$.

A particularly common application is to compare two sums of squares:

$$y'y = \sum_{i=1}^{n} y_i^2 \quad\text{and}\quad x'x = \sum_{i=1}^{n} x_i^2,$$

for some $n$-dimensional vectors $y$ and $x$. To prove $y'y \geq x'x$, all we have to do is show

$$x'(y - x) = \sum_{i=1}^{n} x_i(y_i - x_i) = 0.$$
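One familiar instance is $x = P_1y$, the vector of averages, for which $x'(y - x) = \bar{y}\sum_i (y_i - \bar{y}) = 0$. The numeric sketch below, with arbitrary data, confirms $y'y \geq x'x$ and that the gap equals $(y - x)'(y - x)$.

```python
y = [3, 4, 5, 8]
n = len(y)
ybar = sum(y) / n
x = [ybar] * n                                          # x = P1 y, the vector of averages

cross = sum(xi * (yi - xi) for xi, yi in zip(x, y))     # x'(y - x)
yy = sum(v * v for v in y)                              # y'y = 114
xx = sum(v * v for v in x)                              # x'x = 100
gap = sum((yi - xi) ** 2 for xi, yi in zip(x, y))       # (y - x)'(y - x) = 14
```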

4.5 Eigenvalues and Eigenvectors of Symmetric Matrices

Given a square matrix $A$, if there are a nonzero vector $h$ and a scalar $\lambda$ such that

$$Ah = \lambda h,$$

then $h$ is called an eigenvector and $\lambda$ an eigenvalue of $A$. For any symmetric $n \times n$ matrix $A$, there are $n$ linearly independent $n$-dimensional eigenvectors $h_1, h_2, \ldots, h_n$ with corresponding eigenvalues $\lambda_1, \lambda_2, \ldots, \lambda_n$, which need not be distinct.

The eigenvectors can be made orthonormal, i.e., $h_i'h_i = 1$ and $h_i'h_j = 0$ for $i \neq j$, $i, j = 1, \ldots, n$, and put into a matrix as follows:

$$H_{n \times n} = [\,h_1\ h_2\ \cdots\ h_n\,],$$

so that

$$H'H = \begin{bmatrix} h_1' \\ h_2' \\ \vdots \\ h_n' \end{bmatrix}[\,h_1\ h_2\ \cdots\ h_n\,] = \begin{bmatrix} h_1'h_1 & h_1'h_2 & \cdots & h_1'h_n \\ h_2'h_1 & h_2'h_2 & \cdots & h_2'h_n \\ \vdots & \vdots & \ddots & \vdots \\ h_n'h_1 & h_n'h_2 & \cdots & h_n'h_n \end{bmatrix} = I_n.$$

That is, $H' = H^{-1}$: the inverse of $H$ is simply its transpose.

Define the $n \times n$ diagonal matrix $\Lambda$ with the $n$ eigenvalues on the diagonal:

$$\Lambda = \begin{bmatrix} \lambda_1 & 0 & \cdots & 0 \\ 0 & \lambda_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \lambda_n \end{bmatrix};$$

then we have

$$AH = H\Lambda.$$

Consequently, we obtain the diagonalization of $A$:

$$H'AH = H'H\Lambda = \Lambda.$$
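The diagonalization $H'AH = \Lambda$ can be computed numerically for small symmetric matrices with the classical cyclic Jacobi method, which repeatedly applies plane rotations that zero out off-diagonal elements and accumulates them in $H$. This is a swapped-in textbook algorithm chosen for brevity, not a method from the text; in practice one would call a library eigensolver. The tolerance and sweep limit are illustrative assumptions.

```python
import math


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def transpose(A):
    return [list(r) for r in zip(*A)]


def jacobi_eigen(A, tol=1e-20, max_sweeps=50):
    """Cyclic Jacobi method for a real symmetric matrix.

    Returns (eigenvalues, H), where the columns of H are orthonormal eigenvectors,
    so that H'AH is (numerically) the diagonal matrix of the eigenvalues.
    """
    n = len(A)
    A = [[float(v) for v in row] for row in A]
    H = [[float(i == j) for j in range(n)] for i in range(n)]
    for _ in range(max_sweeps):
        off = sum(A[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < tol:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if A[p][q] == 0.0:
                    continue
                # angle chosen so that the rotated (p, q) element is zero
                theta = 0.5 * math.atan2(2 * A[p][q], A[q][q] - A[p][p])
                c, s = math.cos(theta), math.sin(theta)
                J = [[float(i == j) for j in range(n)] for i in range(n)]
                J[p][p], J[q][q], J[p][q], J[q][p] = c, c, s, -s
                A = matmul(matmul(transpose(J), A), J)
                H = matmul(H, J)              # accumulate rotations: H' A_original H = A
    return [A[i][i] for i in range(n)], H


A = [[4, 1, 0], [1, 3, 1], [0, 1, 2]]      # eigenvalues 3 - sqrt(3), 3, 3 + sqrt(3)
lam, H = jacobi_eigen(A)
HtH = matmul(transpose(H), H)              # numerically I_3
HtAH = matmul(matmul(transpose(H), A), H)  # numerically diag(lam)
```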


4.5.1 Basic Properties

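1. $|A| = |H\Lambda H'| = |H||\Lambda||H'| = |\Lambda||H'||H| = |\Lambda| = \prod_{i=1}^{n}\lambda_i$, since $|H'||H| = |H'H| = |I_n| = 1$.

2. $\mathrm{tr}(A) = \mathrm{tr}(H\Lambda H') = \mathrm{tr}(\Lambda H'H) = \mathrm{tr}(\Lambda) = \sum_{i=1}^{n}\lambda_i$.

3. Since $H$ is nonsingular, $\mathrm{rank}(A) = \mathrm{rank}(H\Lambda H') = \mathrm{rank}(\Lambda)$; that is, the rank of $A$ is equal to the number of nonzero eigenvalues.

4. The eigenvalues of a nonsingular matrix are all nonzero, and the eigenvalues of a p. d. (n. d.) matrix are all positive (negative).

5. If $A$ is p. d., then from the corresponding diagonal matrix $\Lambda$ we can define

$$\Lambda^{1/2} \equiv \begin{bmatrix} \sqrt{\lambda_1} & 0 & \cdots & 0 \\ 0 & \sqrt{\lambda_2} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \sqrt{\lambda_n} \end{bmatrix},$$

where $\lambda_1, \lambda_2, \ldots, \lambda_n$ are the positive eigenvalues of $A$. Moreover, we can define $A^{1/2} \equiv H\Lambda^{1/2}H'$ so that $A^{1/2}A^{1/2} = A$. Note that the eigenvalues of $A^{1/2}$ are the square roots of the eigenvalues of $A$.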

6. For a nonsingular matrix $A$, the eigenvalues of $A^{-1}$ are the reciprocals of the eigenvalues of $A$.

7. The eigenvalues of an idempotent matrix are either 0 or 1. Consequently, an idempotent matrix may be viewed as playing the role of 0 or 1 in matrix algebra.

(1) If the rank of an idempotent matrix is $k$, then $k$ of its eigenvalues are 1 and the rest are 0.

(2) The rank of an idempotent matrix is equal to its trace.

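8. The spectral decomposition of $A$ is

$$A = H\Lambda H' = [\,h_1\ h_2\ \cdots\ h_n\,]\begin{bmatrix} \lambda_1 & 0 & \cdots & 0 \\ 0 & \lambda_2 & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \lambda_n \end{bmatrix}\begin{bmatrix} h_1' \\ h_2' \\ \vdots \\ h_n' \end{bmatrix} = \sum_{i=1}^{n}\lambda_ih_ih_i'.$$

From the spectral decomposition, the quadratic form can be expressed as

$$x'Ax = x'H\Lambda H'x = y'\Lambda y = \sum_{i=1}^{n}\lambda_iy_i^2,$$

where $y = H'x$. In particular, if $A$ is an idempotent matrix with rank $k$ (and the eigenvalues are ordered so that the $k$ unit eigenvalues come first), then

$$x'Ax = \sum_{i=1}^{k} y_i^2.$$

Note that there are only $k$ terms in this summation.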

9. Let $A$ be a $p \times p$ matrix with $p$ eigenvalues $\lambda_i$ and $B$ a $q \times q$ matrix with $q$ eigenvalues $\mu_j$; then the eigenvalues of $A \otimes B$ are $\lambda_i\mu_j$, $i = 1, \ldots, p$, $j = 1, \ldots, q$.
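For a $2 \times 2$ symmetric matrix the eigenvalues have a closed form, which makes properties 1, 2, 5, and 7 easy to check. The sketch assumes the example $A = \begin{bmatrix} 5 & 2 \\ 2 & 2 \end{bmatrix}$ (eigenvalues 6 and 1) and uses $P_1$ with $n = 2$ as the idempotent example; the function name `sym_eig_2x2` is ours.

```python
import math


def sym_eig_2x2(a, b, d):
    """Eigenvalues and orthonormal eigenvectors (columns of H) of [[a, b], [b, d]]."""
    if b == 0:                                   # already diagonal
        return (a, d), [[1.0, 0.0], [0.0, 1.0]]
    mean, radius = (a + d) / 2, math.hypot((a - d) / 2, b)
    lam1, lam2 = mean + radius, mean - radius
    v = (lam1 - d, b)                            # satisfies b*v0 + (d - lam1)*v1 = 0
    norm = math.hypot(*v)
    h1 = (v[0] / norm, v[1] / norm)
    h2 = (-h1[1], h1[0])                         # orthogonal to h1: eigenvector for lam2
    return (lam1, lam2), [[h1[0], h2[0]], [h1[1], h2[1]]]


a, b, d = 5.0, 2.0, 2.0
A = [[a, b], [b, d]]                             # symmetric and p.d.
(l1, l2), H = sym_eig_2x2(a, b, d)
det_A, tr_A = a * d - b * b, a + d               # compare with l1 * l2 and l1 + l2

# A^{1/2} = H Lambda^{1/2} H'
sqrt_lam = [math.sqrt(l1), math.sqrt(l2)]
root = [[sum(H[i][k] * sqrt_lam[k] * H[j][k] for k in range(2)) for j in range(2)]
        for i in range(2)]
root_sq = [[sum(root[i][k] * root[k][j] for k in range(2)) for j in range(2)]
           for i in range(2)]

# P_1 with n = 2 is idempotent with rank 1: its eigenvalues should be 1 and 0
(p_big, p_small), _ = sym_eig_2x2(0.5, 0.5, 0.5)
```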

4.6 Matrix Differentiation

We consider the derivatives of three types of functions involving vectors and matrices. The issue is mostly about how to arrange the derivatives of scalar functions with respect to scalar variables in various vector or matrix forms.

1. The scalar-valued function of a vector: let $f(x) = f(x_1, x_2, \ldots, x_n)$ be a real-valued function of the $n$-dimensional vector $x$; then its first-order derivative is an $n$-dimensional column vector:

$$\frac{\partial f}{\partial x} = \begin{bmatrix} f_1 \\ f_2 \\ \vdots \\ f_n \end{bmatrix}, \quad\text{where } f_i = \frac{\partial f}{\partial x_i}.$$

This vector is sometimes called the gradient of $f$. The second-order derivative is an $n \times n$ matrix:

$$\frac{\partial^2 f}{\partial x\,\partial x'} = \begin{bmatrix} f_{11} & f_{12} & \cdots & f_{1n} \\ f_{21} & f_{22} & \cdots & f_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ f_{n1} & f_{n2} & \cdots & f_{nn} \end{bmatrix}, \quad\text{where } f_{ij} = \frac{\partial^2 f}{\partial x_i\,\partial x_j}.$$

This matrix is sometimes called the Hessian of $f$.

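2. The vector-valued function of a vector: given an $m$-dimensional vector of functions of the $n$-dimensional vector $x$,

$$f(x) = \begin{bmatrix} f_1(x) \\ f_2(x) \\ \vdots \\ f_m(x) \end{bmatrix},$$

its first-order derivative is an $m \times n$ matrix:

$$\frac{\partial f}{\partial x'} = \begin{bmatrix} f_{11} & f_{12} & \cdots & f_{1n} \\ f_{21} & f_{22} & \cdots & f_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ f_{m1} & f_{m2} & \cdots & f_{mn} \end{bmatrix}, \quad\text{where } f_{ij} = \frac{\partial f_i}{\partial x_j}.$$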

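3. The scalar-valued function of a matrix: let $f(A)$ be a real-valued function of the $n \times k$ matrix $A$; then its first-order derivative is an $n \times k$ matrix:

$$\frac{\partial f}{\partial A} = \begin{bmatrix} f_{11} & f_{12} & \cdots & f_{1k} \\ f_{21} & f_{22} & \cdots & f_{2k} \\ \vdots & \vdots & \ddots & \vdots \\ f_{n1} & f_{n2} & \cdots & f_{nk} \end{bmatrix}, \quad\text{where } f_{ij} = \frac{\partial f}{\partial a_{ij}},$$

and $a_{ij}$ is the $(i,j)$th element of $A$.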

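Given these definitions, we have the following useful results of matrix differentiation:

1. $\dfrac{\partial Ax}{\partial x'} = A$.

2. $\dfrac{\partial x'Ax}{\partial x} = (A + A')x$. Furthermore, if $A$ is symmetric, then $\dfrac{\partial x'Ax}{\partial x} = 2Ax$.

3. $\dfrac{\partial x'Ay}{\partial A} = xy'$, if all elements of $A$ are distinct (functionally unrelated) variables.

4. $\dfrac{\partial \ln|A|}{\partial A} = (A')^{-1}$ for $|A| > 0$, which equals $A^{-1}$ when $A$ is symmetric.

5. $\dfrac{\partial x'A^{-1}x}{\partial A} = -A^{-1}xx'A^{-1}$, if $A$ is symmetric.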
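Results 2 and 4 can be checked against central finite differences. The sketch below uses a deliberately nonsymmetric $A$ (so that $(A + A')x$ differs from $2Ax$) and an arbitrary $2 \times 2$ matrix $B$ with positive determinant for the log-determinant; the step size and tolerance are illustrative assumptions.

```python
import math


def num_grad(f, x, h=1e-6):
    """Central-difference approximation to the gradient of a scalar function f at x."""
    grad = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += h
        down[i] -= h
        grad.append((f(up) - f(down)) / (2 * h))
    return grad


# Result 2: d(x'Ax)/dx = (A + A')x, with a deliberately nonsymmetric A
A = [[2.0, 1.0], [-3.0, 4.0]]
x0 = [0.5, -1.5]


def quad(x):
    return sum(A[i][j] * x[i] * x[j] for i in range(2) for j in range(2))


analytic = [sum((A[i][j] + A[j][i]) * x0[j] for j in range(2)) for i in range(2)]
numeric = num_grad(quad, x0)

# Result 4: d ln|B| / dB = (B')^{-1}, entries flattened row by row (b11, b12, b21, b22)
B = [[3.0, 1.0], [0.5, 2.0]]            # |B| = 5.5 > 0


def logdet(entries):
    b11, b12, b21, b22 = entries
    return math.log(b11 * b22 - b12 * b21)


d = B[0][0] * B[1][1] - B[0][1] * B[1][0]
inv_Bt = [B[1][1] / d, -B[1][0] / d, -B[0][1] / d, B[0][0] / d]   # (B')^{-1}, row by row
numeric_logdet = num_grad(logdet, [B[0][0], B[0][1], B[1][0], B[1][1]])
```

Here `analytic` is $[5, -13]$, and both numerical gradients agree with their analytic counterparts to well within $10^{-6}$.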


4.6.1 Taylor Expansions

Most results in statistical analysis focus on linear functions of random variables, since these can be easily analyzed. For nonlinear functions, it has become almost a standard procedure to approximate them by linear functions (or occasionally by quadratic functions) before any analysis is conducted. Taylor expansions provide us with the formulas for such approximations.

Linear Approximation

The first-order Taylor expansion of a vector-valued function $f(x)$ around a given vector of constants $x^*$ is:

$$f(x) \approx f(x^*) + \Big[\frac{\partial f(x^*)}{\partial x'}\Big](x - x^*) = f(x^*) + \sum_{i=1}^{n}\Big[\frac{\partial f(x^*)}{\partial x_i}\Big](x_i - x_i^*).$$

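Let us consider an example of the first-order Taylor expansion of a 2-dimensional vector-valued function of three variables:

$$f(x) = f(x_1, x_2, x_3) = \begin{bmatrix} \exp(x_1 + 2x_2 - 3x_3) \\ \dfrac{\ln x_1}{x_3} \end{bmatrix}$$

around the vector of constants $x^* = [\,1\ \ 0\ \ -2\,]'$. We note that the first-order derivative of $f(x)$ with respect to $x$ is

$$\frac{\partial f(x)}{\partial x'} = \begin{bmatrix} \exp(x_1 + 2x_2 - 3x_3) & 2\exp(x_1 + 2x_2 - 3x_3) & -3\exp(x_1 + 2x_2 - 3x_3) \\ \dfrac{1}{x_1x_3} & 0 & -\dfrac{\ln x_1}{x_3^2} \end{bmatrix},$$

and when it is evaluated at $x^*$, it becomes

$$\frac{\partial f(x^*)}{\partial x'} = \begin{bmatrix} e^7 & 2e^7 & -3e^7 \\ -\frac{1}{2} & 0 & 0 \end{bmatrix}.$$

Hence,

$$f(x) = \begin{bmatrix} \exp(x_1 + 2x_2 - 3x_3) \\ \dfrac{\ln x_1}{x_3} \end{bmatrix} \approx f(x^*) + \Big[\frac{\partial f(x^*)}{\partial x'}\Big](x - x^*) = \begin{bmatrix} e^7 \\ 0 \end{bmatrix} + \begin{bmatrix} e^7 & 2e^7 & -3e^7 \\ -\frac{1}{2} & 0 & 0 \end{bmatrix}\begin{bmatrix} x_1 - 1 \\ x_2 \\ x_3 + 2 \end{bmatrix} = \begin{bmatrix} e^7(x_1 + 2x_2 - 3x_3 - 6) \\ -\frac{1}{2}x_1 + \frac{1}{2} \end{bmatrix}.$$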

So each nonlinear function in $f(x)$ is approximated by a linear function in $x$.

It should be pointed out that the quality of the approximation differs at different $x$: it depends both on how nonlinear the original functions are around $x$ and on how far $x$ is from $x^*$. In particular, the approximation is better for those $x$ that are close to $x^*$ than for those $x$ that are far away from $x^*$.
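The example can be evaluated numerically: the approximation matches $f$ exactly at $x^*$, and its error grows as $x$ moves away from $x^*$. The two comparison points below are arbitrary, and the function names are ours.

```python
import math


def f(x):
    x1, x2, x3 = x
    return [math.exp(x1 + 2 * x2 - 3 * x3), math.log(x1) / x3]


def f_linear(x):
    """First-order approximation around x* = (1, 0, -2), as derived in the text."""
    x1, x2, x3 = x
    e7 = math.exp(7)
    return [e7 * (x1 + 2 * x2 - 3 * x3 - 6), -0.5 * x1 + 0.5]


def abs_errors(x):
    """Absolute approximation error of each component of f at the point x."""
    return [abs(u - v) for u, v in zip(f(x), f_linear(x))]


x_star = [1.0, 0.0, -2.0]
near = [1.01, 0.0, -2.0]     # close to x*
far = [1.5, 0.0, -2.0]       # farther from x*
```

For both components, `abs_errors(near)` is much smaller than `abs_errors(far)`.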

Quadratic Approximation

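It is also possible, though less frequently used, to approximate a single nonlinear function by a quadratic function, which can be expressed conveniently as a quadratic form. This is the second-order Taylor expansion of a scalar-valued function $f(x)$ around a given vector of constants $x^*$:

$$f(x) \approx f(x^*) + \Big[\frac{\partial f(x^*)}{\partial x'}\Big](x - x^*) + \frac{1}{2}(x - x^*)'\Big[\frac{\partial^2 f(x^*)}{\partial x\,\partial x'}\Big](x - x^*) \tag{4.2}$$

$$= f(x^*) + \sum_{i=1}^{n}\frac{\partial f(x^*)}{\partial x_i}(x_i - x_i^*) + \frac{1}{2}\sum_{i=1}^{n}\sum_{j=1}^{n}\frac{\partial^2 f(x^*)}{\partial x_i\,\partial x_j}(x_i - x_i^*)(x_j - x_j^*). \tag{4.3}$$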

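Let us consider an example of the second-order Taylor expansion of a scalar-valued function

$$f(x) = f(x_1, x_2) = \frac{\sin(x_1)}{\exp(x_2)}$$

around the vector of constants $x^* = [\,\pi/6\ \ 0\,]'$. We note that the first-order derivative and the second-order derivative of $f(x)$ with respect to $x$, and their values when evaluated at $x^*$, are

$$\frac{\partial f(x)}{\partial x} = \begin{bmatrix} \dfrac{\cos(x_1)}{\exp(x_2)} \\ -\dfrac{\sin(x_1)}{\exp(x_2)} \end{bmatrix} \quad\text{and}\quad \frac{\partial f(x^*)}{\partial x} = \begin{bmatrix} \frac{\sqrt{3}}{2} \\ -\frac{1}{2} \end{bmatrix},$$

and

$$\frac{\partial^2 f(x)}{\partial x\,\partial x'} = \begin{bmatrix} -\dfrac{\sin(x_1)}{\exp(x_2)} & -\dfrac{\cos(x_1)}{\exp(x_2)} \\ -\dfrac{\cos(x_1)}{\exp(x_2)} & \dfrac{\sin(x_1)}{\exp(x_2)} \end{bmatrix} \quad\text{and}\quad \frac{\partial^2 f(x^*)}{\partial x\,\partial x'} = \begin{bmatrix} -\frac{1}{2} & -\frac{\sqrt{3}}{2} \\ -\frac{\sqrt{3}}{2} & \frac{1}{2} \end{bmatrix}.$$

Hence,

$$f(x) = \frac{\sin(x_1)}{\exp(x_2)} \approx f(x^*) + \Big[\frac{\partial f(x^*)}{\partial x'}\Big](x - x^*) + \frac{1}{2}(x - x^*)'\Big[\frac{\partial^2 f(x^*)}{\partial x\,\partial x'}\Big](x - x^*)$$

$$= \frac{1}{2} + \Big[\,\frac{\sqrt{3}}{2}\ \ -\frac{1}{2}\,\Big]\begin{bmatrix} x_1 - \frac{\pi}{6} \\ x_2 \end{bmatrix} + \frac{1}{2}\Big[\,x_1 - \frac{\pi}{6}\ \ x_2\,\Big]\begin{bmatrix} -\frac{1}{2} & -\frac{\sqrt{3}}{2} \\ -\frac{\sqrt{3}}{2} & \frac{1}{2} \end{bmatrix}\begin{bmatrix} x_1 - \frac{\pi}{6} \\ x_2 \end{bmatrix}$$

$$= \frac{1}{2} + \frac{\sqrt{3}}{2}\Big(x_1 - \frac{\pi}{6}\Big) - \frac{1}{2}x_2 - \frac{1}{4}\Big(x_1 - \frac{\pi}{6}\Big)^2 - \frac{\sqrt{3}}{2}\Big(x_1 - \frac{\pi}{6}\Big)x_2 + \frac{1}{4}x_2^2.$$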

So the nonlinear function of x is now approximated by a quadratic function in x.
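Numerically, the quadratic approximation should beat the linear one near $x^*$. The sketch compares both at an arbitrary nearby point $x = (\pi/6 + 0.1,\ 0.1)$; the function names are ours.

```python
import math


def f(x1, x2):
    return math.sin(x1) / math.exp(x2)


def f_linear(x1, x2):
    """First-order terms of the expansion around x* = (pi/6, 0)."""
    d1 = x1 - math.pi / 6
    return 0.5 + math.sqrt(3) / 2 * d1 - 0.5 * x2


def f_quadratic(x1, x2):
    """Second-order expansion around x* = (pi/6, 0), as derived in the text."""
    d1 = x1 - math.pi / 6
    return (f_linear(x1, x2)
            - 0.25 * d1 ** 2 - math.sqrt(3) / 2 * d1 * x2 + 0.25 * x2 ** 2)


point = (math.pi / 6 + 0.1, 0.1)
exact = f(*point)
err_lin = abs(f_linear(*point) - exact)       # roughly 8e-3
err_quad = abs(f_quadratic(*point) - exact)   # roughly 5e-4
```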

4.7 Appendix: More Results on Disjoint Idempotent Matrices

1. Given $n \times n$ symmetric matrices $P_1, P_2, \ldots, P_k$ such that

$$\sum_{j=1}^{k} P_j = I_n,$$

if $P_1, P_2, \ldots, P_{k-1}$ are idempotent and $P_k$ is p. s. d., then

(1) $P_k$ is also idempotent;

(2) $\mathrm{rank}(P_k) = n - \sum_{j=1}^{k-1}\mathrm{rank}(P_j)$;

(3) $P_1, P_2, \ldots, P_k$ are all disjoint (orthogonal to each other).

Application: Suppose $X = [\,X_1\ X_2\,]$, where the $n \times k$ matrix $X$ has full column rank and $X_1$ has $k_1$ columns, and let us define

$$M = I - X(X'X)^{-1}X' \equiv I - P, \qquad P_1 = X_1(X_1'X_1)^{-1}X_1', \qquad P^* = P - P_1,$$

such that $I = M + P_1 + P^*$; then $P^*$ is idempotent (since $M$ and $P_1$ are both idempotent). Moreover, we have

$$\mathrm{rank}(P^*) = n - \mathrm{rank}(M) - \mathrm{rank}(P_1) = n - (n - k) - k_1 = k - k_1.$$

Finally, $M$, $P_1$, and $P^*$ are all disjoint.

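2. If $P_1, P_2, \ldots, P_k$ are disjoint idempotent matrices, then for any $k + 1$ scalars $\alpha, \beta_1, \ldots, \beta_k$ with $\alpha > 0$ and $\alpha + \beta_j > 0$ for all $j$, the matrix

$$A = \alpha I_n + \sum_{j=1}^{k}\beta_jP_j$$

is p. d. and

$$A^{-1} = \gamma I_n + \sum_{j=1}^{k}\delta_jP_j,$$

where

$$\gamma = \frac{1}{\alpha} \quad\text{and}\quad \delta_j = -\frac{\beta_j}{\alpha(\alpha + \beta_j)},$$

for all $j$.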
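The inverse formula in result 2 can be checked with the disjoint idempotent pair $P_1$ and $M_1$ from Section 4.2, using exact fractions. The scalars $\alpha = 2$, $\beta_1 = 3$, $\beta_2 = -1$ are arbitrary choices satisfying $\alpha > 0$ and $\alpha + \beta_j > 0$; the helper `combine` is a name we introduce here.

```python
from fractions import Fraction as F


def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


n = 3
I = [[F(int(i == j)) for j in range(n)] for i in range(n)]
P1 = [[F(1, n)] * n for _ in range(n)]                              # averaging matrix
M1 = [[I[i][j] - P1[i][j] for j in range(n)] for i in range(n)]     # deviation matrix
Ps = [P1, M1]                                                       # disjoint idempotent pair


def combine(scalar, coefs, mats):
    """Return scalar * I_n + sum_j coefs[j] * mats[j]."""
    return [[scalar * I[i][j] + sum(c * m[i][j] for c, m in zip(coefs, mats))
             for j in range(n)] for i in range(n)]


alpha, beta = F(2), [F(3), F(-1)]
A = combine(alpha, beta, Ps)

gamma = 1 / alpha
delta = [-bj / (alpha * (alpha + bj)) for bj in beta]               # [-3/10, 1/2]
A_inv = combine(gamma, delta, Ps)
```

Multiplying `A` by `A_inv` returns the identity matrix exactly.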

Das könnte Ihnen auch gefallen

- Shoe Dog: A Memoir by the Creator of NikeVon EverandShoe Dog: A Memoir by the Creator of NikeBewertung: 4.5 von 5 Sternen4.5/5 (537)

- Iot Based Garbage and Street Light Monitoring SystemDokument3 SeitenIot Based Garbage and Street Light Monitoring SystemHarini VenkatNoch keine Bewertungen

- The Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeVon EverandThe Subtle Art of Not Giving a F*ck: A Counterintuitive Approach to Living a Good LifeBewertung: 4 von 5 Sternen4/5 (5794)

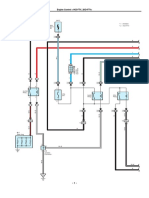

- Diagrama Hilux 1KD-2KD PDFDokument11 SeitenDiagrama Hilux 1KD-2KD PDFJeni100% (1)

- Hidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceVon EverandHidden Figures: The American Dream and the Untold Story of the Black Women Mathematicians Who Helped Win the Space RaceBewertung: 4 von 5 Sternen4/5 (895)

- Michael Ungar - Working With Children and Youth With Complex Needs - 20 Skills To Build Resilience-Routledge (2014)Dokument222 SeitenMichael Ungar - Working With Children and Youth With Complex Needs - 20 Skills To Build Resilience-Routledge (2014)Sølve StoknesNoch keine Bewertungen

- The Yellow House: A Memoir (2019 National Book Award Winner)Von EverandThe Yellow House: A Memoir (2019 National Book Award Winner)Bewertung: 4 von 5 Sternen4/5 (98)

- Gol GumbazDokument6 SeitenGol Gumbazmnv_iitbNoch keine Bewertungen

- Grit: The Power of Passion and PerseveranceVon EverandGrit: The Power of Passion and PerseveranceBewertung: 4 von 5 Sternen4/5 (588)

- New Cisco Certification Path (From Feb2020) PDFDokument1 SeiteNew Cisco Certification Path (From Feb2020) PDFkingNoch keine Bewertungen

- The Little Book of Hygge: Danish Secrets to Happy LivingVon EverandThe Little Book of Hygge: Danish Secrets to Happy LivingBewertung: 3.5 von 5 Sternen3.5/5 (400)

- Nielsen Report - The New Trend Among Indonesia's NetizensDokument20 SeitenNielsen Report - The New Trend Among Indonesia's NetizensMarsha ImaniaraNoch keine Bewertungen

- The Emperor of All Maladies: A Biography of CancerVon EverandThe Emperor of All Maladies: A Biography of CancerBewertung: 4.5 von 5 Sternen4.5/5 (271)

- Synthesis Essay Final DraftDokument5 SeitenSynthesis Essay Final Draftapi-283802944Noch keine Bewertungen

- Never Split the Difference: Negotiating As If Your Life Depended On ItVon EverandNever Split the Difference: Negotiating As If Your Life Depended On ItBewertung: 4.5 von 5 Sternen4.5/5 (838)

- Age and Gender Detection Using Deep Learning: HYDERABAD - 501 510Dokument11 SeitenAge and Gender Detection Using Deep Learning: HYDERABAD - 501 510ShyamkumarBannuNoch keine Bewertungen

- The World Is Flat 3.0: A Brief History of the Twenty-first CenturyVon EverandThe World Is Flat 3.0: A Brief History of the Twenty-first CenturyBewertung: 3.5 von 5 Sternen3.5/5 (2259)

- Historical Roots of The "Whitening" of BrazilDokument23 SeitenHistorical Roots of The "Whitening" of BrazilFernandoMascarenhasNoch keine Bewertungen

- On Fire: The (Burning) Case for a Green New DealVon EverandOn Fire: The (Burning) Case for a Green New DealBewertung: 4 von 5 Sternen4/5 (74)

- Leadership Nursing and Patient SafetyDokument172 SeitenLeadership Nursing and Patient SafetyRolena Johnette B. PiñeroNoch keine Bewertungen

- Elon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureVon EverandElon Musk: Tesla, SpaceX, and the Quest for a Fantastic FutureBewertung: 4.5 von 5 Sternen4.5/5 (474)

- Highway Journal Feb 2023Dokument52 SeitenHighway Journal Feb 2023ShaileshRastogiNoch keine Bewertungen

- A Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryVon EverandA Heartbreaking Work Of Staggering Genius: A Memoir Based on a True StoryBewertung: 3.5 von 5 Sternen3.5/5 (231)

- " Suratgarh Super Thermal Power Station": Submitted ToDokument58 Seiten" Suratgarh Super Thermal Power Station": Submitted ToSahuManishNoch keine Bewertungen

- Team of Rivals: The Political Genius of Abraham LincolnVon EverandTeam of Rivals: The Political Genius of Abraham LincolnBewertung: 4.5 von 5 Sternen4.5/5 (234)

- (Database Management Systems) : Biag, Marvin, B. BSIT - 202 September 6 2019Dokument7 Seiten(Database Management Systems) : Biag, Marvin, B. BSIT - 202 September 6 2019Marcos JeremyNoch keine Bewertungen

- Devil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaVon EverandDevil in the Grove: Thurgood Marshall, the Groveland Boys, and the Dawn of a New AmericaBewertung: 4.5 von 5 Sternen4.5/5 (266)

- Read The Dialogue Below and Answer The Following QuestionDokument5 SeitenRead The Dialogue Below and Answer The Following QuestionDavid GainesNoch keine Bewertungen

- The Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersVon EverandThe Hard Thing About Hard Things: Building a Business When There Are No Easy AnswersBewertung: 4.5 von 5 Sternen4.5/5 (345)

- Citing Correctly and Avoiding Plagiarism: MLA Format, 7th EditionDokument4 SeitenCiting Correctly and Avoiding Plagiarism: MLA Format, 7th EditionDanish muinNoch keine Bewertungen

- 10 - SHM, Springs, DampingDokument4 Seiten10 - SHM, Springs, DampingBradley NartowtNoch keine Bewertungen

- The Unwinding: An Inner History of the New AmericaVon EverandThe Unwinding: An Inner History of the New AmericaBewertung: 4 von 5 Sternen4/5 (45)

- Technical Textile and SustainabilityDokument5 SeitenTechnical Textile and SustainabilityNaimul HasanNoch keine Bewertungen

- Day 2 - Evident's Official ComplaintDokument18 SeitenDay 2 - Evident's Official ComplaintChronicle Herald100% (1)

- Assignment 1 - Vertical Alignment - SolutionsDokument6 SeitenAssignment 1 - Vertical Alignment - SolutionsArmando Ramirez100% (1)

- CV TitchievDokument3 SeitenCV TitchievIna FarcosNoch keine Bewertungen

- WT&D (Optimization of WDS) PDFDokument89 SeitenWT&D (Optimization of WDS) PDFAbirham TilahunNoch keine Bewertungen

- Datasheet 6A8 FusívelDokument3 SeitenDatasheet 6A8 FusívelMluz LuzNoch keine Bewertungen

- Ce Project 1Dokument7 SeitenCe Project 1emmaNoch keine Bewertungen

- Directorate of Technical Education, Maharashtra State, MumbaiDokument57 SeitenDirectorate of Technical Education, Maharashtra State, MumbaiShubham DahatondeNoch keine Bewertungen

- The Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreVon EverandThe Gifts of Imperfection: Let Go of Who You Think You're Supposed to Be and Embrace Who You AreBewertung: 4 von 5 Sternen4/5 (1090)

- ESA Mars Research Abstracts Part 2Dokument85 SeitenESA Mars Research Abstracts Part 2daver2tarletonNoch keine Bewertungen

- Feb-May SBI StatementDokument2 SeitenFeb-May SBI StatementAshutosh PandeyNoch keine Bewertungen

- Nptel Online-Iit KanpurDokument1 SeiteNptel Online-Iit KanpurRihlesh ParlNoch keine Bewertungen

- Topfast BRAND Catalogue Ingco 2021 MayDokument116 SeitenTopfast BRAND Catalogue Ingco 2021 MayMoh AwadNoch keine Bewertungen

- SUNGLAO - TM PortfolioDokument60 SeitenSUNGLAO - TM PortfolioGIZELLE SUNGLAONoch keine Bewertungen

- The Sympathizer: A Novel (Pulitzer Prize for Fiction)Von EverandThe Sympathizer: A Novel (Pulitzer Prize for Fiction)Bewertung: 4.5 von 5 Sternen4.5/5 (121)

- Parts List 38 254 13 95: Helical-Bevel Gear Unit KA47, KH47, KV47, KT47, KA47B, KH47B, KV47BDokument4 SeitenParts List 38 254 13 95: Helical-Bevel Gear Unit KA47, KH47, KV47, KT47, KA47B, KH47B, KV47BEdmundo JavierNoch keine Bewertungen

- Her Body and Other Parties: StoriesVon EverandHer Body and Other Parties: StoriesBewertung: 4 von 5 Sternen4/5 (821)