Beruflich Dokumente

Kultur Dokumente

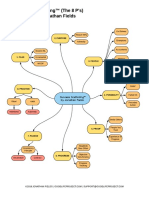

Naïve Bayes Classifier

Hochgeladen von

Zohair AhmedCopyright

Verfügbare Formate

Dieses Dokument teilen

Dokument teilen oder einbetten

Stufen Sie dieses Dokument als nützlich ein?

Sind diese Inhalte unangemessen?

Dieses Dokument meldenCopyright:

Verfügbare Formate

Naïve Bayes Classifier

Hochgeladen von

Zohair AhmedCopyright:

Verfügbare Formate

COMP24111 Machine Learning

Naïve Bayes Classifier

Ke Chen


Outline

Background

Probability Basics

Probabilistic Classification

Naïve Bayes

Example: Play Tennis

Relevant Issues

Conclusions


Background

There are three methods to establish a classifier

a) Model a classification rule directly

Examples: k-NN, decision trees, perceptron, SVM

b) Model the probability of class memberships given input data

Example: perceptron with the cross-entropy cost

c) Make a probabilistic model of data within each class

Examples: naïve Bayes, model-based classifiers

a) and b) are examples of discriminative classification

c) is an example of generative classification

b) and c) are both examples of probabilistic classification


Probability Basics

Prior, conditional and joint probability for random variables

Prior probability: $P(X)$

Conditional probability: $P(X_1 \mid X_2)$, $P(X_2 \mid X_1)$

Joint probability: $\mathbf{X} = (X_1, X_2)$, $P(\mathbf{X}) = P(X_1, X_2)$

Relationship: $P(X_1, X_2) = P(X_2 \mid X_1)\,P(X_1) = P(X_1 \mid X_2)\,P(X_2)$

Independence: $P(X_2 \mid X_1) = P(X_2)$, $P(X_1 \mid X_2) = P(X_1)$, $P(X_1, X_2) = P(X_1)\,P(X_2)$

Bayesian Rule

$P(C \mid \mathbf{X}) = \dfrac{P(\mathbf{X} \mid C)\,P(C)}{P(\mathbf{X})}$, i.e. $\text{Posterior} = \dfrac{\text{Likelihood} \times \text{Prior}}{\text{Evidence}}$
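As a small worked instance of the Bayesian rule, the sketch below plugs in figures taken from the Play Tennis tables later in these slides: the priors P(Play=Yes) = 9/14 and P(Play=No) = 5/14, and the likelihoods P(Outlook=Sunny|Play). The variable names are illustrative only.

```python
from fractions import Fraction as F

# Prior P(C) and likelihood P(Outlook=Sunny | C), copied from the Play Tennis tables.
prior = {"Yes": F(9, 14), "No": F(5, 14)}
likelihood = {"Yes": F(2, 9), "No": F(3, 5)}

# Evidence P(Outlook=Sunny) via the relationship P(X, C) = P(X | C) P(C), summed over C.
evidence = sum(likelihood[c] * prior[c] for c in prior)

# Posterior = Likelihood * Prior / Evidence
posterior = {c: likelihood[c] * prior[c] / evidence for c in prior}

for c in posterior:
    print(f"P(Play={c} | Outlook=Sunny) = {posterior[c]}")
```

Exact fractions are used so the posteriors can be checked by hand; they sum to one because the evidence is the normalising constant.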


Probability Basics

Quiz: We have two six-sided dice. When they are rolled, the following events may occur: (A) die 1 lands on side 3, (B) die 2 lands on side 1, and (C) the two dice sum to eight. Answer the following questions:

1) $P(A) = ?$

2) $P(B) = ?$

3) $P(C) = ?$

4) $P(A \mid B) = ?$

5) $P(C \mid A) = ?$

6) $P(A, B) = ?$

7) $P(A, C) = ?$

8) Is $P(A, C)$ equal to $P(A) \cdot P(C)$?
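One way to check answers to the quiz is to enumerate all 36 equally likely outcomes and count. The following is a minimal sketch under that fair-dice assumption; the helper names `prob`, `joint` and `cond` are made up for this illustration.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely (die 1, die 2) outcomes of two fair six-sided dice.
outcomes = list(product(range(1, 7), repeat=2))

def A(o):
    return o[0] == 3          # die 1 lands on side 3

def B(o):
    return o[1] == 1          # die 2 lands on side 1

def C(o):
    return o[0] + o[1] == 8   # the two dice sum to eight

def prob(event):
    """P(event) as the fraction of outcomes where the event holds."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

def joint(e1, e2):
    """P(e1, e2): both events hold."""
    return prob(lambda o: e1(o) and e2(o))

def cond(e1, e2):
    """P(e1 | e2) = P(e1, e2) / P(e2)."""
    return joint(e1, e2) / prob(e2)

print("P(A) =", prob(A), " P(B) =", prob(B), " P(C) =", prob(C))
print("P(A|B) =", cond(A, B), " P(C|A) =", cond(C, A))
print("P(A,B) =", joint(A, B), " P(A,C) =", joint(A, C))
print("P(A,C) == P(A)P(C)?", joint(A, C) == prob(A) * prob(C))
```

Comparing the joint probability with the product of the marginals is exactly the independence test from the previous slide.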


Probabilistic Classification

Establishing a probabilistic model for classification

Discriminative model

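$P(C \mid \mathbf{X})$, where $C = c_1, \dots, c_L$ and $\mathbf{X} = (X_1, \dots, X_n)$

[Figure: a discriminative probabilistic classifier takes the input $\mathbf{x} = (x_1, x_2, \dots, x_n)$ and outputs the posteriors $P(c_1 \mid \mathbf{x}), P(c_2 \mid \mathbf{x}), \dots, P(c_L \mid \mathbf{x})$.]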


Probabilistic Classification

Establishing a probabilistic model for classification (cont.)

Generative model

$P(\mathbf{X} \mid C)$, where $C = c_1, \dots, c_L$ and $\mathbf{X} = (X_1, \dots, X_n)$

[Figure: one generative probabilistic model per class (Class 1, Class 2, ..., Class L); the model for class $c_i$ takes the input $\mathbf{x} = (x_1, x_2, \dots, x_n)$ and outputs the likelihood $P(\mathbf{x} \mid c_i)$.]


Probabilistic Classification

MAP classification rule

MAP: Maximum A Posteriori

Assign $\mathbf{x}$ to $c^*$ if $P(C = c^* \mid \mathbf{X} = \mathbf{x}) > P(C = c \mid \mathbf{X} = \mathbf{x})$ for all $c \neq c^*$, $c = c_1, \dots, c_L$

Generative classification with the MAP rule

Apply the Bayesian rule to convert the class-conditional likelihoods into posterior probabilities:

$P(C = c_i \mid \mathbf{X} = \mathbf{x}) = \dfrac{P(\mathbf{X} = \mathbf{x} \mid C = c_i)\,P(C = c_i)}{P(\mathbf{X} = \mathbf{x})} \propto P(\mathbf{X} = \mathbf{x} \mid C = c_i)\,P(C = c_i)$, for $i = 1, 2, \dots, L$

Then apply the MAP rule
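The MAP rule with a generative model can be sketched in a few lines. The function name `map_classify` and the three-class numbers are hypothetical, chosen only to show that the evidence $P(\mathbf{X} = \mathbf{x})$ rescales every score equally and so does not change which class wins.

```python
def map_classify(likelihoods, priors):
    """Return the MAP label and normalised posteriors.

    likelihoods[c] stands for P(X=x | C=c) and priors[c] for P(C=c).
    """
    # Unnormalised posterior: likelihood times prior.
    scores = {c: likelihoods[c] * priors[c] for c in priors}
    # Evidence P(X=x) is the sum of the unnormalised scores over all classes.
    evidence = sum(scores.values())
    posteriors = {c: s / evidence for c, s in scores.items()}
    # The argmax is the same for scores and posteriors.
    return max(scores, key=scores.get), posteriors

# Hypothetical numbers for three classes.
priors = {"c1": 0.5, "c2": 0.3, "c3": 0.2}
likelihoods = {"c1": 0.1, "c2": 0.4, "c3": 0.3}

label, posteriors = map_classify(likelihoods, priors)
print(label, posteriors)
```

Here the class with the largest prior is not the one selected, because its likelihood for this input is small.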


Naïve Bayes

Bayes classification

$P(C \mid \mathbf{X}) \propto P(\mathbf{X} \mid C)\,P(C) = P(X_1, \dots, X_n \mid C)\,P(C)$

Difficulty: learning the joint probability $P(X_1, \dots, X_n \mid C)$

Naïve Bayes classification

Assumption that all input attributes are conditionally independent given the class!

$P(X_1, X_2, \dots, X_n \mid C) = P(X_1 \mid X_2, \dots, X_n, C)\,P(X_2, \dots, X_n \mid C)$

$\qquad = P(X_1 \mid C)\,P(X_2, \dots, X_n \mid C)$

$\qquad = P(X_1 \mid C)\,P(X_2 \mid C) \cdots P(X_n \mid C)$

MAP classification rule: for $\mathbf{x} = (x_1, x_2, \dots, x_n)$,

$[P(x_1 \mid c^*) \cdots P(x_n \mid c^*)]\,P(c^*) > [P(x_1 \mid c) \cdots P(x_n \mid c)]\,P(c)$, for all $c \neq c^*$, $c = c_1, \dots, c_L$
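To make the difficulty with the joint probability concrete, the sketch below counts free parameters per class for a full joint table versus the naïve Bayes factorisation. The attribute sizes (3, 3, 2, 2) match the Play Tennis attributes used later in these slides; the function names are illustrative.

```python
from math import prod

def joint_parameters(values_per_attribute):
    """Free parameters per class for a full table P(X1, ..., Xn | C)."""
    # One probability per combination of values, minus one for the sum-to-one constraint.
    return prod(values_per_attribute) - 1

def naive_parameters(values_per_attribute):
    """Free parameters per class when P(X1, ..., Xn | C) = P(X1 | C) ... P(Xn | C)."""
    # Each attribute needs its own small table with (number of values - 1) free entries.
    return sum(v - 1 for v in values_per_attribute)

# Outlook (3 values), Temperature (3), Humidity (2), Wind (2).
sizes = [3, 3, 2, 2]
print("joint:", joint_parameters(sizes), "naive:", naive_parameters(sizes))
```

The joint count grows multiplicatively with the number of attributes while the naïve count grows additively, which is why the factorised model can be estimated from few examples.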


Naïve Bayes

Naïve Bayes Algorithm (for discrete input attributes)

Learning Phase: Given a training set S,

For each target value $c_i$ ($c_i = c_1, \dots, c_L$):

$\hat{P}(C = c_i) \leftarrow$ estimate $P(C = c_i)$ with examples in S;

For every attribute value $x_{jk}$ of each attribute $X_j$ ($j = 1, \dots, n$; $k = 1, \dots, N_j$):

$\hat{P}(X_j = x_{jk} \mid C = c_i) \leftarrow$ estimate $P(X_j = x_{jk} \mid C = c_i)$ with examples in S;

Output: conditional probability tables; for $X_j$, $N_j \times L$ elements

Test Phase: Given an unknown instance $\mathbf{X}' = (a'_1, \dots, a'_n)$,

Look up tables to assign the label $c^*$ to $\mathbf{X}'$ if

$[\hat{P}(a'_1 \mid c^*) \cdots \hat{P}(a'_n \mid c^*)]\,\hat{P}(c^*) > [\hat{P}(a'_1 \mid c) \cdots \hat{P}(a'_n \mid c)]\,\hat{P}(c)$, for all $c \neq c^*$, $c = c_1, \dots, c_L$
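The two phases above can be sketched as follows. This is a minimal illustration that estimates every probability by relative frequency, with no smoothing; the functions `train` and `predict` and the five-example fruit dataset are hypothetical and not part of the original slides.

```python
from collections import Counter

def train(examples):
    """Learning phase. examples: list of (attribute_tuple, label) pairs."""
    class_counts = Counter(label for _, label in examples)
    priors = {c: n / len(examples) for c, n in class_counts.items()}
    # counts[(j, value, c)] = number of class-c examples whose attribute j equals value.
    counts = Counter()
    for attrs, label in examples:
        for j, value in enumerate(attrs):
            counts[(j, value, label)] += 1
    # Conditional probability tables, estimated by relative frequency within each class.
    cond = {key: n / class_counts[key[2]] for key, n in counts.items()}
    return priors, cond

def predict(x, priors, cond):
    """Test phase: look up the tables and apply the MAP rule."""
    scores = {}
    for c, prior in priors.items():
        score = prior
        for j, value in enumerate(x):
            # A (value, class) pair never seen in training has relative frequency 0.
            score *= cond.get((j, value, c), 0.0)
        scores[c] = score
    return max(scores, key=scores.get), scores

# Hypothetical toy data: (colour, shape) -> fruit.
toy = [
    (("red", "round"), "apple"),
    (("red", "round"), "apple"),
    (("green", "round"), "apple"),
    (("yellow", "long"), "banana"),
    (("green", "long"), "banana"),
]
priors, cond = train(toy)
label1, scores1 = predict(("green", "round"), priors, cond)
label2, scores2 = predict(("green", "long"), priors, cond)
print(label1, scores1)
print(label2, scores2)
```

Storing the tables in one dictionary keyed by (attribute index, value, class) keeps the lookup in the test phase to a single `get` call per attribute.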


Example

Example: Play Tennis


Example

Learning Phase

Outlook        Play=Yes   Play=No
Sunny          2/9        3/5
Overcast       4/9        0/5
Rain           3/9        2/5

Temperature    Play=Yes   Play=No
Hot            2/9        2/5
Mild           4/9        2/5
Cool           3/9        1/5

Humidity       Play=Yes   Play=No
High           3/9        4/5
Normal         6/9        1/5

Wind           Play=Yes   Play=No
Strong         3/9        3/5
Weak           6/9        2/5

P(Play=Yes) = 9/14

P(Play=No) = 5/14


Example

Test Phase

Given a new instance,

x = (Outlook=Sunny, Temperature=Cool, Humidity=High, Wind=Strong)

Look up tables

P(Outlook=Sunny|Play=Yes) = 2/9

P(Temperature=Cool|Play=Yes) = 3/9

P(Humidity=High|Play=Yes) = 3/9

P(Wind=Strong|Play=Yes) = 3/9

P(Play=Yes) = 9/14

P(Outlook=Sunny|Play=No) = 3/5

P(Temperature=Cool|Play=No) = 1/5

P(Humidity=High|Play=No) = 4/5

P(Wind=Strong|Play=No) = 3/5

P(Play=No) = 5/14

MAP rule

P(Yes|x) ∝ [P(Sunny|Yes) P(Cool|Yes) P(High|Yes) P(Strong|Yes)] P(Play=Yes) = 0.0053

P(No|x) ∝ [P(Sunny|No) P(Cool|No) P(High|No) P(Strong|No)] P(Play=No) = 0.0206

Since P(Yes|x) < P(No|x), we label x as No.
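The arithmetic of this test phase can be reproduced directly from the lookup values listed above. The sketch below uses exact fractions; the dictionary layout and key strings are illustrative choices, not part of the original slides.

```python
from fractions import Fraction as F

# Priors and conditional probabilities copied from the Play Tennis tables.
prior = {"Yes": F(9, 14), "No": F(5, 14)}
cond = {
    "Yes": {"Outlook=Sunny": F(2, 9), "Temperature=Cool": F(3, 9),
            "Humidity=High": F(3, 9), "Wind=Strong": F(3, 9)},
    "No": {"Outlook=Sunny": F(3, 5), "Temperature=Cool": F(1, 5),
           "Humidity=High": F(4, 5), "Wind=Strong": F(3, 5)},
}
x = ["Outlook=Sunny", "Temperature=Cool", "Humidity=High", "Wind=Strong"]

# Unnormalised posterior per class: product of the conditionals times the prior.
scores = {}
for c in prior:
    score = prior[c]
    for attribute_value in x:
        score *= cond[c][attribute_value]
    scores[c] = score

label = max(scores, key=scores.get)
# Dividing by the sum of the scores gives proper posteriors that add up to one.
posterior = {c: s / sum(scores.values()) for c, s in scores.items()}

for c in scores:
    print(c, round(float(scores[c]), 4), round(float(posterior[c]), 4))
print("label:", label)
```

The two scores are not themselves probabilities of the classes; normalising them shows how strongly the model favours the winning label.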


Relevant Issues

Violation of Independence Assumption

For many real world tasks, $P(X_1, \dots, X_n \mid C) \neq P(X_1 \mid C) \cdots P(X_n \mid C)$

Nevertheless, naïve Bayes works surprisingly well anyway!

Zero conditional probability Problem

If no training example of class $c_i$ contains the attribute value $X_j = a_{jk}$, then $\hat{P}(X_j = a_{jk} \mid C = c_i) = 0$

In this circumstance, $\hat{P}(x_1 \mid c_i) \cdots \hat{P}(a_{jk} \mid c_i) \cdots \hat{P}(x_n \mid c_i) = 0$ during test

For a remedy, conditional probabilities are estimated with

$\hat{P}(X_j = a_{jk} \mid C = c_i) = \dfrac{n_c + m p}{n + m}$

$n_c$: number of training examples for which $X_j = a_{jk}$ and $C = c_i$

$n$: number of training examples for which $C = c_i$

$p$: prior estimate (usually, $p = 1/t$ for $t$ possible values of $X_j$)

$m$: weight to prior (number of "virtual" examples, $m \geq 1$)
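A short sketch of the m-estimate, applied to the zero entry P(Outlook=Overcast|Play=No) = 0/5 from the Play Tennis tables. The uniform prior p = 1/3 follows the "usually" case on the slide; the weight m = 3 is an arbitrary choice for illustration, and the function name is hypothetical.

```python
from fractions import Fraction as F

def m_estimate(n_c, n, p, m):
    """Smoothed estimate (n_c + m*p) / (n + m) of P(X_j = a_jk | C = c_i)."""
    return (n_c + m * p) / (n + m)

# Outlook has t = 3 possible values, so p = 1/3; m = 3 is an assumed weight.
p, m = F(1, 3), 3

# Counts among the n = 5 Play=No examples, read off the Outlook table.
no_counts = {"Sunny": 3, "Overcast": 0, "Rain": 2}
smoothed = {value: m_estimate(n_c, 5, p, m) for value, n_c in no_counts.items()}

for value, estimate in smoothed.items():
    print(f"P(Outlook={value} | Play=No) ~ {estimate}")
```

With p = 1/t the smoothed estimates for one attribute still sum to one, and the previously zero entry becomes small but positive, so a single unseen value no longer forces the whole product to zero.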


Relevant Issues

Continuous-valued Input Attributes

An attribute can take infinitely many values, so probability tables cannot be used

Conditional probability modeled with the normal distribution

$\hat{P}(X_j \mid C = c_i) = \dfrac{1}{\sqrt{2\pi}\,\sigma_{ji}} \exp\left(-\dfrac{(X_j - \mu_{ji})^2}{2\sigma_{ji}^2}\right)$

$\mu_{ji}$: mean (average) of attribute values $X_j$ of examples for which $C = c_i$

$\sigma_{ji}$: standard deviation of attribute values $X_j$ of examples for which $C = c_i$

Learning Phase: for $\mathbf{X} = (X_1, \dots, X_n)$, $C = c_1, \dots, c_L$

Output: $n \times L$ normal distributions and $P(C = c_i)$, $i = 1, \dots, L$

Test Phase: for $\mathbf{X}' = (X'_1, \dots, X'_n)$

Calculate conditional probabilities with all the normal distributions

Apply the MAP rule to make a decision
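For a single continuous attribute, the learning and test steps can be sketched as below. The temperature readings are hypothetical, and the population standard deviation (`statistics.pstdev`) is an assumption, since the slides do not say whether the population or the sample formula is intended.

```python
import math
from statistics import mean, pstdev

def gaussian_pdf(x, mu, sigma):
    """Normal density 1/(sqrt(2*pi)*sigma) * exp(-(x - mu)^2 / (2*sigma^2))."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / (math.sqrt(2 * math.pi) * sigma)

def fit(values):
    """Learning phase for one attribute and one class: estimate (mu, sigma)."""
    return mean(values), pstdev(values)

# Hypothetical temperature readings for each class.
temps_yes = [20.0, 22.0, 24.0]
temps_no = [28.0, 30.0, 32.0]

mu_yes, sigma_yes = fit(temps_yes)
mu_no, sigma_no = fit(temps_no)

# Test phase: evaluate the class-conditional densities at a new reading.
x_new = 23.0
like_yes = gaussian_pdf(x_new, mu_yes, sigma_yes)
like_no = gaussian_pdf(x_new, mu_no, sigma_no)
print(like_yes, like_no)
```

These density values take the place of the table lookups in the discrete case: they are multiplied across attributes and by the class prior before the MAP rule is applied.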


Conclusions

Naïve Bayes is based on the independence assumption

Training is very easy and fast; it only requires considering each attribute in each class separately

Testing is straightforward; it only requires looking up tables or calculating conditional probabilities with normal distributions

A popular generative model

Performance is competitive with most state-of-the-art classifiers even when the independence assumption is violated

Many successful applications, e.g., spam mail filtering

A good candidate for a base learner in ensemble learning

Apart from classification, naïve Bayes can do more
